Berkeley and Sciences Po Dual Degree Program

Admission to the program is highly competitive. Students must be approved for admission by both UC Berkeley and Sciences Po to be considered for the Dual Degree Program. Students are evaluated according to the following criteria: academic achievement, perceived intellectual readiness, and the applicant's own representation of their experience, ideas, and aspirations.

Students are admitted to the program as Freshmen (first-year students). To apply to the Dual Degree, students must complete the standard University of California application for UC Berkeley and the Sciences Po–UC Berkeley Supplemental Application. Complete information on applying to UC Berkeley can be found through the Office of Undergraduate Admissions.

Candidates must meet UC Berkeley and Sciences Po admissions requirements, including English proficiency requirements. Applicants deemed eligible will be invited to an interview before a panel of representatives from Sciences Po and UC Berkeley.

Note: By applying to the program, you have also applied to UC Berkeley as a 4-year degree-seeking student. It is possible to gain admission to UC Berkeley and be denied admission to the Dual Degree Program.

| Date | Milestone |
|---|---|
| August 1 | UC Application opens |
| September 1 | Sciences Po Supplemental Application opens |
| October 1 | Submission opens for both applications (UC Application must be submitted first) |
| November 30 | Deadline to submit both applications |
| December | Deadline to take English proficiency tests (if required) |
| Mid-January | Notification of interview request |
| January-February | Interviews take place |
| February | Admissions decisions announced! |
| May 1* | Deadline to accept offer of admission (*deadline extended to May 15) |

Applications

Applications for 2024 admission are now closed. Fall 2025 admission applications will open in September 2024.

Application Links

*You will be prompted to create an account through UC Berkeley before you can access the Sciences Po Supplemental Application. This account is not linked to the account you will create for the University of California application.

UC Berkeley Application

Students apply directly to the University of California and select Berkeley as a campus. Students must meet all of the guidelines to apply based on their residency, whether California resident, out of state, or international student. Please see the Freshman Admission Page for more information.

For information on the Personal Insight Questions, see this Guide for Freshman Applicants.

Please note that SAT/ACT test scores are not used for any part of the Dual Degree admission process, including evaluation/holistic review, selection, or scholarship processes. For more information, please visit this University of California admissions page.

Sciences Po Supplemental Application

Supplemental application essay.

In 750 to 1,000 words, describe your motivations for applying to the Dual Degree program between Sciences Po and Berkeley and why you chose the specific Sciences Po campus and/or program of study (Le Havre, Menton, or Reims).

Explain how and why the multidisciplinary education environments at Sciences Po and within Berkeley’s College of Letters & Science will help you to achieve your personal goals, both academic and professional. Please include specific examples that address the Sciences Po campus’s region of focus. Be sure to explain how you foresee your involvement in campus life outside of the classroom at both institutions.

The essay should be written in English.

Students must include a resume or CV as part of their application. This should include activities, organizations, skills, internships, and anything else done while in high school, outside of coursework.

Recommendation Letters (Optional)

Students can submit up to two (2) letters of recommendation for the Sciences Po supplemental application. Letters can be written in English or in French.

Recommendation letters can come from the following (we highly suggest at least one letter come from an academic source):

- Anyone else you deem appropriate

*If asked to submit letters of recommendation by UC Berkeley following application submission, please note that the letters submitted to the Dual Degree program are not forwarded to the UC Berkeley Admissions Office and must be submitted separately.

If invited, online interviews will take place between late January and early February.

Interviews include Dual Degree Program Coordinators and Sciences Po Campus Directors, Deans, and/or officials. Be prepared to answer questions about the campus, region, current events, and personal reasons for interest in the program.

ADMISSIONS 2021: A NEW PROCEDURE

Sciences Po’s new and "reformed" admissions procedure will open in autumn of 2020 for applications for the 2021/2022 academic year. All candidates, both French and international, will be evaluated in the same ways on identical and transparent criteria.

This plural and demanding assessment process is based on four separate evaluations: students are required to have an excellent academic record, confirmed in the results of their final secondary school exams. They should be able to present themselves and express their motivations in writing, and convince a jury in an interview.

Four equally weighted evaluations to identify the best talents

The first three evaluations of the admissions procedure are assembled in a single application that shows the full diversity of the candidate’s academic and extra-curricular skills. The application should demonstrate that the candidate is both knowledgeable and well-rounded. It consists of three marks out of 20:

- A mark out of 20 for the candidate’s results in the Baccalaureate exams or foreign equivalent

- A mark out of 20 for his or her academic performance in the last three years of secondary school: this takes into account all grades obtained over the three years, but also the student’s overall progress and feedback from teachers.

- A mark out of 20 for three written pieces: a personal statement in which the candidate describes his or her activities and areas of interest, another in which he or she defends his or her motivations and reasons for applying to Sciences Po, and an essay.

In order to proceed to the interview, the fourth and final evaluation of the admissions procedure, candidates must obtain a mark equal to or higher than a minimum mark out of 60 to be defined by the Sciences Po admissions jury each year.

The interview: a guided exercise to complete the evaluation process

The interview provides an important opportunity for the institution to meet the candidate. It gives a new perspective on the candidate, which is distinct from the other evaluations since the examiners are not given access to the application. Students will be assessed on their ability to engage in discussion, their attitude in response to questions, and the strength of their potential for success at Sciences Po.

The discussion will be conducted remotely. It will last 30 minutes and consist of three sequences:

- The candidate introduces him or herself

- The candidate is asked to choose from two images that he/she will comment on and analyse

- The candidate discusses his or her motivations with the examiners

After the interview, the four marks out of 20 are added to give a final admission mark out of 80. For the candidate to be offered a place, this mark must meet or exceed a minimum admission mark set by the Sciences Po admissions jury each year.
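The arithmetic described above can be sketched in a few lines of Python. This is only an illustration: the class and function names are hypothetical, and the two threshold values in the usage example are placeholders, since the actual minimum marks are set by the Sciences Po admissions jury each year.

```python
from dataclasses import dataclass

MAX_MARK = 20  # each of the four evaluations is marked out of 20


@dataclass
class Application:
    """The three marks that make up the written application (hypothetical structure)."""
    exam_results: float     # Baccalaureate exams or foreign equivalent
    academic_record: float  # last three years of secondary school
    written_pieces: float   # personal statement, motivations, essay


def _check(mark: float) -> float:
    """Reject marks outside the 0-20 range."""
    if not 0 <= mark <= MAX_MARK:
        raise ValueError(f"mark must be between 0 and {MAX_MARK}, got {mark}")
    return mark


def application_total(app: Application) -> float:
    """Sum of the three application marks, out of 60."""
    return (_check(app.exam_results)
            + _check(app.academic_record)
            + _check(app.written_pieces))


def reaches_interview(app: Application, interview_threshold: float) -> bool:
    """True if the mark out of 60 meets or exceeds the jury's minimum."""
    return application_total(app) >= interview_threshold


def final_mark(app: Application, interview: float) -> float:
    """Sum of all four marks, out of 80."""
    return application_total(app) + _check(interview)


def is_admitted(app: Application, interview: float, admission_threshold: float) -> bool:
    """True if the mark out of 80 meets or exceeds the jury's minimum."""
    return final_mark(app, interview) >= admission_threshold
```

For example, with placeholder thresholds of 45 out of 60 and 62 out of 80 (assumed values, not published ones), a candidate with application marks of 16, 15, and 14.5 would proceed to the interview, and an interview mark of 17 would bring the final mark to 62.5.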

At every stage of the admissions process, decisions are made by an experienced panel of evaluators, combining secondary school teachers and members of the teaching and academic staff at Sciences Po. This panel conducts a deep and detailed assessment of each application, without the input of any algorithm.

Secondary school students preparing to sit the French Baccalaureate must apply via the Parcoursup admissions platform, following the platform’s own deadlines. For international secondary school students who will obtain a foreign high school diploma, admissions will continue to be conducted through the Sciences Po admissions website, with results announced to candidates on a rolling basis.

- Applying via Parcoursup, following the French platform's deadlines
  - From mid-January to late March: applications open
  - During April/May: applications evaluated
  - May: interviews and admissions results

- Applying on the Sciences Po admissions website
  - From late November: applications open
  - Between November and June: evaluation of applications and interviews

Applications are assessed on a rolling basis and the timeline for announcing decisions varies depending on when the completed application is submitted and to which admissions jury it is allocated. On average, candidates receive their response between six and eight weeks after submitting their application.

More information: https://www.sciencespo.fr/admissions/en/undergraduate.html

An extension of Sciences Po’s policy of social inclusion and equal opportunities

Candidates from secondary schools participating in Sciences Po’s Equal Opportunity Programme (CEP) apply through the Parcoursup platform and pass the same evaluations as all other candidates, but are admitted through a specific admissions pathway. The application phase allows for their specific background as part of the CEP to be taken into account.

The number of secondary schools participating in the programme will increase from 106 to 200 by 2023, taking the proportion of CEP admissions from 10% to 15% of each new cohort. The aim is for scholarship holders to make up 30% of all incoming undergraduate classes.

Application costs have now been harmonised: all candidates will pay 150 euros to submit their application, with the exception of scholarship holders, who will be exempt. With interviews conducted remotely, candidates will not need to cover any transport costs.

SOSciencesPo – Help & Advice

The site by Sciences Po students for future Sciences Po students!

Seventeen things to know before Sciences Po

The original version of this article – by Cassandra Betts – has been published in The Sundial (Sciences Po’s campus of Reims).

Starting school at Sciences Po is scary. For many of us it is the first time living away from our parents and being in a foreign country. You not only have to worry about making the perfect first impression on your fellow students and creating the ideal schedule during IPs (which will always be terrifying, regardless of how many times you do it), but you also need to deal with dozens of other trivialities, like cooking for yourself, buying housing insurance and setting up a phone plan.

Despite all these struggles, I guarantee you, everything will get sorted out. Next year you’ll come back to Reims, happy to see those big red doors at the front of Sciences Po. I have to admit, they’ll always be a little intimidating, but they won’t be scary anymore. You’ll have done dozens of oral presentations, figured out how to survive midterms week, and be ready to take on a new year. You’ll always be learning new things, but here’s a list of things that, if I’d known them last year, maybe would have made my life a little easier.

1. Have high expectations, because this is an incredible place with incredible people and courses, but make sure they’re not too high. Once I realized that just because Sciences Po has a library that looks like it came straight out of Harry Potter doesn’t mean it’s perfect, I was able to appreciate its strengths and not tear it apart because of its weaknesses. Yes, it’s true that the administration is disorganized and frustrating, and that you may end up with a few teachers that you don’t like, but in the end, all the amazing teachers, friends and experiences that you have will make up for it.

2. Practically nothing is open on Sundays. If you’re from North America this can be quite shocking, so make sure you get all your toilet paper and chocolate bars on Saturday night.

3. You may not have met your best friends during orientation week, and that’s okay. I remember going to every bar night, brunch, club night and day in the park on edge because I was trying to figure out which classmates I would be spending my Friday nights with for the next two years. As it turns out, I didn’t meet the people who are now my closest friends until a few months into the semester.

4. Ask questions! Ask your TAs questions, ask the administration questions (although they may not answer you), ask the 2As questions, and ask your fellow 1As questions. Ultimately, we’re all going through the same thing, and anyone will be happy to answer your queries on Facebook or in person. Whether you want someone to look over your paper, give you advice on teachers, or tell you where the best kebab place is, never be afraid to ask.

5. Find a place to study that works for your personal learning style, and start creating good study habits from the get-go. The first few weeks at Sciences Po can be deceiving. The reading lists don’t yet seem bottomless and the deadlines for essays and oral presentations are still specks on the horizon. Use the free time to read ahead in classes, and to figure out if you learn better in the bustle of Oma’s coffee shop, the calm of the old library, or the privacy of your own room. When you have a deadline looming, it’s comforting to know that you already have a spot where you can focus and do your best work.

6. Carrefour will be your new happy place. It’s one of the few places that is actually open on Sundays (for a few hours at least) and the one on Rue Gambetta has everything a starving student needs to live comfortably. You can buy cutlery, plates, ice cream, sheets, pillows, blankets and any other necessities that are surprisingly difficult to track down in Reims. Also, on Thursdays the items in the baked goods section are ten percent off. What you choose to do with that little tidbit of information is up to you (I recommend buying a pie, but that’s just me).

7. Don’t feel obligated to go to every event that is being hosted on campus. Our BDE, BDA, and AS are awesome at planning events, and the number of conferences on campus is simply astounding. It is, however, physically impossible to go to every event, and you’ll just burn yourself out if you try to.

8. That being said, make sure you do attend events. All of the student groups do a fantastic job organizing conferences, and many compelling people come to speak to us. Try to attend a few events that are out of your comfort zone and you may find a new passion, or at least learn something interesting.

9. Schedule time to travel. Last year I spent all my time studying, or saying that I was going to study when I really spent the entire day lying on my bed eating ramen. As a Canadian, part of the big draw of Sciences Po was the opportunity to study in France. You need to balance the school part of your experience with the exploring part.

10. Visit your TAs and lecturers during their office hours. It can seem counterproductive to waste time visiting your TAs, especially if you don’t specifically need their help and have a million other things that need to get done, but in the long run the relationships you build with them will be worth a lot.

11. Participate in extracurriculars. You have to join a club in order to get credits for your group project at the end of the year, but don’t just do it for the credits. If you like sports, join a sports team. If you love writing, join the Sundial. Some clubs can seem quite intimidating, as they have an application process in order to join. Don’t be scared to apply. If you don’t get the position you were hoping for that’s okay. You’ll find something equally exciting, and there are dozens of clubs that you don’t need to apply to. If you’re really passionate about a particular group that you weren’t admitted to, talk to the person in charge. You may be able to participate even if it’s not for credits.

12. Get to know your godparent. Sometimes they won’t be very good at planning a time to meet, so take the initiative. They’ll be a great resource, and it will be nice to know someone who’s not in your year. They can give you their old notes, tell you which TAs to pick, and they’ll be someone who you can bombard with questions without feeling too shy.

13. Make an effort to talk to people who are not from your country or your program. It’s comforting to spend time with people who speak your language and share your culture, but it’s also important to take advantage of the diversity on campus and get out of your comfort zone.

14. The Sciences Po method is not going to make or break your oral presentation. Orientation week may have stressed the two parts-two sub-parts format, but make sure you don’t get so caught up in structure that you forget about content. Some teachers don’t even require the Sciences Po method. Email your teacher your outline and work through how to best structure your presentation based on what you’re trying to say. It may take a few times to figure it out, but you’ll learn from the process and see a huge improvement by the end of the year.

15. A few bad grades are not the end of the world. Last year I was crushed after bombing my first few assignments. I felt like I didn’t belong here and that I had made a terrible decision to come to Sciences Po, where everyone else had it together and knew so much more than me. Of course, in hindsight, it’s obvious that everyone was struggling as well, and that the quizzes and oral presentations that I had done poorly on were not representative of the entire semester. After talking with my teachers, I figured out what they were expecting and what I had to work on in order to meet their expectations.

16. Explore Reims. Reims may seem kind of sleepy, but if you take the time to read up on its past, it’s actually a really cool place, and you come to realize that almost everything around you has some historical significance. If the brown and grey of the city is kind of depressing you, head up to Parc de Champagne or cross to the other side of the canal. There’s some really nice trees, the water doesn’t look quite as murky, and I find it very uplifting just to see some greenery.

17. Have fun! This is definitely one of the most cliché pieces of advice ever given, but it’s so important just to take a break from everything and enjoy yourself. Whether it’s travelling to Paris for the day, staying in for a movie and pizza night on Friday, going to the bar, or playing a game of pick-up basketball, do things for yourself. School is important, and it will be gratifying to see all your hard work pay off, but balance is essential. Make sure you’re happy, because your two years will go by way too quickly!

Sciences Po motivation letter

Why do governments function the way they do? Obey the law or bypass it? Go to war or create peace? These are few of the questions I seek to explore and understand at Sciences Po. This interest of the world surrounding us is in my DNA and reflects my inquisitive nature. I always seek to deepen my understanding of how countries or groups interact with one another and the reasons they act the way they do.

Growing up, I have lived in Saudi Arabia, UAE, Switzerland, England, and Lebanon. These settings made me truly multicultural and international. I became versatile and adaptable to change. These qualities will give me an edge in transitioning smoothly into university life.

My interest in global affairs developed during my participation in Model United Nations. It was during these conferences that my enthusiasm for debate was born. Between rapid fire negotiations and propositions, I was able to put forth solid arguments and compromise to obtain the most effective solutions. I discussed current issues in the mindset of the world’s leaders and so was able to look at all aspects of a problem with a solution in mind. Nowadays, problems in our world are interlinked across many fields. Sciences Po’s multidisciplinary program will enable me to master several subjects in order to contribute to finding proper solutions.

Writing and editing for my school newspaper enabled me to exercise my passion for writing and gave me a feel of what being a journalist might entail later on. In this position, I was trained to work efficiently and meet deadlines, a vital skill for any workplace. However, I was truly rewarded in that I was able to control a large part of what went into the paper and how it would be written. I would almost directly channel what our viewers would read. Bringing the students together in this way and revealing overlooked issues gave me the most satisfaction and showed me how exciting the possibilities in the real world could be.

What really impresses me about Sciences Po is its focus on maintaining an international environment. Because I aspire to work in a multinational newspaper with people from all kinds of backgrounds, it has become a crucial objective of mine to seek this in my university life.

In addition, Sciences Po’s small classroom sizes appeal to me as they make for more interactive lessons involving debates and discussions. I also like the critical thinking approach Sciences Po has towards the courses. I believe training our minds to think independently and creatively will allow us to deal with issues in our jobs and life more effectively.

With the high quality of education offered to me at Sciences Po, I will have exceptional opportunities to study journalism at the world’s most respected universities Sciences Po partners with. I will be able to experience a completely different side of my topic in another country during my third year and then during my master’s degree.

I am excited by the vibrant student life at your university and plan to continue my active lifestyle. I hope to write for your school paper, The Sundial, join your debate team, and take yoga classes, as I am an avid student, practicing four times a week.

Throughout my travels, I discovered that language was the only way to effectively communicate with people. It is through Arabic, for instance, that I was able to connect with Lebanese people and embrace the culture in a way that I couldn’t have done through English. I made it a point to learn as many languages as I could. I completed the CNED French course independently and became fluent in the language, and took several Spanish courses.

Therefore, it is with great enthusiasm and interest that I look forward to study in France, where I can further master my French. France is unique in its own culture; after all it isn’t the world’s top tourist destination without reason. Not only is its central position in Europe appealing, but its history, art, museums and food all call for discovery.

My first choice is the Reims campus. At Reims, I will be studying two of the largest political influences on the world. It will be interesting to see how these regions work together to solve world issues. Now that problems are increasingly widespread and cross borders, Europe and North America must work hand in hand. Furthermore, the Reims campus makes it possible for me to study in France in the first place, as its program is in English.

I am also interested in the Dijon campus. As there are considerable amounts of conflict in Eastern Europe, there is a lot of potential to work on in the region. It contains emerging economies and numerous resources, indicating it will play an important role in the future.

I want to create my network at your university made of ambitious thinkers who, like me, want to make an impact. I want to share ideas with them and explore how we could formulate the future. At Sciences Po, I hope to acquire an experience that will shape me into the driven, successful journalist I later want to become and to help me achieve my goals for change, all while bringing something different to the table.

Just a warning: You should not post your essays on the forums where everyone can see them, as (sadly) some people may plagiarize and whatnot. You should instead PM people who agree to read your essay and provide feedback.

@sarahjk Hey there! Nice to see someone else applying to Sci-Po too. Im applying for Le Havre campus. u got ur interview yet?

I have made a number of editorial changes/suggestions. I think the last metaphor of bringing something to the table falls flat, but, in general, great job!! (and don’t post essays online!!)

Why do governments function the way they do? Obey laws or bypass them? Make war or create peace? These are few of the questions I seek to explore at Sciences Po. Having an interest of the world around us is in my DNA and is heightened by my inquisitive nature. I always seek to deepen my understanding of how countries or groups interact with one another and the reasons they act the way they do.

Growing up, I lived in Saudi Arabia, UAE, Switzerland, England, and Lebanon. These experiences have made me truly multicultural and international. At the same time, I became versatile and adaptable to change. I believe that these qualities will give me an edge in transitioning smoothly into university life.

My interest in global affairs developed as a result of being an active participant in my schools’ Model United Nations. It was during these conferences that my enthusiasm for debate was born. Between rapid fire negotiations and propositions, I was able to put forth solid arguments and seek compromises to obtain the most effective solutions. I discussed current issues in the mindset of the world’s leaders and so was able to look at all aspects of a problem with a solution in mind. In today’s world, our problems – and their solutions - require an interdisciplinary focus. Sciences Po’s multidisciplinary program will enable me to master several subjects in seeking to find proper solutions to problems we face.

My work as a writer and editor for my school newspaper has enabled me to exercise my passion for writing and gave me a feel of what being a journalist might entail. In this position, I had to work efficiently to meet deadlines, a vital skill for any workplace. Moreover, I was truly rewarded in that I was able to control a large part of what went into the paper and how it would be written. Bringing the students together and revealing overlooked issues gave me a great deal of satisfaction and showed me how exciting the possibilities in the real world could be.

What really impresses me about Sciences Po is its focus on maintaining an international environment. Because I aspire to work in a multinational newspaper with people from many different backgrounds, this is something that I seek to accomplish in my university life.

In addition, Sciences Po’s small classroom sizes appeal to me as they make for more interactive lessons involving debates and discussions, and I believe in the approach involving critical thought that Sciences Po has in its courses. I believe training our minds to think independently and creatively will allow us to deal with issues in our jobs and life more effectively.

With the high quality of education offered to me at Sciences Po, I will have exceptional opportunities to study journalism at the world’s most respected universities with which Sciences Po has partnerships. I will be able to experience a completely different viewpoint with respect to my topic through studying in another country during my third year and again when working towards my master’s degree.

I am excited by the vibrant student life at your university and plan to continue my active lifestyle. I hope to write for your school paper, The Sundial, join your debate team, and continue to take yoga classes, which I currently do several times a week.

Throughout my travels, I discovered that language was the only way to effectively communicate with people. It is through Arabic, for instance, that I was able to connect with Lebanese people and embrace the culture in a way that I couldn’t have done if I only spoke English. I made it a point to learn as many languages as I could. I completed the CNED French course independently and became fluent in the language, and I have taken several Spanish courses as well.

Therefore, it is with great enthusiasm and interest that I look forward to studying in France, where I can continue my mastery of French. France has a unique, well-defined and well-respected culture; after all it isn’t the world’s top tourist destination without reason. Not only is its central position in Europe of great interest to me, but its history, art, museums and food are all things that I am anxious to explore.

My first choice is the Reims campus. At Reims, I will be studying two of the largest political influences on the world (WHICH ONES?). It will be interesting to see how these regions work together to solve world issues. Now that problems are increasingly widespread and cross borders, Europe and North America must work hand in hand in solving problems. Furthermore, the Reims campus makes it possible for me to study in France in the first place, as its program is in English.

I want to create my network at your university made of ambitious thinkers who, like me, want to make an impact. I want to share ideas with them and explore how we could formulate the future. At Sciences Po, I hope to acquire an experience that will shape me into the driven, successful journalist I want to become and to help me achieve my goals for change, all while bringing something different to the table.

Applying to Medical School with AMCAS®

The American Medical College Application Service® (AMCAS®) is the AAMC's centralized medical school application processing service. Most U.S. medical schools use the AMCAS program as the primary application method for their first-year entering classes.

The AMCAS applicant guide outlines the current AMCAS application process, policies, and procedures. This comprehensive resource helps you understand how to complete your AMCAS application.

These pages outline the sections of the AMCAS® application, including the Choose Your Medical School Tool. Full details can be found in the AMCAS Applicant Guide. Visit the FAQ page for answers to your questions.

Use the AAMC American Medical College Application Service® (AMCAS®) Medical Schools and Deadlines search tool to find application deadlines at participating regular MD programs.

Frequently asked questions (FAQs) regarding the American Medical College Application Service® (AMCAS®) application process. For more detailed FAQs on the AMCAS Letter of Evaluation process including information for letter authors please visit the AMCAS How to Apply section of the site.

The AAMC American Medical College Application Service® (AMCAS®) resources, tools, and tutorials for premed students preparing to apply to medical schools.

The American Medical College Application Service® (AMCAS®) application policies are established protocols for applicants and admission officers.

Send us a message .

Monday-Friday, 9 a.m.-7 p.m. ET Closed Wednesday, 3-5 p.m. ET

The 2025 AMCAS application is now open. If you wish to start medical school in Fall 2025, please complete and submit the 2025 AMCAS application.

As of June 7 AMCAS is:

Marking transcripts as "Received" that were delivered on or before:

Paper (mailed) – June 6

Parchment – June 5

National Student Clearinghouse – June 7

Processing applications that reached "Ready for Review" on May 28.

Processing Academic Change Requests submitted on June 6.

Outline of the current AMCAS application process, policies, and procedures.

This resource is designed to help you prepare your materials for the AMCAS® application but does not replace the online application.

The application processing fee is $175 and includes one medical school designation. Additional school designations are $46 each. Tax, where applicable, will be calculated at checkout.

If approved for the Fee Assistance Program, you will receive a waiver for all AMCAS fees for one (1) application submission with up to 20 medical school designations ($1,030 value). Benefits are not retroactive.

- Open access

- Published: 03 June 2024

Applying large language models for automated essay scoring for non-native Japanese

Wenchao Li & Haitao Liu

Humanities and Social Sciences Communications volume 11, Article number: 723 (2024)

- Language and linguistics

Recent advancements in artificial intelligence (AI) have led to an increased use of large language models (LLMs) for language assessment tasks such as automated essay scoring (AES), automated listening tests, and automated oral proficiency assessments. The application of LLMs for AES in the context of non-native Japanese, however, remains limited. This study explores the potential of LLM-based AES by comparing the efficiency of different models, i.e. two conventional machine learning technology-based methods (Jess and JWriter), two LLMs (GPT and BERT), and one Japanese local LLM (Open-Calm large model). To conduct the evaluation, a dataset consisting of 1400 story-writing scripts authored by learners with 12 different first languages was used. Statistical analysis revealed that GPT-4 outperforms Jess and JWriter, BERT, and the Japanese language-specific trained Open-Calm large model in terms of annotation accuracy and predicting learning levels. Furthermore, by comparing 18 different models that utilize various prompts, the study emphasized the significance of prompts in achieving accurate and reliable evaluations using LLMs.

Conventional machine learning technology in AES

AES has experienced significant growth with the advancement of machine learning technologies in recent decades. In the earlier stages of AES development, conventional machine learning-based approaches were commonly used. These approaches involved the following procedures: (a) A dataset of essays is fed to the machine learning system; it serves as the basis for training the model and establishing patterns and correlations between linguistic features and human ratings. (b) The machine learning model is trained using linguistic features that best represent human ratings and can effectively discriminate learners’ writing proficiency. These features include lexical richness (Lu, 2012; Kyle and Crossley, 2015; Kyle et al. 2021), syntactic complexity (Lu, 2010; Liu, 2008), and text cohesion (Crossley and McNamara, 2016), among others. Conventional machine learning approaches in AES require human intervention, such as manual correction and annotation of essays, in order to create a labeled dataset for training the model. Several AES systems have been developed using conventional machine learning technologies. These include the Intelligent Essay Assessor (Landauer et al. 2003), the e-rater engine by Educational Testing Service (Attali and Burstein, 2006; Burstein, 2003), MyAccess with the IntelliMetric scoring engine by Vantage Learning (Elliot, 2003), and the Bayesian Essay Test Scoring system (Rudner and Liang, 2002). These systems have played a significant role in automating the essay scoring process and providing quick and consistent feedback to learners. However, conventional machine learning approaches rely on predetermined linguistic features and often require manual intervention, making them less flexible and potentially limiting their generalizability to different contexts.

In the context of the Japanese language, conventional machine learning-incorporated AES tools include Jess (Ishioka and Kameda, 2006) and JWriter (Lee and Hasebe, 2017). Jess assesses essays by deducting points from the perfect score, utilizing the Mainichi Daily News newspaper as a database. The evaluation criteria employed by Jess encompass various aspects, such as rhetorical elements (e.g., reading comprehension, vocabulary diversity, percentage of complex words, and percentage of passive sentences), organizational structures (e.g., forward and reverse connection structures), and content analysis (e.g., latent semantic indexing). JWriter employs linear regression analysis to assign weights to various measurement indices, such as average sentence length and total number of characters. These weights are then combined to derive the overall score. A pilot study involving the Jess model was conducted on 1320 essays at different proficiency levels, including primary, intermediate, and advanced. However, the results indicated that the Jess model failed to significantly distinguish between these essay levels. Out of the 16 measures used, four measures, namely median sentence length, median clause length, median number of phrases, and maximum number of phrases, did not show statistically significant differences between the levels. Additionally, two measures exhibited between-level differences but lacked linear progression: the number of attributive declined words and the Kanji/kana ratio. On the other hand, the remaining measures, including maximum sentence length, maximum clause length, number of attributive conjugated words, maximum number of consecutive infinitive forms, maximum number of conjunctive-particle clauses, k characteristic value, percentage of big words, and percentage of passive sentences, demonstrated statistically significant between-level differences and displayed linear progression.

Both Jess and JWriter exhibit notable limitations, including the manual selection of feature parameters and weights, which can introduce biases into the scoring process. The reliance on human annotators to label non-native language essays also introduces potential noise and variability in the scoring. Furthermore, an important concern is the possibility of system manipulation and cheating by learners who are aware of the regression equation utilized by the models (Hirao et al. 2020 ). These limitations emphasize the need for further advancements in AES systems to address these challenges.

Deep learning technology in AES

Deep learning has emerged as one of the approaches for improving the accuracy and effectiveness of AES. Deep learning-based AES methods utilize artificial neural networks that mimic the human brain’s functioning through layered algorithms and computational units. Unlike conventional machine learning, deep learning autonomously learns from the environment and past errors without human intervention. This enables deep learning models to establish nonlinear correlations, resulting in higher accuracy. Recent advancements in deep learning have led to the development of transformers, which are particularly effective in learning text representations. Noteworthy examples include bidirectional encoder representations from transformers (BERT) (Devlin et al. 2019 ) and the generative pretrained transformer (GPT) (OpenAI).

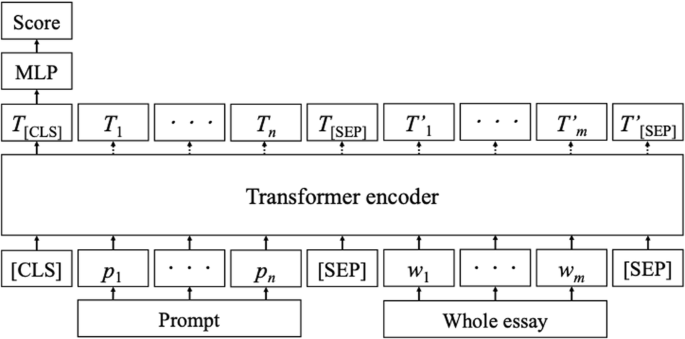

BERT is a language representation model that utilizes a transformer architecture and is trained on two tasks: masked language modeling and next-sentence prediction (Hirao et al. 2020; Vaswani et al. 2017). In the context of AES, BERT follows specific procedures, as illustrated in Fig. 1: (a) the tokenized prompts and essays are taken as input; (b) special tokens, such as [CLS] and [SEP], are added to mark the beginning and separation of prompts and essays; (c) the transformer encoder processes the prompt and essay sequences, resulting in hidden layer sequences; (d) the hidden layers corresponding to the [CLS] tokens (T[CLS]) represent distributed representations of the prompts and essays; and (e) a multilayer perceptron uses these distributed representations as input to obtain the final score (Hirao et al. 2020).

Fig. 1 AES system with BERT (Hirao et al. 2020).

The training of BERT using a substantial amount of sentence data through the Masked Language Model (MLM) allows it to capture contextual information within the hidden layers. Consequently, BERT is expected to be capable of identifying artificial essays as invalid and assigning them lower scores (Mizumoto and Eguchi, 2023). In the context of AES for nonnative Japanese learners, Hirao et al. (2020) combined the long short-term memory (LSTM) model proposed by Hochreiter and Schmidhuber (1997) with BERT to develop a tailored automated essay scoring system. The findings of their study revealed that the BERT model outperformed both the conventional machine learning approach utilizing character-type features such as “kanji” and “hiragana”, as well as the standalone LSTM model. Takeuchi et al. (2021) presented an approach to Japanese AES that eliminates the requirement for pre-scored essays by relying solely on reference texts or a model answer for the essay task. They investigated multiple similarity evaluation methods, including frequency of morphemes, idf values calculated on Wikipedia, LSI, LDA, word-embedding vectors, and document vectors produced by BERT. The experimental findings revealed that the method utilizing the frequency of morphemes with idf values exhibited the strongest correlation with human-annotated scores across different essay tasks. The utilization of BERT in AES encounters several limitations. First, essays often exceed the model’s maximum length limit. Second, only score labels are available for training, which restricts access to additional information.

Mizumoto and Eguchi (2023) were pioneers in employing the GPT model for AES in non-native English writing. Their study focused on evaluating the accuracy and reliability of AES using the GPT-3 text-davinci-003 model, analyzing a dataset of 12,100 essays from the corpus of nonnative written English (TOEFL11). The findings indicated that AES utilizing the GPT-3 model exhibited a certain degree of accuracy and reliability. They suggest that GPT-3-based AES systems hold the potential to provide support for human ratings. However, applying a GPT model to AES presents a unique natural language processing (NLP) task that involves considerations such as nonnative language proficiency, the influence of the learner’s first language on the output in the target language, and identifying linguistic features that best indicate writing quality in a specific language. These linguistic features may differ morphologically or syntactically from those present in the learners’ first language, as observed in (1)–(3).

(1) Isolating

我-送了-他-一本-书

Wǒ-sòngle-tā-yī běn-shū

1sg-give.past-him-one.cl-book

“I gave him a book.”

(2) Agglutinative

彼-に-本-を-あげ-まし-た

Kare-ni-hon-o-age-mashi-ta

3sg-dat-book-acc-give.honorification.past

(3) Inflectional

give, give-s, gave, given, giving

Additionally, the morphological agglutination and subject-object-verb (SOV) order in Japanese, along with its idiomatic expressions, pose additional challenges for applying language models in AES tasks (4).

(4) 足-が 棒-に なり-ました

Ashi-ga bo-ni nari-mashita

leg-nom stick-dat become-past

“My leg became like a stick (I am extremely tired).”

The example sentence demonstrates the morpho-syntactic structure of Japanese and the presence of an idiomatic expression. In this sentence, the verb “なる” (naru), meaning “to become”, appears at the end of the sentence. The verb stem “なり” (nari) is followed by morphemes indicating honorification (“ます”, masu) and tense (“た”, ta), showcasing agglutination. While the sentence can be literally translated as “my leg became like a stick”, it carries an idiomatic interpretation that implies “I am extremely tired”.

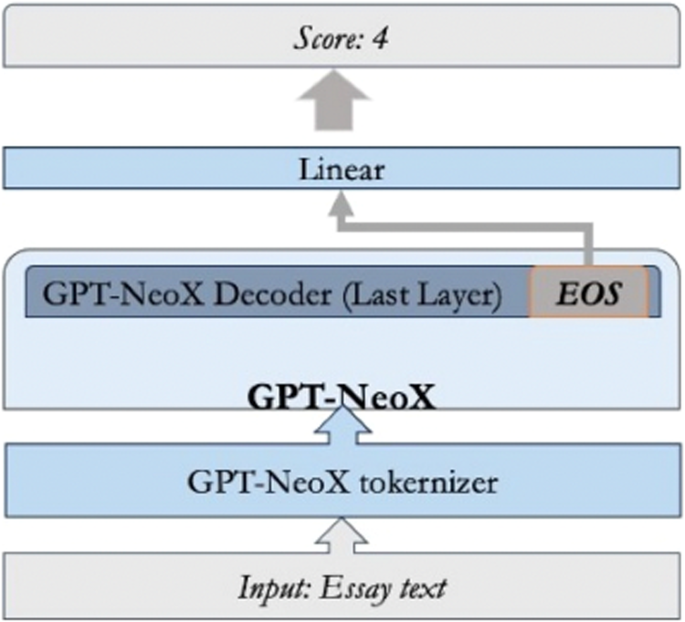

To overcome this issue, CyberAgent Inc. (2023) has developed the Open-Calm series of language models specifically designed for Japanese. Open-Calm consists of pre-trained models available in various sizes, such as Small, Medium, Large, and 7b. Figure 2 depicts the fundamental structure of the Open-Calm model. A key feature of this architecture is the incorporation of the LoRA adapter and the GPT-NeoX framework, which can enhance its language processing capabilities.

Fig. 2 GPT-NeoX Model Architecture (Okgetheng and Takeuchi 2024).

In a recent study, Okgetheng and Takeuchi (2024) assessed the efficacy of Open-Calm language models in grading Japanese essays. The research utilized a dataset of approximately 300 essays, which were annotated by native Japanese educators. The findings of the study demonstrate the considerable potential of Open-Calm language models in automated Japanese essay scoring. Specifically, among the Open-Calm family, the Open-Calm Large model (referred to as OCLL) exhibited the highest performance. However, it is important to note that, as of the current date, the Open-Calm Large model does not offer public access to its server. Consequently, users are required to independently deploy and operate the environment for OCLL. In order to utilize OCLL, users must have a PC equipped with an NVIDIA GeForce RTX 3060 (8 or 12 GB VRAM).

In summary, while the potential of LLMs in automated scoring of nonnative Japanese essays has been demonstrated in two studies—BERT-driven AES (Hirao et al. 2020 ) and OCLL-based AES (Okgetheng and Takeuchi, 2024 )—the number of research efforts in this area remains limited.

Another significant challenge in applying LLMs to AES lies in prompt engineering and ensuring its reliability and effectiveness (Brown et al. 2020; Rae et al. 2021; Zhang et al. 2021). Various prompting strategies have been proposed, such as the zero-shot chain of thought (CoT) approach (Kojima et al. 2022), which involves manually crafting diverse and effective examples. However, manual efforts can lead to mistakes. To address this, Zhang et al. (2021) introduced an automatic CoT prompting method called Auto-CoT, which demonstrates matching or superior performance compared to the CoT paradigm. Another prompt framework is tree of thoughts, which enables a model to self-evaluate its progress at intermediate stages of problem-solving through deliberate reasoning (Yao et al. 2023).

Beyond linguistic studies, there has been a noticeable increase in the number of foreign workers in Japan and Japanese learners worldwide (Ministry of Health, Labor, and Welfare of Japan, 2022 ; Japan Foundation, 2021 ). However, existing assessment methods, such as the Japanese Language Proficiency Test (JLPT), J-CAT, and TTBJ Footnote 1 , primarily focus on reading, listening, vocabulary, and grammar skills, neglecting the evaluation of writing proficiency. As the number of workers and language learners continues to grow, there is a rising demand for an efficient AES system that can reduce costs and time for raters and be utilized for employment, examinations, and self-study purposes.

This study aims to explore the potential of LLM-based AES by comparing the effectiveness of five models: two LLMs (GPT Footnote 2 and BERT), one Japanese local LLM (OCLL), and two conventional machine learning-based methods (linguistic feature-based scoring tools - Jess and JWriter).

The research questions addressed in this study are as follows:

To what extent do the LLM-driven AES and linguistic feature-based AES, when used as automated tools to support human rating, accurately reflect test takers’ actual performance?

What influence does the prompt have on the accuracy and performance of LLM-based AES methods?

The subsequent sections of the manuscript cover the methodology, including the assessment measures for nonnative Japanese writing proficiency, criteria for prompts, and the dataset. The evaluation section focuses on the analysis of annotations and rating scores generated by LLM-driven and linguistic feature-based AES methods.

Methodology

The dataset utilized in this study was obtained from the International Corpus of Japanese as a Second Language (I-JAS) Footnote 3 . This corpus consisted of 1000 participants who represented 12 different first languages. For the study, the participants were given a story-writing task on a personal computer. They were required to write two stories based on the 4-panel illustrations titled “Picnic” and “The key” (see Appendix A). Background information for the participants was provided by the corpus, including their Japanese language proficiency levels assessed through two online tests: J-CAT and SPOT. These tests evaluated their reading, listening, vocabulary, and grammar abilities. The learners’ proficiency levels were categorized into six levels aligned with the Common European Framework of Reference for Languages (CEFR) and the Reference Framework for Japanese Language Education (RFJLE): A1, A2, B1, B2, C1, and C2. According to Lee et al. ( 2015 ), there is a high level of agreement (r = 0.86) between the J-CAT and SPOT assessments, indicating that the proficiency certifications provided by J-CAT are consistent with those of SPOT. However, it is important to note that the scores of J-CAT and SPOT do not have a one-to-one correspondence. In this study, the J-CAT scores were used as a benchmark to differentiate learners of different proficiency levels. A total of 1400 essays were utilized, representing the beginner (aligned with A1), A2, B1, B2, C1, and C2 levels based on the J-CAT scores. Table 1 provides information about the learners’ proficiency levels and their corresponding J-CAT and SPOT scores.

A dataset comprising a total of 1400 essays from the story writing tasks was collected. Among these, 714 essays were utilized to evaluate the reliability of the LLM-based AES method, while the remaining 686 essays were designated as development data to assess the LLM-based AES’s capability to distinguish participants with varying proficiency levels. The GPT 4 API was used in this study. A detailed explanation of the prompt-assessment criteria is provided in Section Prompt . All essays were sent to the model for measurement and scoring.

Measures of writing proficiency for nonnative Japanese

Japanese exhibits a morphologically agglutinative structure where morphemes are attached to the word stem to convey grammatical functions such as tense, aspect, voice, and honorifics, e.g. (5).

(5) 食べ-させ-られ-まし-た-か

tabe-sase-rare-mashi-ta-ka

[eat (stem)-causative-passive voice-honorification-tense.past-question marker]

Japanese employs nine case particles to indicate grammatical functions: the nominative case particle が (ga), the accusative case particle を (o), the genitive case particle の (no), the dative case particle に (ni), the locative/instrumental case particle で (de), the ablative case particle から (kara), the directional case particle へ (e), and the comitative case particle と (to). The agglutinative nature of the language, combined with the case particle system, provides an efficient means of distinguishing between active and passive voice, either through morphemes or case particles, e.g. 食べる taberu “eat.conclusive” (active voice); 食べられる taberareru “eat.conclusive” (passive voice). In the active voice, “パン を 食べる” (pan o taberu) translates to “to eat bread”. In the passive voice, it becomes “パン が 食べられた” (pan ga taberareta), which means “(the) bread was eaten”. Additionally, different conjugations of the same lemma are counted as one type in order to ensure a comprehensive assessment of the language features. For example, 食べる taberu “eat.conclusive”, 食べている tabeteiru “eat.progressive”, and 食べた tabeta “eat.past” are treated as one type.

To incorporate these features, previous research (Suzuki, 1999 ; Watanabe et al. 1988 ; Ishioka, 2001 ; Ishioka and Kameda, 2006 ; Hirao et al. 2020 ) has identified complexity, fluency, and accuracy as crucial factors for evaluating writing quality. These criteria are assessed through various aspects, including lexical richness (lexical density, diversity, and sophistication), syntactic complexity, and cohesion (Kyle et al. 2021 ; Mizumoto and Eguchi, 2023 ; Ure, 1971 ; Halliday, 1985 ; Barkaoui and Hadidi, 2020 ; Zenker and Kyle, 2021 ; Kim et al. 2018 ; Lu, 2017 ; Ortega, 2015 ). Therefore, this study proposes five scoring categories: lexical richness, syntactic complexity, cohesion, content elaboration, and grammatical accuracy. A total of 16 measures were employed to capture these categories. The calculation process and specific details of these measures can be found in Table 2 .

The T-unit, first introduced by Hunt (1966), is a measure used for evaluating speech and composition. It serves as an indicator of syntactic development and represents the shortest units into which a piece of discourse can be divided without leaving any sentence fragments. In the context of Japanese language assessment, Sakoda and Hosoi (2020) utilized the T-unit as the basic unit to assess the accuracy and complexity of Japanese learners’ speaking and storytelling. The calculation of T-units in Japanese adheres to the following principles:

A single main clause constitutes 1 T-unit, regardless of the presence or absence of dependent clauses, e.g. (6).

(6) ケンとマリはピクニックに行きました (main clause): 1 T-unit.

If a sentence contains a main clause along with subclauses, each subclause is considered part of the same T-unit, e.g. (7).

(7) 天気が良かったので (subclause)、ケンとマリはピクニックに行きました (main clause): 1 T-unit.

In the case of coordinate clauses, where multiple clauses are connected, each coordinated clause is counted separately. Thus, a sentence with coordinate clauses may have 2 T-units or more, e.g. (8).

(8) ケンは地図で場所を探して (coordinate clause)、マリはサンドイッチを作りました (coordinate clause): 2 T-units.

Lexical diversity refers to the range of words used within a text (Engber, 1995 ; Kyle et al. 2021 ) and is considered a useful measure of the breadth of vocabulary in L n production (Jarvis, 2013a , 2013b ).

The type/token ratio (TTR) is widely recognized as a straightforward measure for calculating lexical diversity and has been employed in numerous studies. These studies have demonstrated a strong correlation between TTR and other methods of measuring lexical diversity (e.g., Bentz et al. 2016 ; Čech and Miroslav, 2018 ; Çöltekin and Taraka, 2018 ). TTR is computed by considering both the number of unique words (types) and the total number of words (tokens) in a given text. Given that the length of learners’ writing texts can vary, this study employs the moving average type-token ratio (MATTR) to mitigate the influence of text length. MATTR is calculated using a 50-word moving window. Initially, a TTR is determined for words 1–50 in an essay, followed by words 2–51, 3–52, and so on until the end of the essay is reached (Díez-Ortega and Kyle, 2023 ). The final MATTR scores were obtained by averaging the TTR scores for all 50-word windows. The following formula was employed to derive MATTR:

\({\rm{MATTR}}({\rm{W}})=\frac{{\sum }_{{\rm{i}}=1}^{{\rm{N}}-{\rm{W}}+1}{{\rm{F}}}_{{\rm{i}}}}{{\rm{W}}({\rm{N}}-{\rm{W}}+1)}\)

Here, \(N\) refers to the number of tokens in the text, \(W\) is the chosen window size in tokens (\(W < N\); 50 in this study), and \({F}_{i}\) is the number of types in the \(i\)-th window. \({\rm{MATTR}}({\rm{W}})\) is thus the mean of the type-token ratios (TTRs), based on the word form, over all windows. It is expected that individuals with higher language proficiency will produce texts with greater lexical diversity, as indicated by higher MATTR scores.
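The moving-window computation can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' implementation: the function name `mattr` is hypothetical, the input is assumed to be an already tokenized (and, following the type definition above, lemmatized) essay, and falling back to a plain TTR for essays shorter than the window is an assumption made here so the function is always defined.

```python
from typing import Sequence


def mattr(tokens: Sequence[str], window: int = 50) -> float:
    """Moving-average type-token ratio over all windows of `window` tokens."""
    n = len(tokens)
    if n == 0:
        raise ValueError("cannot compute MATTR of an empty text")
    if window <= 0:
        raise ValueError("window must be positive")
    if n <= window:
        # Assumption: texts no longer than one window get a plain TTR.
        return len(set(tokens)) / n
    # F_i / W for each of the N - W + 1 windows, then the mean of those ratios.
    ratios = [
        len(set(tokens[i:i + window])) / window
        for i in range(n - window + 1)
    ]
    return sum(ratios) / len(ratios)


# Toy example with a 2-token window: windows are (a,a), (a,b), (b,b).
example = mattr(["a", "a", "b", "b"], window=2)
```

With the toy input, the three windows have TTRs of 0.5, 1.0 and 0.5, so the result is their mean, 2/3.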

Lexical density was captured by the ratio of the number of lexical words to the total number of words (Lu, 2012). Lexical sophistication refers to the utilization of advanced vocabulary, often evaluated through word frequency indices (Crossley et al. 2013; Haberman, 2008; Kyle and Crossley, 2015; Laufer and Nation, 1995; Lu, 2012; Read, 2000). In the context of writing, lexical sophistication can be interpreted as vocabulary breadth, which entails the appropriate usage of vocabulary items across various lexico-grammatical contexts and registers (Garner et al. 2019; Kim et al. 2018; Kyle et al. 2018). In Japanese specifically, words are considered lexically sophisticated if they are not included in the “Japanese Education Vocabulary List Ver 1.0”. Footnote 4 Consequently, lexical sophistication was calculated by determining the number of sophisticated word types relative to the total number of words per essay. Furthermore, it has been suggested that, in Japanese writing, sentences should ideally have a length of no more than 40 to 50 characters, as this promotes readability. Therefore, the median and maximum sentence length can be considered useful indices for assessment (Ishioka and Kameda, 2006).
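As a rough illustration of the two ratios just described, the sketch below works on an essay that has already been split into (lemma, part-of-speech) pairs. Everything about the input is an assumption made for the example: the Universal Dependencies-style tags, the choice of which tags count as lexical words, and the tiny `basic_vocab` set standing in for the vocabulary list are all hypothetical.

```python
from typing import FrozenSet, Sequence, Set, Tuple

Tagged = Sequence[Tuple[str, str]]  # (lemma, part-of-speech tag)

# Assumption: content-word tags in a UD-style tag set.
LEXICAL_POS: FrozenSet[str] = frozenset({"NOUN", "VERB", "ADJ", "ADV"})


def lexical_density(tagged: Tagged) -> float:
    """Number of lexical (content) words divided by the total number of words."""
    if not tagged:
        raise ValueError("empty essay")
    lexical = sum(1 for _, pos in tagged if pos in LEXICAL_POS)
    return lexical / len(tagged)


def lexical_sophistication(tagged: Tagged, basic_vocab: Set[str]) -> float:
    """Sophisticated word types (lemmas absent from the list) per total words."""
    if not tagged:
        raise ValueError("empty essay")
    sophisticated_types = {lemma for lemma, _ in tagged if lemma not in basic_vocab}
    return len(sophisticated_types) / len(tagged)


# Hypothetical tagging of "ケンは地図で場所を探す" and a stand-in vocabulary list.
essay = [("ケン", "NOUN"), ("は", "ADP"), ("地図", "NOUN"), ("で", "ADP"),
         ("場所", "NOUN"), ("を", "ADP"), ("探す", "VERB")]
basic_vocab = {"ケン", "は", "地図", "で", "を", "探す"}

density = lexical_density(essay)                             # 4 of 7 words are lexical
sophistication = lexical_sophistication(essay, basic_vocab)  # 1 type outside the list
```

In practice the tags would come from a Japanese morphological analyzer and the vocabulary set from the published list; neither is reproduced here.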

Syntactic complexity was assessed based on several measures, including the mean length of clauses, verb phrases per T-unit, clauses per T-unit, dependent clauses per T-unit, complex nominals per clause, adverbial clauses per clause, coordinate phrases per clause, and mean dependency distance (MDD). The MDD reflects the distance between the governor and dependent positions in a sentence. A larger dependency distance indicates a higher cognitive load and greater complexity in syntactic processing (Liu, 2008; Liu et al. 2017). The MDD has been established as an efficient metric for measuring syntactic complexity (Jiang, Ouyang, and Liu, 2019; Li and Yan, 2021). To calculate a dependency distance, the position numbers of the governor and dependent are subtracted, assuming that words in a sentence are assigned in a linear order, such as W1 … Wi … Wn. In any dependency relationship between words Wa and Wb, Wa is the governor and Wb is the dependent. The MDD of the entire sentence was obtained by averaging the absolute values of these differences (governor position minus dependent position):

MDD = \(\frac{1}{n}{\sum }_{i=1}^{n}|{\rm{D}}{{\rm{D}}}_{i}|\)

In this formula, \(n\) represents the number of dependency relations in the sentence, and \({DD}_{i}\) is the dependency distance of the \({i}^{{th}}\) dependency relationship of the sentence. As an example, the sentence ‘Mary-ga-John-ni-keshigomu-o-watashita’ is annotated as [Mary-top-John-dat-eraser-acc-give-past], and its MDD is 2. Table 3 provides the CSV file used as a prompt for GPT 4.
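The stated value of 2 for the example sentence can be reproduced with a short sketch. The segmentation is an assumption made here, not something the text specifies: each noun together with its case particle is treated as one unit (Mary-ga, John-ni, keshigomu-o), and all three units are taken to depend on the final verb watashita. The function name and the head-list encoding are likewise hypothetical.

```python
from typing import Sequence


def mean_dependency_distance(heads: Sequence[int]) -> float:
    """Mean absolute governor-dependent distance for one sentence.

    heads[i] is the 1-based position of the governor of unit i + 1,
    or 0 if that unit is the root (which has no governor and is skipped).
    """
    distances = [
        abs(head - position)
        for position, head in enumerate(heads, start=1)
        if head != 0
    ]
    if not distances:
        raise ValueError("sentence has no dependency relations")
    return sum(distances) / len(distances)


# Units: 1 Mary-ga, 2 John-ni, 3 keshigomu-o, 4 watashita (root).
# Assumed analysis: every argument is governed by the verb at position 4.
heads = [4, 4, 4, 0]
mdd = mean_dependency_distance(heads)  # (3 + 2 + 1) / 3
```

Under this analysis the three distances are 3, 2 and 1, giving a mean of 2. A different tokenization (for instance, treating particles as separate words) would give a different value.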

Cohesion (semantic similarity) and content elaboration aim to capture the ideas presented in test takers’ essays. Cohesion was assessed using three measures: Synonym overlap/paragraph (topic), Synonym overlap/paragraph (keywords), and word2vec cosine similarity. Content elaboration and development were measured as the number of metadiscourse markers (type)/number of words. To capture content closely, this study proposed a novel distance-based representation that encodes the cosine distance between the i-vectors of the learner’s essay and of the essay task (topic and keywords). The learner’s essay is decoded into a word sequence and aligned to the essay task’s topic and keywords for log-likelihood measurement. The cosine distance reveals the content elaboration score of the learner’s essay. The mathematical equation of cosine similarity between target-reference vectors is shown in (11), where \((L_1, \ldots, L_n)\) and \((N_1, \ldots, N_n)\) are the vectors representing the learner’s essay and the task’s topic and keywords, respectively. The content elaboration distance between \(L\) and \(N\) was calculated as follows:

\(\cos \left(\theta \right)=\frac{{\rm{L}}\,\cdot\, {\rm{N}}}{\left|{\rm{L}}\right|{\rm{|N|}}}=\frac{\mathop{\sum }\nolimits_{i=1}^{n}{L}_{i}{N}_{i}}{\sqrt{\mathop{\sum }\nolimits_{i=1}^{n}{L}_{i}^{2}}\sqrt{\mathop{\sum }\nolimits_{i=1}^{n}{N}_{i}^{2}}}\)

A high similarity value indicates a low difference between the two recognition outcomes, which in turn suggests a high level of proficiency in content elaboration.
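For concreteness, the cosine similarity in (11) can be computed as in the following sketch. The vectors here are made-up numbers; in the study they would be the representations of the learner's essay and of the task's topic and keywords, whose construction is not shown. The function name is hypothetical.

```python
import math
from typing import Sequence


def cosine_similarity(l: Sequence[float], n: Sequence[float]) -> float:
    """cos(theta) = (L . N) / (|L| |N|) for two equal-length vectors."""
    if len(l) != len(n):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(l, n))
    norm_l = math.sqrt(sum(a * a for a in l))
    norm_n = math.sqrt(sum(b * b for b in n))
    if norm_l == 0 or norm_n == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_l * norm_n)


# Illustrative vectors only: parallel vectors give 1, orthogonal vectors give 0.
same_direction = cosine_similarity([1.0, 2.0, 3.0], [2.0, 4.0, 6.0])
unrelated = cosine_similarity([1.0, 0.0], [0.0, 1.0])
```

Because the measure depends only on direction, an essay vector that is a scaled copy of the task vector scores the same as an identical one.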

To evaluate the effectiveness of the proposed measures in distinguishing different proficiency levels among nonnative Japanese speakers’ writing, we conducted a multi-faceted Rasch measurement analysis (Linacre, 1994 ). This approach applies measurement models to thoroughly analyze various factors that can influence test outcomes, including test takers’ proficiency, item difficulty, and rater severity, among others. The underlying principles and functionality of multi-faceted Rasch measurement are illustrated in (12).

\(\log \left(\frac{{P}_{{nijk}}}{{P}_{{nij}(k-1)}}\right)={B}_{n}-{D}_{i}-{C}_{j}-{F}_{k}\)

(12) defines the logarithm of the probability ratio \({P}_{{nijk}}/{P}_{{nij}(k-1)}\) as a function of multiple parameters. Here, \(n\) represents the test taker, \(i\) denotes a writing proficiency measure, \(j\) corresponds to the human rater, and \(k\) represents the proficiency score. The parameter \({B}_{n}\) signifies the proficiency level of test taker \(n\) (where \(n\) ranges from 1 to \(N\)). \({D}_{i}\) represents the difficulty parameter of test item \(i\) (where \(i\) ranges from 1 to \(L\)), while \({C}_{j}\) represents the severity of rater \(j\) (where \(j\) ranges from 1 to \(J\)). Additionally, \({F}_{k}\) represents the step difficulty for a test taker to move from score \(k-1\) to \(k\). \({P}_{{nijk}}\) refers to the probability of rater \(j\) assigning score \(k\) to test taker \(n\) for test item \(i\), and \({P}_{{nij}(k-1)}\) represents the probability of test taker \(n\) being assigned score \(k-1\) by rater \(j\) for test item \(i\). Each facet within the test is treated as an independent parameter and estimated within the same reference framework. To evaluate the consistency of scores obtained through both human and computer analysis, we utilized the Infit mean-square statistic. This statistic is a chi-square measure divided by the degrees of freedom and is weighted with information. It demonstrates higher sensitivity to unexpected patterns in responses to items near a person’s proficiency level (Linacre, 2002). Fit statistics are assessed based on predefined thresholds for acceptable fit. For the Infit MNSQ, which has a mean of 1.00, different thresholds have been suggested. Some propose stricter thresholds ranging from 0.7 to 1.3 (Bond et al. 2021), while others suggest more lenient thresholds ranging from 0.5 to 1.5 (Eckes, 2009). In this study, we adopted the criterion of 0.70–1.30 for the Infit MNSQ.
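To make the model in (12) more tangible, the sketch below turns the adjacent-category log-odds into a full set of score probabilities and then computes an information-weighted (Infit) mean square from them. It is an illustration under stated assumptions, not the software used in the study: the function names and parameter values are hypothetical, score categories are assumed to start at 0, and the Infit formula used is the usual one, the sum of squared residuals divided by the sum of model variances.

```python
import math
from typing import List, Sequence


def category_probabilities(ability: float, difficulty: float,
                           severity: float, steps: Sequence[float]) -> List[float]:
    """Return [P_0, ..., P_K] given B_n, D_i, C_j and steps [F_1, ..., F_K].

    Built so that log(P_k / P_(k-1)) = B_n - D_i - C_j - F_k for every k >= 1.
    """
    log_numerators = [0.0]  # score 0 is the reference category
    for step in steps:
        log_numerators.append(log_numerators[-1] + ability - difficulty - severity - step)
    peak = max(log_numerators)  # subtract the maximum for numerical stability
    weights = [math.exp(value - peak) for value in log_numerators]
    total = sum(weights)
    return [weight / total for weight in weights]


def infit_mean_square(observed: Sequence[int],
                      probability_rows: Sequence[Sequence[float]]) -> float:
    """Information-weighted mean square: sum of squared residuals / sum of variances."""
    squared_residuals = 0.0
    information = 0.0
    for score, probs in zip(observed, probability_rows):
        expected = sum(k * p for k, p in enumerate(probs))
        variance = sum((k - expected) ** 2 * p for k, p in enumerate(probs))
        squared_residuals += (score - expected) ** 2
        information += variance
    return squared_residuals / information


# Hypothetical parameter values on the logit scale.
probs = category_probabilities(ability=1.0, difficulty=0.2, severity=-0.1, steps=[-0.5, 0.5])
infit = infit_mean_square([1, 2, 2], [probs, probs, probs])
```

Values of the resulting statistic near 1.0 indicate that observed scores vary about as much as the model predicts, which is how the 0.70–1.30 criterion above is read.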

We next assessed the effectiveness of the 16 proposed measures based on five criteria for accurately distinguishing various levels of writing proficiency among non-native Japanese speakers. To conduct this evaluation, we utilized the development dataset from the I-JAS corpus, as described in Section Dataset. Table 4 provides a measurement report that presents the performance details of the 14 metrics under consideration. The measure separation was found to be 4.02, indicating a clear differentiation among the measures. The reliability index for the measure separation was 0.891, suggesting consistency in the measurement. Similarly, the person separation reliability index was 0.802, indicating the accuracy of the assessment in distinguishing between individuals. All 16 measures demonstrated Infit mean squares within a reasonable range, from 0.76 to 1.28. The Synonym overlap/paragraph (topic) measure exhibited a relatively high Outfit mean square of 1.46, although its Infit mean square fell within the acceptable range. The standard error for the measures ranged from 0.13 to 0.28, indicating the precision of the estimates.

Table 5 further illustrates the weights assigned to different linguistic measures for score prediction, with higher weights indicating stronger correlations between those measures and higher scores. Specifically, the following measures exhibited higher weights than the others: moving average type-token ratio per essay (0.0391); mean dependency distance (0.0388); complex nominals per T-unit, calculated by dividing the number of complex nominals by the number of T-units (0.0379); mean length of clause, calculated by dividing the number of words by the number of clauses (0.0374); coordinate phrases rate, calculated by dividing the number of coordinate phrases by the number of clauses (0.0325); and grammatical error rate, representing the number of errors per essay (0.0322).

Criteria (output indicator)

The criteria used to evaluate the writing ability in this study were based on CEFR, which follows a six-point scale ranging from A1 to C2. To assess the quality of Japanese writing, the scoring criteria from Table 6 were utilized. These criteria were derived from the IELTS writing standards and served as assessment guidelines and prompts for the written output.

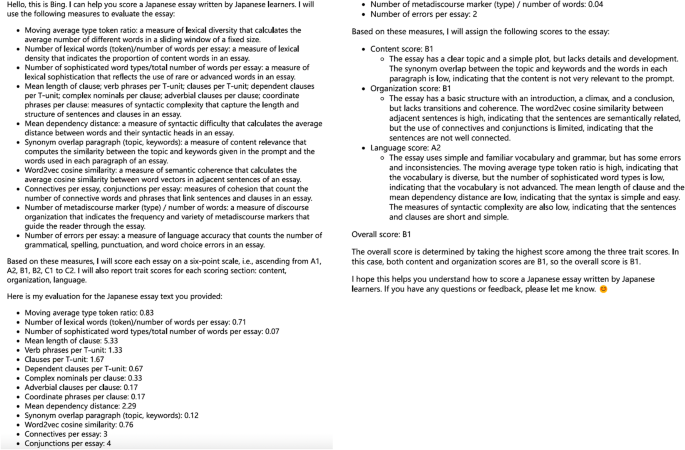

A prompt is a question or detailed instruction that is provided to the model to obtain a proper response. After several pilot experiments, we decided to provide the measures (Section Measures of writing proficiency for nonnative Japanese) as the input prompt and to use the criteria (Section Criteria (output indicator)) as the output indicator. Regarding the prompt language, given that the LLM was tasked with rating Japanese essays, would a prompt in Japanese work better (Footnote 5)? We conducted experiments comparing the performance of GPT-4 using both English and Japanese prompts. Additionally, we utilized the Japanese local model OCLL with Japanese prompts. Multiple trials were conducted using the same sample. Regardless of the prompt language used, GPT-4 consistently assigned a grade of B1 to the writing sample. This suggests that GPT-4 is capable of producing consistent ratings regardless of the prompt language. By contrast, Japanese prompts with OCLL produced inconsistent grading results: out of 10 attempts, only 6 yielded the same grade (B1), while the remaining 4 showed different outcomes, including A1 and B2. These findings indicate that the language of the prompt was not the determining factor for reliable AES. Instead, the size of the training data and the model parameters played crucial roles in achieving consistent and reliable AES results for the language model.

The prompt used is given below. It details all measures and requires the LLM to score the essays using holistic and trait scores.

Please evaluate Japanese essays written by Japanese learners and assign a score to each essay on a six-point scale, ranging from A1, A2, B1, B2, C1 to C2. Additionally, please provide trait scores and display the calculation process for each trait score. The scoring should be based on the following criteria:

Moving average type-token ratio.

Number of lexical words (token) divided by the total number of words per essay.

Number of sophisticated word types divided by the total number of words per essay.

Mean length of clause.

Verb phrases per T-unit.

Clauses per T-unit.

Dependent clauses per T-unit.

Complex nominals per clause.

Adverbial clauses per clause.

Coordinate phrases per clause.

Mean dependency distance.

Synonym overlap paragraph (topic and keywords).

Word2vec cosine similarity.

Connectives per essay.

Conjunctions per essay.

Number of metadiscourse markers (types) divided by the total number of words.

Number of errors per essay.

Japanese essay text

出かける前に二人が地図を見ている間に、サンドイッチを入れたバスケットに犬が入ってしまいました。それに気づかずに二人は楽しそうに出かけて行きました。やがて突然犬がバスケットから飛び出し、二人は驚きました。バスケットの中を見ると、食べ物はすべて犬に食べられていて、二人は困ってしまいました。(ID_JJJ01_SW1)

The score of the example above was B1. Figure 3 provides an example of holistic and trait scores provided by GPT-4 (with a prompt indicating all measures) via Bing Footnote 6 .

Example of GPT-4 AES and feedback (with a prompt indicating all measures).
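For readers who want to reproduce the input format, the following sketch assembles the prompt text quoted above for a single essay. It only builds a string; it does not call any model. The function name `build_prompt` and the numbered-list layout are assumptions made for illustration, since the exact formatting sent to the model is not specified here.

```python
INSTRUCTION = (
    "Please evaluate Japanese essays written by Japanese learners and assign "
    "a score to each essay on a six-point scale, ranging from A1, A2, B1, B2, "
    "C1 to C2. Additionally, please provide trait scores and display the "
    "calculation process for each trait score. The scoring should be based "
    "on the following criteria:"
)

# Measures copied from the prompt text above.
MEASURES = [
    "Moving average type-token ratio.",
    "Number of lexical words (token) divided by the total number of words per essay.",
    "Number of sophisticated word types divided by the total number of words per essay.",
    "Mean length of clause.",
    "Verb phrases per T-unit.",
    "Clauses per T-unit.",
    "Dependent clauses per T-unit.",
    "Complex nominals per clause.",
    "Adverbial clauses per clause.",
    "Coordinate phrases per clause.",
    "Mean dependency distance.",
    "Synonym overlap paragraph (topic and keywords).",
    "Word2vec cosine similarity.",
    "Connectives per essay.",
    "Conjunctions per essay.",
    "Number of metadiscourse markers (types) divided by the total number of words.",
    "Number of errors per essay.",
]

def build_prompt(essay_text):
    """Join the instruction, the measures, and one essay into a prompt string.

    The numbering of measures is an assumed layout, not the study's format.
    """
    lines = [INSTRUCTION, ""]
    lines += [f"{n}. {measure}" for n, measure in enumerate(MEASURES, start=1)]
    lines += ["", "Japanese essay text", essay_text]
    return "\n".join(lines)

# First sentence of the sample essay, used here only as placeholder input.
prompt = build_prompt("出かける前に二人が地図を見ている間に、サンドイッチを入れたバスケットに犬が入ってしまいました。")
```

Keeping the instruction and measures as constants makes it straightforward to send the identical prompt across repeated trials, which is what the consistency comparison between models relies on.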

Statistical analysis

The aim of this study is to investigate the potential use of LLM for nonnative Japanese AES. It seeks to compare the scoring outcomes obtained from feature-based AES tools, which rely on conventional machine learning technology (i.e. Jess, JWriter), with those generated by AI-driven AES tools utilizing deep learning technology (BERT, GPT, OCLL). To assess the reliability of a computer-assisted annotation tool, the study initially established human-human agreement as the benchmark measure. Subsequently, the performance of the LLM-based method was evaluated by comparing it to human-human agreement.

To assess annotation agreement, the study employed standard measures such as precision, recall, and F-score (Brants 2000; Lu 2010), along with the quadratically weighted kappa (QWK) to evaluate the consistency and agreement in the annotation process. Let A and B represent two human annotators. Precision, recall, and F-score for comparing their annotations are defined in Equations (13) to (15).

\({\rm{Recall}}(A,B)=\frac{{\rm{Number}}\,{\rm{of}}\,{\rm{identical}}\,{\rm{nodes}}\,{\rm{in}}\,A\,{\rm{and}}\,B}{{\rm{Number}}\,{\rm{of}}\,{\rm{nodes}}\,{\rm{in}}\,A}\)

\({\rm{Precision}}(A,\,B)=\frac{{\rm{Number}}\,{\rm{of}}\,{\rm{identical}}\,{\rm{nodes}}\,{\rm{in}}\,A\,{\rm{and}}\,B}{{\rm{Number}}\,{\rm{of}}\,{\rm{nodes}}\,{\rm{in}}\,B}\)

The F-score is the harmonic mean of recall and precision:

\({\rm{F}}-{\rm{score}}=\frac{2* ({\rm{Precision}}* {\rm{Recall}})}{{\rm{Precision}}+{\rm{Recall}}}\)

The highest possible value of an F-score is 1.0, indicating perfect precision and recall, and the lowest possible value is 0, which occurs if either precision or recall is zero.
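Equations (13) to (15) can be sketched in a few lines of Python. The representation of a "node" as a (position, label) pair and the function name `agreement_scores` are assumptions made for illustration; the annotations below are invented, not taken from the corpus.

```python
def agreement_scores(nodes_a, nodes_b):
    """Return (precision, recall, f_score) for annotators A and B.

    Each annotation is a collection of hashable nodes; two nodes are
    'identical' when they compare equal.
    """
    a, b = set(nodes_a), set(nodes_b)
    identical = len(a & b)
    recall = identical / len(a) if a else 0.0        # Equation (13)
    precision = identical / len(b) if b else 0.0     # Equation (14)
    if precision + recall == 0:
        return precision, recall, 0.0
    f_score = 2 * precision * recall / (precision + recall)  # Equation (15)
    return precision, recall, f_score

# Invented example: A marks four nodes, B marks five, three coincide.
annotator_a = [(0, "clause"), (1, "clause"), (2, "T-unit"), (3, "error")]
annotator_b = [(0, "clause"), (1, "clause"), (2, "T-unit"), (4, "error"), (5, "error")]
precision, recall, f_score = agreement_scores(annotator_a, annotator_b)
```

In this example precision is 3/5 = 0.60, recall is 3/4 = 0.75, and the F-score is about 0.667, lying between the two as a harmonic mean must.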

In accordance with Taghipour and Ng (2016), the calculation of QWK involves two steps:

Step 1: Construct a weight matrix W as follows:

\({W}_{{ij}}=\frac{{(i-j)}^{2}}{{(N-1)}^{2}}\)

Here, i represents the annotation made by the tool, while j represents the annotation made by a human rater. N denotes the total number of possible annotations. Matrix O is subsequently computed, where \({O}_{i,j}\) represents the count of data annotated i by the tool and j by the human annotator. E, on the other hand, refers to the expected count matrix, which is normalized so that the sum of the elements in E matches the sum of the elements in O.

Step 2: With matrices O and E, the QWK is obtained as follows:

\(K=1-\frac{{\sum }_{i,j}{W}_{i,j}\,{O}_{i,j}}{{\sum }_{i,j}{W}_{i,j}\,{E}_{i,j}}\)
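The two steps above can be sketched as follows. The text does not spell out how E is built, so this sketch assumes the standard construction: the outer product of the tool's and the human's rating histograms, divided by the number of samples so that E and O have the same total. The function name and the ratings are illustrative only.

```python
def quadratic_weighted_kappa(tool, human, n_categories):
    """QWK between two lists of integer ratings in 0..n_categories-1."""
    N = n_categories
    # Observed matrix O: O[i][j] counts samples rated i by the tool, j by the human.
    O = [[0] * N for _ in range(N)]
    for i, j in zip(tool, human):
        O[i][j] += 1
    total = len(tool)
    tool_hist = [sum(O[i]) for i in range(N)]
    human_hist = [sum(O[i][j] for i in range(N)) for j in range(N)]

    weighted_observed = 0.0
    weighted_expected = 0.0
    for i in range(N):
        for j in range(N):
            w = (i - j) ** 2 / (N - 1) ** 2               # Step 1: weight matrix W
            e = tool_hist[i] * human_hist[j] / total      # assumed expected count E
            weighted_observed += w * O[i][j]
            weighted_expected += w * e
    # Undefined (division by zero) if both raters use a single identical category.
    return 1.0 - weighted_observed / weighted_expected    # Step 2

# CEFR levels mapped to ordinal indices so that (i - j) is meaningful.
CEFR = {"A1": 0, "A2": 1, "B1": 2, "B2": 3, "C1": 4, "C2": 5}
tool_levels = ["A1", "A2", "B1", "B2", "C1", "C2"]
kappa_identical = quadratic_weighted_kappa(
    [CEFR[x] for x in tool_levels], [CEFR[x] for x in tool_levels], 6
)
```

Because the weights grow with the squared distance between categories, a B1 versus B2 disagreement is penalized far less than an A1 versus C2 disagreement. Identical ratings, as in `kappa_identical`, give a kappa of 1.0.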

The value of the quadratic weighted kappa increases as the level of agreement improves. Further, to assess the accuracy of LLM scoring, the proportional reduction in mean squared error (PRMSE) was employed. The PRMSE approach takes into account the variability observed in human ratings to estimate the rater error, which is then subtracted from the variance of the human labels. This calculation provides an overall measure of agreement between the automated scores and true scores (Haberman et al. 2015; Loukina et al. 2020; Taghipour and Ng, 2016). The computation of PRMSE involves the following steps:

Step 1: Calculate the mean squared errors (MSEs) for the scoring outcomes of the computer-assisted tool (MSE tool) and the human scoring outcomes (MSE human).

Step 2: Determine the PRMSE by comparing the MSE of the computer-assisted tool (MSE tool) with the MSE from human raters (MSE human), using the following formula:

\({\rm{PRMSE}}=1-\frac{({\rm{MSE}}\,{\rm{tool}})}{({\rm{MSE}}\,{\rm{human}})}=1-\frac{{\sum }_{i=1}^{n}{({y}_{i}-{\hat{y}}_{i})}^{2}}{{\sum }_{i=1}^{n}{({y}_{i}-\hat{y})}^{2}}\)

In the numerator, \({\hat{y}}_{i}\) represents the scoring outcome predicted by a specific LLM-driven AES system for a given sample. The term \({y}_{i}-{\hat{y}}_{i}\) represents the difference between this predicted outcome and the mean value of all LLM-driven AES systems’ scoring outcomes. It quantifies the deviation of the specific LLM-driven AES system’s prediction from the average prediction of all LLM-driven AES systems. In the denominator, \({y}_{i}-\hat{y}\) represents the difference between the scoring outcome provided by a specific human rater for a given sample and the mean value of all human raters’ scoring outcomes. It measures the discrepancy between the specific human rater’s score and the average score given by all human raters. The PRMSE is then calculated by subtracting the ratio of the MSE tool to the MSE human from 1. PRMSE falls within the range of 0 to 1, with larger values indicating reduced errors in the LLM’s scoring compared to those of human raters. In other words, a higher PRMSE implies that the LLM’s scoring demonstrates greater accuracy in predicting the true scores (Loukina et al. 2020). The interpretation of kappa values is based on the work of Landis and Koch (1977). Specifically, the following categories are assigned to different ranges of kappa values: −1 indicates complete inconsistency, 0 indicates random agreement, 0.0–0.20 indicates an extremely low level of agreement (slight), 0.21–0.40 indicates a low level of agreement (fair), 0.41–0.60 indicates a medium level of agreement (moderate), 0.61–0.80 indicates a high level of agreement (substantial), and 0.81–1 indicates an almost perfect level of agreement. All statistical analyses were executed using Python scripts.
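The ratio form of the PRMSE formula and the kappa bands can be illustrated with a short sketch. It follows one reading of the formula as printed, assuming that y_i is the human (reference) score for sample i, that the subscripted ŷ_i is the tool's score for the same sample, and that the unsubscripted ŷ is the mean human score. It deliberately omits the rater-error correction described in the text, so it is a simplified illustration rather than the full PRMSE estimator. Function names and scores are invented.

```python
def prmse_simplified(human_scores, tool_scores):
    """1 - (squared error of the tool) / (squared deviation of human scores).

    Simplified reading of the printed formula; no rater-error correction.
    """
    n = len(human_scores)
    mean_human = sum(human_scores) / n
    sse_tool = sum((y - y_hat) ** 2 for y, y_hat in zip(human_scores, tool_scores))
    sse_human = sum((y - mean_human) ** 2 for y in human_scores)
    return 1.0 - sse_tool / sse_human

def landis_koch_label(kappa):
    """Map a kappa value to the Landis and Koch (1977) agreement band."""
    if kappa < 0:
        return "poor"  # below-chance agreement
    bands = [(0.20, "slight"), (0.40, "fair"), (0.60, "moderate"),
             (0.80, "substantial"), (1.00, "almost perfect")]
    for upper, label in bands:
        if kappa <= upper:
            return label
    raise ValueError("kappa cannot exceed 1")

# Invented scores on a 1-5 ordinal scale: the tool misses one sample by one level.
human = [1, 2, 3, 4, 5]
tool = [1, 2, 3, 4, 4]
score = prmse_simplified(human, tool)
```

Here the tool's squared error is 1 and the human scores' squared deviation from their mean is 10, so `score` is 0.9. A kappa of 0.695 would be labelled "substantial" and one of 0.950 "almost perfect" by `landis_koch_label`.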

Results and discussion

Annotation reliability of the LLM

This section focuses on assessing the reliability of the LLM’s annotation and scoring capabilities. To evaluate the reliability, several tests were conducted simultaneously, aiming to achieve the following objectives:

Assess the LLM’s ability to differentiate between test takers with varying levels of writing proficiency.

Determine the level of agreement between the annotations and scoring performed by the LLM and those done by human raters.

The evaluation of the results encompassed several metrics, including: precision, recall, F-Score, quadratically-weighted kappa, proportional reduction of mean squared error, Pearson correlation, and multi-faceted Rasch measurement.

Inter-annotator agreement (human–human annotator agreement)

We started with an agreement test of the two human annotators. Two trained annotators were recruited to determine the writing task data measures. A total of 714 scripts were used as the test data. Each analysis lasted 300–360 min. Inter-annotator agreement was evaluated using the standard measures of precision, recall, F-score, and QWK. Table 7 presents the inter-annotator agreement for the various indicators. As shown, the inter-annotator agreement was fairly high, with F-scores ranging from 1.0 for sentence and word number to 0.666 for grammatical errors.

The findings from the QWK analysis provided further confirmation of the inter-annotator agreement. The QWK values covered a range from 0.950 ( p = 0.000) for sentence and word number to 0.695 for synonym overlap number (keyword) and grammatical errors ( p = 0.001).

Agreement of annotation outcomes between human and LLM

To evaluate the consistency between human annotators and LLM annotators (BERT, GPT, OCLL) across the indices, the same test was conducted. The results of the inter-annotator agreement (F-score) between LLM and human annotation are provided in Appendix B-D. The F-scores ranged from 0.706 for the number of grammatical errors (OCLL-human) to a perfect 1.000 for sentences, clauses, T-units, and words (GPT-human). These findings were further supported by the QWK analysis, which showed agreement levels ranging from 0.807 ( p = 0.001) for metadiscourse markers (OCLL-human) to 0.962 ( p = 0.000) for words (GPT-human). The findings demonstrated that the LLM annotation achieved a high level of accuracy in identifying measurement units and counts.

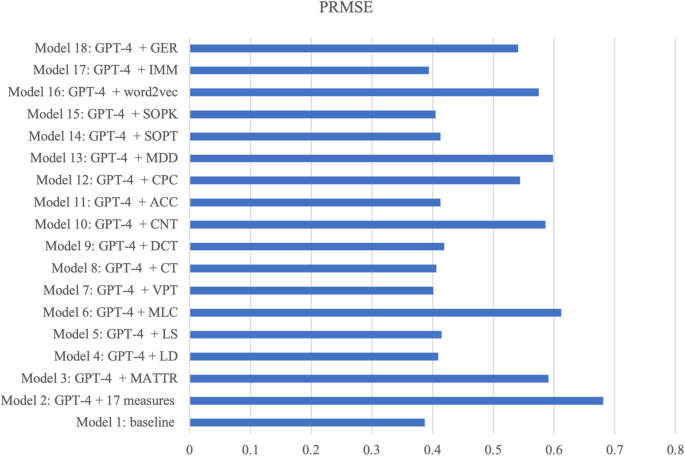

Reliability of LLM-driven AES’s scoring and discriminating proficiency levels