Common Assignments: Literature Review Matrix


As you read and evaluate your literature, there are several ways to organize your research. These sample matrices, courtesy of Dr. Gary Burkholder in the School of Psychology, are one option for organizing your articles. They let you compile details about your sources, such as the foundational theories, methodologies, and conclusions; begin to note similarities among the authors; and retrieve citation information for easy insertion into a document.

You can review the sample matrices to see a completed form, or download a blank matrix template for your own use.

- Literature Review Matrix 1: This PDF file provides a sample literature review matrix.

- Literature Review Matrix 2: This PDF file provides a sample literature review matrix.

- Literature Review Matrix Template (Word)

- Literature Review Matrix Template (Excel)


Walden University is a member of Adtalem Global Education, Inc. (www.adtalem.com). Walden University is certified to operate by SCHEV. © 2024 Walden University LLC. All rights reserved.

Literature Review Basics


About the Research and Synthesis Tables

Research tables and synthesis tables are useful tools for organizing and analyzing your research as you assemble your literature review. They represent two different parts of the review process: assembling relevant information and synthesizing it. Use a research table to compile the main information you need about each item you find in your research; it's a great thing to have on hand as you take notes on what you read. Then, once you've assembled your research, use the synthesis table to start charting the similarities, differences, and major themes among your collected items.

We've included an Excel file with templates for you to use below; the examples pictured on this page are snapshots from that file.

- Research and Synthesis Table Templates: This Excel workbook includes simple templates for creating research tables and synthesis tables. Feel free to download and use them!

Using the Research Table

A research table provides a basic description of the most important features of the studies, articles, and other items you discover in your research. It identifies each item by its author and date of publication, its purpose or thesis, the type of work it is (systematic review, clinical trial, etc.), the level of evidence it represents (which tells you a lot about its impact on the field of study), and its major findings. Your job, when you assemble this information, is to build a snapshot of what the research shows about the topic of your research question and to assess its value, both for the purpose of your own work and for general knowledge in the field.

Think of your work on the research table as the foundational step for your analysis of the literature, in which you assemble the information you'll be analyzing and lay the groundwork for thinking about what it means and how it can be used.
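If you prefer to keep your research table in code rather than in the Excel template, a minimal Python sketch might look like the following. The columns mirror the ones described above; the class and function names, the CSV export, and the sample entries are illustrative assumptions, not part of the template itself.

```python
import csv
import io
from dataclasses import dataclass, field


@dataclass
class ResearchTableRow:
    """One row of a research table: one source and its key features."""
    author_date: str            # author(s) and date of publication
    purpose: str                # purpose or thesis of the item
    source_type: str            # e.g. systematic review, clinical trial
    level_of_evidence: str      # whichever evidence hierarchy you are using
    major_findings: list[str] = field(default_factory=list)


HEADER = ["Author/Date", "Purpose/Thesis", "Type", "Level of Evidence", "Major Findings"]


def to_rows(table: list[ResearchTableRow]) -> list[list[str]]:
    """Return the header plus one list of cell strings per source."""
    body = [
        [r.author_date, r.purpose, r.source_type, r.level_of_evidence,
         "; ".join(r.major_findings)]
        for r in table
    ]
    return [HEADER] + body


def to_csv(table: list[ResearchTableRow]) -> str:
    """Serialize the table as CSV text that a spreadsheet program can open."""
    buffer = io.StringIO()
    csv.writer(buffer).writerows(to_rows(table))
    return buffer.getvalue()


# Hypothetical entries, for illustration only.
table = [
    ResearchTableRow("Author A, 2019", "Tests intervention X", "Clinical trial",
                     "Level II", ["X reduced symptoms", "No serious adverse events"]),
    ResearchTableRow("Author B, 2021", "Reviews trials of X", "Systematic review",
                     "Level I", ["Effect of X is small but consistent"]),
]
print(to_csv(table))
```

Keeping findings as a list (joined only on export) makes it easier to reuse them later when you build a synthesis table.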

Using the Synthesis Table

A synthesis table, or synthesis matrix, is where you organize and analyze your research by listing each source and indicating whether a given finding or result occurred in that study or article: each row lists an individual source, and each finding has its own column, in which X = yes and blank = no. You can also add or alter columns to look for shared study populations, sort by level of evidence or source type, and so on. The key is to use the table as a simple representation of what the research has found (or not found, as the case may be). Think of a synthesis table as a tool for making comparisons, identifying trends, and locating gaps in the literature.

How do I know which findings to use, or how many to include? Your research question tells you which findings are of interest, so work from it to decide what goes in each Finding header and how many findings you need. The number is up to you; again, you can add or delete columns to match what you are actually looking for in your analysis. You should also, of course, be guided by what is actually present in the material your research turns up!
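The X/blank layout described above maps naturally onto a set of findings per source. The Python below is a minimal sketch under that assumption; the function names and the sample sources and findings are hypothetical. Counting X marks per column gives a rough view of trends, and an empty column flags a possible gap, though it may also just mean the finding lies outside the scope of the sources you collected.

```python
def synthesis_matrix(sources: dict[str, set[str]], findings: list[str]) -> list[list[str]]:
    """Build the X/blank grid: one row per source, one column per finding."""
    rows = [["Source"] + findings]
    for source, found in sources.items():
        rows.append([source] + ["X" if f in found else "" for f in findings])
    return rows


def finding_counts(sources: dict[str, set[str]], findings: list[str]) -> dict[str, int]:
    """Count how many sources report each finding (a rough view of trends)."""
    return {f: sum(f in found for found in sources.values()) for f in findings}


def gaps(sources: dict[str, set[str]], findings: list[str]) -> list[str]:
    """List findings that no source reports (candidate gaps in the literature)."""
    counts = finding_counts(sources, findings)
    return [f for f in findings if counts[f] == 0]


# Hypothetical sources and findings, for illustration only.
sources = {
    "Source A": {"Finding 1", "Finding 2"},
    "Source B": {"Finding 1"},
    "Source C": set(),
}
findings = ["Finding 1", "Finding 2", "Finding 3"]
for row in synthesis_matrix(sources, findings):
    print(" | ".join(cell or " " for cell in row))
print(finding_counts(sources, findings))  # {'Finding 1': 2, 'Finding 2': 1, 'Finding 3': 0}
print(gaps(sources, findings))            # ['Finding 3']
```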


- Last Updated: Sep 26, 2023 12:06 PM

- URL: https://usi.libguides.com/literature-review-basics

Research Analysis Table

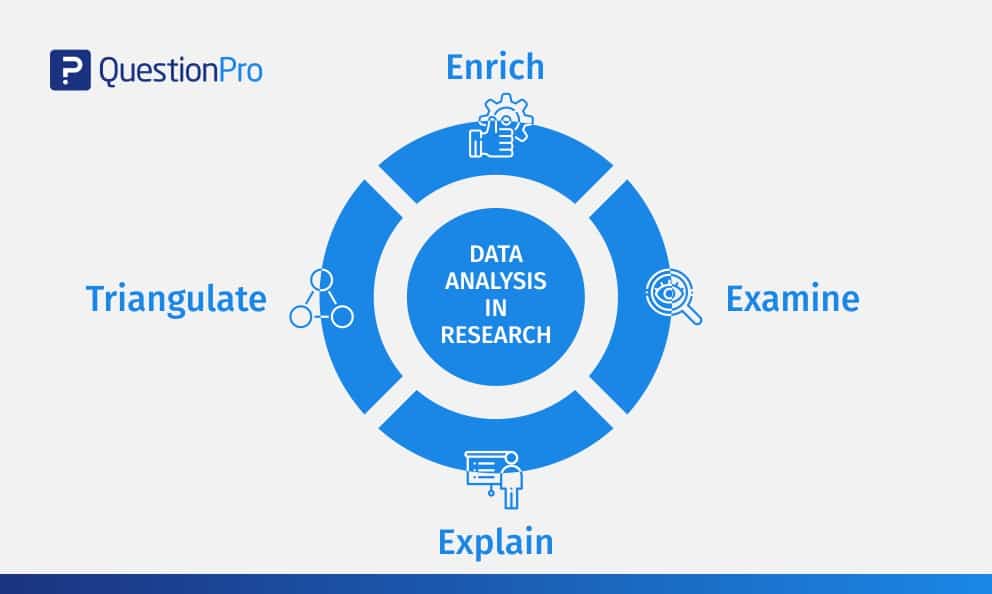

What is research analysis?

After gathering important research data, a family historian or genealogist needs to think about the significant findings. Recording the data in a research log, such as the Strategic Research Log, is the first step. After that, for complex problems, it is useful to copy and paste just the most important elements (including sources and links) into a separate place for careful study and pondering. This is perhaps the most important part of the research process, because here one determines how the elements relate to each other, chooses what appears to be true, and casts out false theories.

The Research Analysis Table is one example of how to arrange data for critical analysis. Its columns, read from left to right, suggest the steps in the thought process, and researchers have found it very useful.

To download the table, click File:Research Analysis Table.doc, then click the link that appears and save the file to your computer.


Tables in Research Paper – Types, Creating Guide and Examples


Definition:

In research papers, tables present data and information in a structured format. They can summarize large amounts of data or highlight important findings, and they are often used in scientific or technical papers to display experimental results, statistical analyses, or other quantitative information.

Importance of Tables in Research Paper

Tables are an important component of a research paper because they present the data, statistics, and other information that support the research findings clearly and concisely. Here are some reasons why tables are important in a research paper:

- Visual Representation: Tables present data visually in a way that is easy to understand and interpret. They help readers quickly grasp the main points of the research findings and draw their own conclusions.

- Organize Data: Tables organize large amounts of data in a systematic, structured manner, which makes it easier for readers to identify patterns and trends.

- Clarity and Accuracy: Tables let researchers present data clearly and accurately, including precise numbers, percentages, and other details that may be difficult to convey in prose.

- Comparison: Tables allow easy comparison between different data sets or groups, making it easier to identify similarities and differences and to draw meaningful conclusions from the data.

- Efficiency: Tables use space efficiently. They convey a large amount of information in a compact format, which makes the research paper more readable.

Types of Tables in Research Paper

The most common types of tables in research papers are:

- Descriptive tables: These tables provide a summary of the data collected in the study. They are usually used to present basic descriptive statistics such as means, medians, standard deviations, and frequencies.

- Comparative tables: These tables are used to compare the results of different groups or variables. They may be used to show the differences between two or more groups or to compare the results of different variables.

- Correlation tables: These tables are used to show the relationships between variables. They may show the correlation coefficients between variables, or they may show the results of regression analyses.

- Longitudinal tables: These tables are used to show changes in variables over time. They may show the results of repeated measures analyses or longitudinal regression analyses.

- Qualitative tables: These tables are used to summarize qualitative data such as interview transcripts or open-ended survey responses. They may present themes or categories that emerged from the data.

How to Create Tables in Research Paper

Here are the steps to create tables in a research paper:

- Plan your table: Determine the purpose of the table and the type of information you want to include. Consider the layout and format that will best convey your information.

- Choose a table format: Decide on the type of table you want to create. Common table formats include basic tables, summary tables, comparison tables, and correlation tables.

- Choose a software program: Use a spreadsheet program like Microsoft Excel or Google Sheets to create your table. These programs allow you to easily enter and manipulate data, format the table, and export it for use in your research paper.

- Input data: Enter your data into the spreadsheet program. Make sure to label each row and column clearly.

- Format the table: Apply formatting options such as font, font size, font color, cell borders, and shading to make your table more visually appealing and easier to read.

- Insert the table into your paper: Copy and paste the table into your research paper. Make sure to place the table in the appropriate location and refer to it in the text of your paper.

- Label the table: Give the table a descriptive title that clearly and accurately summarizes the contents of the table. Also, include a number and a caption that explains the table in more detail.

- Check for accuracy: Review the table for accuracy and make any necessary changes before submitting your research paper.
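The steps above assume a spreadsheet program. If your data already lives in a script, a small helper can handle the numbering, the title, and one basic consistency check for you. This is a minimal Python sketch; the function name and the pipe-table output format are assumptions, and the sample data are hypothetical.

```python
def render_table(number: int, title: str, header: list[str], rows: list[list[str]]) -> str:
    """Render a numbered, titled table as a pipe table.

    Raises ValueError if any row has a different number of cells than the
    header: a simple accuracy check before the table goes into the paper.
    """
    for i, row in enumerate(rows, start=1):
        if len(row) != len(header):
            raise ValueError(f"row {i} has {len(row)} cells; expected {len(header)}")
    lines = [
        f"Table {number}: {title}",
        "| " + " | ".join(header) + " |",
        "|" + "---|" * len(header),  # separator row, one cell per column
    ]
    lines += ["| " + " | ".join(row) + " |" for row in rows]
    return "\n".join(lines)


# Hypothetical data, for illustration only.
print(render_table(1, "Mean Scores by Group", ["Group", "Mean", "SD"],
                   [["Control", "12.0", "3.1"], ["Treatment", "14.5", "2.8"]]))
```

You would still paste the result into your paper, refer to it in the text, and add a caption, as the steps describe.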

Examples of Tables in Research Paper

Here are two examples of tables in a research paper:

Table 1: Demographic Characteristics of Study Participants

| Characteristic | N = 200 | % |
|---|---|---|
| Age (years) | | |
| Mean (SD) | 35.2 (8.6) | |
| Range | 21-57 | |
| Gender | | |
| Male | 92 | 46 |
| Female | 108 | 54 |
| Education | | |
| Less than high school | 20 | 10 |
| High school graduate | 60 | 30 |
| Some college | 70 | 35 |
| Bachelor’s degree or higher | 50 | 25 |

This table shows the demographic characteristics of 200 participants in a research study. The table includes information about age, gender, and education level. The mean age of the participants was 35.2 years with a standard deviation of 8.6 years, and the age range was between 21 and 57 years. The table also shows that 46% of the participants were male and 54% were female. In terms of education, 10% of the participants had less than a high school education, 30% were high school graduates, 35% had some college education, and 25% had a bachelor’s degree or higher.
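The "Check for accuracy" step can be partly automated for a table like this one. As a minimal sketch, the Python below recomputes the percentage column of Table 1 from its counts, confirms each category sums to N, and flags any category whose reported percentage is off by more than a rounding tolerance. The function names and the 0.5-point tolerance are assumptions.

```python
def percentages(counts: dict[str, int], total: int) -> dict[str, float]:
    """Recompute each category's percentage of the total, to one decimal place."""
    return {label: round(100 * n / total, 1) for label, n in counts.items()}


def check_category(counts: dict[str, int], reported: dict[str, float],
                   total: int, tolerance: float = 0.5) -> list[str]:
    """Return labels whose reported % differs from the recomputed % by more than tolerance."""
    if sum(counts.values()) != total:
        raise ValueError(f"counts sum to {sum(counts.values())}, not {total}")
    recomputed = percentages(counts, total)
    return [label for label in counts if abs(recomputed[label] - reported[label]) > tolerance]


# Counts and reported percentages taken from Table 1.
N = 200
gender = {"Male": 92, "Female": 108}
education = {
    "Less than high school": 20,
    "High school graduate": 60,
    "Some college": 70,
    "Bachelor's degree or higher": 50,
}
print(check_category(gender, {"Male": 46, "Female": 54}, N))  # []
print(check_category(education, {"Less than high school": 10, "High school graduate": 30,
                                 "Some college": 35, "Bachelor's degree or higher": 25}, N))  # []
```

Both checks come back empty, so Table 1's percentages are consistent with its counts.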

Table 2: Summary of Key Findings

| Variable | Group 1 | Group 2 | Group 3 |
|---|---|---|---|
| Mean score | 76.3 | 84.7 | 72.1 |
| Standard deviation | 5.2 | 6.9 | 4.8 |
| t-value | -2.67* | 1.89 | -1.24 |
| p-value | < 0.01 | 0.06 | 0.22 |

This table summarizes the key findings of a study comparing three groups on a particular variable. It shows the mean score, standard deviation, t-value, and p-value for each group. The asterisk next to Group 1's t-value marks a statistically significant result (p < 0.01), whereas the results for Group 2 (p = 0.06) and Group 3 (p = 0.22) were not statistically significant at the 0.05 level.

Purpose of Tables in Research Paper

The primary purposes of including tables in a research paper are:

- To present data: Tables are an effective way to present large amounts of data in a clear and organized manner. Researchers can use tables to present numerical data, survey results, or other types of data that are difficult to represent in text.

- To summarize data: Tables can be used to summarize large amounts of data into a concise and easy-to-read format. Researchers can use tables to summarize the key findings of their research, such as descriptive statistics or the results of regression analyses.

- To compare data: Tables can be used to compare data across different variables or groups. Researchers can use tables to compare the characteristics of different study populations or to compare the results of different studies on the same topic.

- To enhance the readability of the paper: Tables can help to break up long sections of text and make the paper more visually appealing. By presenting data in a table, researchers can help readers to quickly identify the most important information and understand the key findings of the study.

Advantages of Tables in Research Paper

Some of the advantages of using tables in research papers include:

- Clarity: Tables can present data in a way that is easy to read and understand. They can help readers to quickly and easily identify patterns, trends, and relationships in the data.

- Efficiency: Tables can save space and reduce the need for lengthy explanations or descriptions of the data in the main body of the paper. This can make the paper more concise and easier to read.

- Organization: Tables can help to organize large amounts of data in a logical and meaningful way. This can help to reduce confusion and make it easier for readers to navigate the data.

- Comparison: Tables can be useful for comparing data across different groups, variables, or time periods. This can help to highlight similarities, differences, and changes over time.

- Visualization: Tables can also be used to visually represent data, making it easier for readers to see patterns and trends. This can be particularly useful when the data is complex or difficult to understand.

About the author

Muhammad Hassan

Researcher, Academic Writer, Web developer


Five tips for developing useful literature summary tables for writing review articles

Volume 24, Issue 2


- Ahtisham Younas (1, 2), ORCID http://orcid.org/0000-0003-0157-5319

- Parveen Ali (3, 4), ORCID http://orcid.org/0000-0002-7839-8130

- 1 Memorial University of Newfoundland, St John's, Newfoundland, Canada

- 2 Swat College of Nursing, Pakistan

- 3 School of Nursing and Midwifery, University of Sheffield, Sheffield, South Yorkshire, UK

- 4 Sheffield University Interpersonal Violence Research Group, Sheffield University, Sheffield, UK

- Correspondence to Ahtisham Younas, Memorial University of Newfoundland, St John's, NL A1C 5C4, Canada; ay6133{at}mun.ca

https://doi.org/10.1136/ebnurs-2021-103417


Introduction

Literature reviews offer a critical synthesis of empirical and theoretical literature to assess the strength of evidence, develop guidelines for practice and policymaking, and identify areas for future research. 1 A review is often essential and usually the first task in any research endeavour, particularly in master’s or doctoral level education. For effective data extraction and rigorous synthesis in reviews, literature summary tables are of utmost importance. A literature summary table provides a synopsis of each included article: it succinctly presents the article’s purpose, methods, findings and other information pertinent to the review. The aim of developing these tables is to give the reader the key information at a glance. Since there are multiple types of reviews (eg, systematic, integrative, scoping, critical and mixed methods) with distinct purposes and techniques, 2 there are various approaches to developing literature summary tables, which makes this a complex task, especially for novice researchers or reviewers. Here, we offer five tips, relevant to all types of reviews, for authors creating useful and relevant literature summary tables. We also provide examples from our published reviews to illustrate how useful literature summary tables can be developed and what sort of information they should provide.

Tip 1: provide detailed information about frameworks and methods


Figure 1: Tabular literature summaries from a scoping review. Source: Rasheed et al. 3

The provision of information about conceptual and theoretical frameworks and methods is useful for several reasons. First, in quantitative reviews (reviews synthesising the results of quantitative studies) and mixed reviews (reviews synthesising the results of both qualitative and quantitative studies to address a mixed review question), it allows readers to assess whether the core findings and methods are congruent with the framework used and the assumptions tested. In qualitative reviews (reviews synthesising the results of qualitative studies), this information helps readers recognise the underlying philosophical and paradigmatic stance of the authors of the included articles. For example, imagine that the authors of an article included in a review used phenomenological inquiry. In that case, the review authors and the readers of the review need to know whether a transcendental or a hermeneutic philosophical stance guided the inquiry, so review authors should include that stance in their literature summary for the article. Second, information about frameworks and methods enables review authors and readers to judge the quality of the research and so discern the strengths and limitations of the article. For example, suppose the authors of an included article intended to develop a new scale and test its psychometric properties, and to achieve this aim they used a convenience sample of 150 participants and performed exploratory (EFA) and confirmatory factor analysis (CFA) on the same sample. Such an approach indicates a flawed methodology, because EFA and CFA should not be conducted on the same sample. The review authors must include this information in their summary table: omitting it could lead to the inclusion of a flawed article in the review, thereby jeopardising the review’s rigour.

Tip 2: include strengths and limitations for each article

Critical appraisal of the individual articles included in a review is crucial for increasing the rigour of the review. Despite using various templates for critical appraisal, authors often do not provide detailed information about each reviewed article’s strengths and limitations. Merely noting a quality score from a standardised critical appraisal template is not adequate, because readers should be able to see the reasons for assigning a weak or moderate rating. Many recent critical appraisal checklists (eg, Mixed Methods Appraisal Tool) discourage review authors from assigning a quality score and recommend noting the main strengths and limitations of included studies. It is also vital to report both the methodological and the conceptual strengths and limitations of the included articles, because not all reviews include empirical research papers; some synthesise the theoretical aspects of articles. Information about conceptual limitations also helps readers judge the quality of the foundations of the research. For example, if you included a mixed-methods study in the review, reporting its methodological and conceptual limitations regarding ‘integration’ is critical for evaluating the study’s strength. Suppose the authors collected both qualitative and quantitative data but did not state the intent and timing of integration. In that case, the study is weak on this point: integration occurred only at the level of data collection and may not have occurred at the analysis, interpretation and reporting levels.

Tip 3: write conceptual contribution of each reviewed article

While reading and evaluating review papers, we have observed that many review authors provide only the core results of each included article and do not explain the conceptual contribution the article offers. By conceptual contribution, we mean a description of how the article’s key results contribute to the development of potential codes, themes or subthemes, or emerging patterns that are reported as the review findings. For example, the authors of one review noted that a research article included in their review demonstrated the usefulness of case studies and reflective logs as strategies for fostering compassion in nursing students. The conceptual contribution of this article could be that experiential learning, as supported by case studies and reflective logs, is one way to teach compassion to nursing students. This conceptual contribution should be recorded in the literature summary table. Delineating each reviewed article’s conceptual contribution is particularly beneficial in qualitative reviews, mixed-methods reviews and critical reviews, which often focus on developing models and describing or explaining various phenomena. Figure 2 offers an example of a literature summary table. 4

Figure 2: Tabular literature summaries from a critical review. Source: Younas and Maddigan. 4

Tip 4: compose potential themes from each article during summary writing

While developing literature summary tables, many authors use the themes or subthemes reported in the included articles as the key results of their own review. This approach prevents review authors from understanding each article’s conceptual contribution, developing a rigorous synthesis and drawing reasonable interpretations from the individual articles, and ultimately it hampers the generation of novel review findings. For example, one article about women’s healthcare-seeking behaviours in developing countries reported the theme ‘social-cultural determinants of health as precursors of delays’. Instead of using this theme as one of the review findings, reviewers should read and interpret beyond the description given in the article, and compare and contrast its themes and findings with those of other articles to identify similarities and differences and to understand and explain the bigger picture for their readers. Therefore, think twice before using predeveloped themes in your literature summary tables. Including your own themes in the summary tables (see figure 1) demonstrates to readers that a robust method of data extraction and synthesis has been followed.
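If you keep your summaries electronically, cross-checking your own themes across articles is easy to sketch. The Python below is a minimal illustration, assuming each article's summary records the themes the reviewer composed rather than the themes the article's authors reported. It inverts those records so you can see which articles support each theme and which themes rest on a single article. The function name, article names and themes are all hypothetical.

```python
from collections import defaultdict


def articles_by_theme(summaries: dict[str, list[str]]) -> dict[str, list[str]]:
    """Invert article -> reviewer-composed themes into theme -> supporting articles."""
    index: dict[str, list[str]] = defaultdict(list)
    for article, themes in summaries.items():
        for theme in themes:
            index[theme].append(article)
    # Sort themes and articles so the output is stable and easy to scan.
    return {theme: sorted(articles) for theme, articles in sorted(index.items())}


# Hypothetical summary-table entries: themes composed by the reviewer.
summaries = {
    "Article 1": ["Financial barriers", "Family decision-making"],
    "Article 2": ["Family decision-making"],
    "Article 3": ["Financial barriers", "Distance to services"],
}
for theme, articles in articles_by_theme(summaries).items():
    print(f"{theme}: {', '.join(articles)}")
```

A theme supported by only one article is a prompt to look harder for comparable or contrasting findings before treating it as a review finding.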

Tip 5: create your personalised template for literature summaries

Templates are often available for data extraction and for developing literature summary tables. They may take the form of a table, a chart or a structured framework that extracts essential information about every article, commonly the authors, purpose, methods, key results and quality scores. While extracting all relevant information is important, such templates should be tailored to the needs of the individual review. For example, for a review about the effectiveness of healthcare interventions, a literature summary table must include information about the intervention: its type, content, timing, duration and setting, its effectiveness and negative consequences, and the experiences of those who received and implemented it. Similarly, literature summary tables for articles included in a meta-synthesis must include the participants’ characteristics, the research context and the conceptual contribution of each reviewed article, so that the reader can make an informed decision about the usefulness (or lack of usefulness) of each individual article and of the review as a whole.
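One way to tailor a template is to start from a small set of common fields and add review-specific groups. The Python below is a minimal sketch of that idea. The base fields follow the commonly used ones named above, with strengths and limitations in place of a bare quality score as Tip 2 suggests. The intervention and meta-synthesis groups paraphrase the examples in the text, and all field names and helpers are assumptions.

```python
# Commonly used fields, with strengths/limitations instead of a quality score (Tip 2).
BASE_FIELDS = ["authors", "purpose", "methods", "key_results", "strengths", "limitations"]

# Review-specific field groups; names are illustrative.
INTERVENTION_FIELDS = [
    "intervention", "intervention_type", "content", "timing", "duration",
    "setting", "effectiveness", "negative_consequences",
    "receiver_experiences", "implementer_experiences",
]
META_SYNTHESIS_FIELDS = ["participant_characteristics", "research_context", "conceptual_contribution"]


def make_template(*field_groups: list[str]) -> list[str]:
    """Combine the base fields with review-specific groups, dropping duplicates."""
    fields = list(BASE_FIELDS)
    for group in field_groups:
        for name in group:
            if name not in fields:
                fields.append(name)
    return fields


def blank_summary(template: list[str]) -> dict[str, str]:
    """Start an empty summary for one article."""
    return {name: "" for name in template}


def missing_fields(summary: dict[str, str], template: list[str]) -> list[str]:
    """List template fields that are still empty for this article."""
    return [name for name in template if not summary.get(name, "").strip()]


template = make_template(INTERVENTION_FIELDS)
summary = blank_summary(template)
summary["authors"] = "Author A et al."  # hypothetical
print(missing_fields(summary, template)[:3])  # ['purpose', 'methods', 'key_results']
```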

In conclusion, narrative or systematic reviews are almost always conducted as a part of any educational project (thesis or dissertation) or academic or clinical research. Literature reviews are the foundation of research on a given topic. Robust and high-quality reviews play an instrumental role in guiding research, practice and policymaking. However, the quality of reviews is also contingent on rigorous data extraction and synthesis, which require developing literature summaries. We have outlined five tips that could enhance the quality of the data extraction and synthesis process by developing useful literature summaries.

- Aromataris E ,

- Rasheed SP ,

Twitter @Ahtisham04, @parveenazamali

Funding The authors have not declared a specific grant for this research from any funding agency in the public, commercial or not-for-profit sectors.

Competing interests None declared.

Patient consent for publication Not required.

Provenance and peer review Not commissioned; externally peer reviewed.


How to structure your Table for Systematic Review and Meta-analysis


Systematic Review Article and Meta-analysis: Main Steps for Successful Writing

A systematic review has been defined as “a scholarly method in which all empirical evidence that meets pre-specified eligibility requirements is gathered to address a particular research question.” It involves systematically identifying, selecting, synthesising, and evaluating primary research studies to produce a high-quality summary of a subject that addresses a pre-specified research question. A meta-analysis goes a step further than a systematic review: it uses mathematical and statistical methods to summarise the results of the studies included in the systematic review (1).

Introduction

Systematic reviews differ from conventional narrative reviews in several respects. Narrative reviews are mostly descriptive, do not require a systematic search of the literature, and concentrate on a subset of studies in a field selected by availability or author preference. As a result, although narrative reviews are informative, they often include an element of selection bias. Systematic reviews, as the name implies, usually follow a thorough and comprehensive plan and search strategy defined a priori to minimise bias by finding, evaluating, and synthesising all relevant studies on a given subject. Systematic reviews often include a meta-analysis, which uses statistical techniques to combine data from several studies into a single quantitative estimate or summary effect size. A well-known and well-respected multinational non-profit organisation promotes, funds, and disseminates systematic reviews and meta-analyses on the effectiveness of healthcare interventions (2).

Need for a systematic review and meta-analysis:

There are several reasons for performing a systematic review and meta-analysis:

- It may assist in resolving discrepancies in results published by individual studies that may include bias or errors.

- It may help identify areas in a field where there is a lack of evidence and areas where further research should be conducted.

- It allows the combination of findings from different studies, highlighting new findings relevant to practice or policy.

- It may be able to reduce the need for additional trials.

- Writing a systematic review and meta-analysis can help establish a researcher in their field of interest, since such reviews are often published in high-impact journals and receive many citations (3).

Phases in planning a systematic review and meta-analysis

The main steps in writing a successful systematic review and meta-analysis are:

- Formulate the Review Question

The first stage involves describing the review topic, formulating hypotheses, and developing a title for the review. It’s usually best to keep titles as short and descriptive as possible by following this formula: intervention for those with a disease (e.g., Dialectical behaviour therapy for adolescent females with borderline personality disorder). Reviews published in other outlets should state in the title that they are a systematic review and meta-analysis.

- Define inclusion and exclusion criteria

The PICO (or PICOC) acronym stands for population, intervention, comparison, outcomes (and context). It helps ensure that all main components are decided before the review begins. Authors must, for example, choose a priori the population’s age range and conditions, the outcomes, and the type(s) of interventions and control groups. It’s also crucial to decide which study designs to include and exclude (e.g., RCTs only, RCTs and quasi-experimental designs, qualitative research), the minimum number of participants in each group, whether to include published and unpublished studies, and any language restrictions.
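Because these criteria are fixed a priori, they can be written down precisely and applied the same way to every candidate study. The Python below is a minimal sketch of that idea; the fields, thresholds, and example studies are hypothetical, and a real review would usually need more criteria than these. Returning every failed criterion, rather than a yes/no answer, also gives you the reasons for exclusion that the selection step asks you to record.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criteria:
    """Eligibility criteria fixed a priori (fields are illustrative)."""
    min_age: int
    max_age: int
    designs: frozenset[str]
    min_per_group: int
    languages: frozenset[str]


@dataclass(frozen=True)
class Study:
    """The facts about a candidate study needed to apply the criteria."""
    age_min: int
    age_max: int
    design: str
    smallest_group: int
    language: str


def exclusion_reasons(study: Study, c: Criteria) -> list[str]:
    """Return every criterion the study fails; an empty list means eligible."""
    reasons = []
    if study.age_min < c.min_age or study.age_max > c.max_age:
        reasons.append("population age outside range")
    if study.design not in c.designs:
        reasons.append(f"ineligible design: {study.design}")
    if study.smallest_group < c.min_per_group:
        reasons.append("too few participants per group")
    if study.language not in c.languages:
        reasons.append(f"ineligible language: {study.language}")
    return reasons


# Hypothetical criteria: adolescents, RCTs or quasi-experimental designs, >= 10 per group, English.
criteria = Criteria(13, 18, frozenset({"RCT", "quasi-experimental"}), 10, frozenset({"English"}))
print(exclusion_reasons(Study(14, 17, "RCT", 25, "English"), criteria))  # []
print(exclusion_reasons(Study(12, 17, "case series", 5, "English"), criteria))
```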

- Develop a search strategy and locate studies

This is where a reference librarian can be particularly helpful in creating and running the electronic searches. To identify all relevant trials in a given area, it is essential to create a detailed list of key terms (such as MeSH terms) for each component of PICOC. The key to an effective search strategy is to strike a balance between sensitivity (finding all relevant studies) and precision (excluding irrelevant ones).
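A common way to combine the key terms is to join synonyms within each PICOC component with OR and join the components with AND. Adding synonyms broadens a concept (more sensitive); adding a concept narrows the search (more precise). The Python below sketches only this generic Boolean structure. Real databases each have their own syntax for field tags, truncation, and subject headings such as MeSH, which is not shown here, and the function name and terms are hypothetical.

```python
def build_query(concepts: dict[str, list[str]]) -> str:
    """Join synonyms with OR inside each concept, and concepts with AND.

    Multi-word terms are wrapped in double quotes so they search as phrases.
    Empty concept lists are skipped.
    """
    groups = []
    for terms in concepts.values():
        if not terms:
            continue
        quoted = [f'"{t}"' if " " in t else t for t in terms]
        groups.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(groups)


# Hypothetical PICOC concepts, for illustration only.
query = build_query({
    "population": ["adolescent", "teenager"],
    "intervention": ["dialectical behaviour therapy", "DBT"],
    "outcome": ["self-harm", "suicidal behaviour"],
})
print(query)
```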

- Selection of studies

After a detailed list of abstracts has been retrieved and reviewed, any studies that appear to meet the inclusion criteria are obtained and reviewed in full. To ensure inter-rater reliability, this procedure is usually carried out by at least two reviewers. Authors are advised to keep a list of all screened studies, including the reasons for inclusion or exclusion. It may be possible to contact study authors to obtain missing data needed for pooling (e.g., means, standard deviations). Translations may also be needed.
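The text does not name a statistic for inter-rater reliability. Cohen's kappa is one common choice for two reviewers' include/exclude decisions, and it is swapped in here as an assumption. It corrects raw agreement for the agreement expected by chance. The Python below is a minimal sketch; the screening decisions are hypothetical.

```python
def cohens_kappa(first: list[str], second: list[str]) -> float:
    """Cohen's kappa for two reviewers' decisions on the same items.

    kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and
    p_e is the agreement expected by chance from each reviewer's category rates.
    """
    if len(first) != len(second) or not first:
        raise ValueError("need two non-empty lists of equal length")
    n = len(first)
    p_o = sum(a == b for a, b in zip(first, second)) / n
    categories = set(first) | set(second)
    p_e = sum((first.count(c) / n) * (second.count(c) / n) for c in categories)
    if p_e == 1:
        raise ValueError("kappa is undefined when both reviewers use a single category")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical screening decisions on ten abstracts.
reviewer_1 = ["in", "in", "out", "out", "in", "out", "out", "out", "in", "out"]
reviewer_2 = ["in", "out", "out", "out", "in", "out", "out", "in", "in", "out"]
print(round(cohens_kappa(reviewer_1, reviewer_2), 2))  # 0.58
```

Here the reviewers agree on 8 of 10 abstracts (0.80), but chance alone would produce 0.52 agreement, so kappa is (0.80 - 0.52) / 0.48, or about 0.58.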

- Extract data

To organise the information extracted from each reviewed study (e.g., authors, publication year, number of participants, age range, study design, results, included/excluded), it can help to build and use a basic data extraction form or chart. Data extraction by at least two reviewers is necessary to ensure inter-rater reliability and prevent data entry errors.
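When two reviewers extract data independently, their forms can be compared field by field so that every mismatch is resolved before analysis. A minimal Python sketch, assuming each reviewer’s extraction is stored as a dictionary keyed by a study identifier; the identifiers and field names are hypothetical.

```python
from typing import Any


def compare_extractions(rev_a: dict, rev_b: dict) -> list:
    """List (study, field, value_a, value_b) for every field where the reviewers differ.

    Each argument maps a study id to {field: value}. A study or field recorded by
    only one reviewer appears with None on the other side.
    """
    discrepancies: list[tuple[str, str, Any, Any]] = []
    for study_id in sorted(rev_a.keys() | rev_b.keys()):
        rec_a, rec_b = rev_a.get(study_id, {}), rev_b.get(study_id, {})
        for field_name in sorted(rec_a.keys() | rec_b.keys()):
            value_a, value_b = rec_a.get(field_name), rec_b.get(field_name)
            if value_a != value_b:
                discrepancies.append((study_id, field_name, value_a, value_b))
    return discrepancies


if __name__ == "__main__":
    reviewer_a = {"study-1": {"year": 2020, "n": 40, "design": "RCT"}}
    reviewer_b = {"study-1": {"year": 2020, "n": 44, "design": "RCT"}}
    for row in compare_extractions(reviewer_a, reviewer_b):
        print(row)  # ('study-1', 'n', 40, 44)
```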

Table 1: Outline for a systematic review and meta-analysis

| Section | Contents |
|---|---|
| Background | |
| Objectives | |
| Review questions | Types of patients, interventions, outcomes and studies |
| Search strategy | Databases, study period, grey literature |
| Review methods | |
| Databases and article sources | |
| Screening | |
| Data extraction | |
| Assessment of data quality | |
| Data analysis | |
| References | |

- Assess study quality

In recent years, there has been a push to improve the quality of the RCTs included in systematic reviews. Quality ratings are strongly affected by double-blinding, which is feasible in drug trials but often not in psychological or other non-pharmacological interventions. More detailed guidelines and criteria, such as the Consolidated Standards of Reporting Trials (CONSORT), are available, as are articles recommending ways to improve the quality of RCTs and meta-analyses of psychological interventions (4).

- Analyse and Interpret results

Review Manager (RevMan), the software endorsed by the Cochrane Collaboration, is one example of a statistical programme that can calculate effect sizes for meta-analysis. Effect sizes are reported with a 95 percent confidence interval (CI), both numerically and graphically (e.g., in forest plots). In a forest plot, each trial is represented by a square at its effect size (e.g., the standardised mean difference, SMD) with a horizontal line whose endpoints mark the two ends of its CI; the pooled effect across trials is usually drawn as a diamond whose centre marks the pooled estimate and whose width spans its CI.
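To make the quantities in a forest plot concrete, the Python sketch below computes a bias-corrected standardised mean difference (Hedges’ g) and its variance for each trial, then pools the trials with inverse-variance weights under a fixed-effect model. It uses textbook formulas and is not RevMan’s exact implementation; a random-effects model, which RevMan also offers, would add a between-study variance term. The trial summaries are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Trial:
    """Summary statistics for one trial (hypothetical example data)."""
    name: str
    mean_t: float  # intervention group
    sd_t: float
    n_t: int
    mean_c: float  # control group
    sd_c: float
    n_c: int


def hedges_g(t: Trial) -> tuple:
    """Return (g, variance of g) for one trial."""
    df = t.n_t + t.n_c - 2
    sd_pooled = math.sqrt(((t.n_t - 1) * t.sd_t**2 + (t.n_c - 1) * t.sd_c**2) / df)
    d = (t.mean_t - t.mean_c) / sd_pooled  # Cohen's d
    var_d = (t.n_t + t.n_c) / (t.n_t * t.n_c) + d**2 / (2 * (t.n_t + t.n_c))
    j = 1 - 3 / (4 * df - 1)               # small-sample correction factor
    return j * d, j**2 * var_d


def ci(estimate: float, variance: float, z: float = 1.96) -> tuple:
    """Confidence interval (95% by default) under a normal approximation."""
    half = z * math.sqrt(variance)
    return estimate - half, estimate + half


def fixed_effect(trials: list) -> tuple:
    """Inverse-variance weighted pooled effect and its variance."""
    results = [hedges_g(t) for t in trials]
    weights = [1 / v for _, v in results]
    pooled = sum(w * g for w, (g, _) in zip(weights, results)) / sum(weights)
    return pooled, 1 / sum(weights)


if __name__ == "__main__":
    trials = [
        Trial("Trial A", 12.0, 4.0, 30, 10.0, 4.5, 28),
        Trial("Trial B", 15.5, 5.0, 45, 14.0, 5.2, 47),
    ]
    # One forest-plot row per trial: effect size (square) and CI (horizontal line)
    for t in trials:
        g, v = hedges_g(t)
        lo, hi = ci(g, v)
        print(f"{t.name}: SMD {g:.2f} [{lo:.2f}, {hi:.2f}]")
    # Pooled estimate (diamond)
    pooled, var = fixed_effect(trials)
    lo, hi = ci(pooled, var)
    print(f"Pooled: SMD {pooled:.2f} [{lo:.2f}, {hi:.2f}]")
```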

- Disseminate findings

Reviews by the Cochrane Collaboration are published in the online Cochrane Database of Systematic Reviews and are often lengthy and comprehensive. It is therefore possible, and encouraged, to publish abbreviated versions of a review in other relevant scholarly journals. Contributing to a review update or joining a well-established review team can also be a useful way to get involved in the systematic review process.

Future scope

The systematic review’s findings should be discussed in terms of the strength of the evidence and the shortcomings of the primary studies included in the review. It’s also necessary to discuss the review’s weaknesses, the applicability (generalizability) of the results, and the implications of the findings for patient care, public health, and future clinical research (5).

The steps of a systematic review/meta-analysis include developing and validating a research question, forming eligibility criteria, searching databases, importing all results into a reference library and exporting them to an Excel sheet, writing and registering the protocol, title and abstract screening, full-text screening, manual searching, extracting data and assessing its quality, checking the data, and conducting the statistical analysis. The PRISMA statement (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) should be used to write up the systematic review and meta-analysis. It is a reporting checklist that specifies what information should be included in each part of a high-quality systematic review (6).
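Records exported from several databases usually contain duplicates that should be removed before screening. The Python sketch below removes them by matching on DOI or, when a DOI is missing, on a normalised title, and renders the remaining records as CSV for an Excel screening sheet; it also prints the counts used at the identification stage of a PRISMA flow diagram. The record fields and the matching rule are assumptions, and reference managers apply their own, more elaborate rules.

```python
import csv
import io
import re


def normalise_title(title: str) -> str:
    """Lower-case a title and collapse punctuation and whitespace to single spaces."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def deduplicate(records: list) -> list:
    """Keep the first record seen for each DOI or normalised title."""
    seen_dois, seen_titles, unique = set(), set(), []
    for rec in records:
        doi = (rec.get("doi") or "").strip().lower()
        title = normalise_title(rec.get("title") or "")
        if (doi and doi in seen_dois) or (title and title in seen_titles):
            continue  # duplicate of an earlier record
        unique.append(rec)
        if doi:
            seen_dois.add(doi)
        if title:
            seen_titles.add(title)
    return unique


def to_csv(records: list, fieldnames: list) -> str:
    """Render records as CSV text (save it as a .csv file to open it in Excel)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


if __name__ == "__main__":
    # Hypothetical export from two databases (placeholder DOIs)
    exported = [
        {"source": "db1", "title": "Mindfulness and Motivation: A Meta-Analysis", "doi": "10.0000/example.1"},
        {"source": "db2", "title": "Mindfulness and motivation - a meta-analysis", "doi": ""},
        {"source": "db2", "title": "Screen Use and Language", "doi": "10.0000/EXAMPLE.2"},
    ]
    unique = deduplicate(exported)
    print(f"Records identified: {len(exported)}")
    print(f"Duplicates removed: {len(exported) - len(unique)}")
    print(f"Records screened: {len(unique)}")
    print(to_csv(unique, ["source", "title", "doi"]))
```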

- Alonso Debreczeni, Felicia, and Phoebe E. Bailey. “A systematic review and meta-analysis of subjective age and the association with cognition, subjective well-being, and depression.” The Journals of Gerontology: Series B 76.3 (2021): 471-482.

- Vasconcellos, Diego, et al. “Self-determination theory applied to physical education: A systematic review and meta-analysis.” Journal of Educational Psychology 112.7 (2020): 1444.

- Geary, William L., et al. “Predator responses to fire: A global systematic review and meta‐analysis.” Journal of Animal Ecology 89.4 (2020): 955-971.

- Donald, James N., et al. “Mindfulness and its association with varied types of motivation: A systematic review and meta-analysis using self-determination theory.” Personality and Social Psychology Bulletin 46.7 (2020): 1121-1138.

- Madigan, Sheri, et al. “Associations between screen use and child language skills: A systematic review and meta-analysis.” JAMA Pediatrics 174.7 (2020): 665-675.

- McArthur, Genevieve M., et al. “Self-concept in poor readers: a systematic review and meta-analysis.” PeerJ 8 (2020): e8772.

pubrica-academy


How to Use Tables & Graphs in a Research Paper

It might not seem very relevant to the story and outcome of your study, but how you visually present your experimental or statistical results can play an important role during the review and publication process of your article. A presentation that is in line with the overall logical flow of your story helps you guide the reader effectively from your introduction to your conclusion.

If your results (and the way you organize and present them) don’t follow the story you outlined in the beginning, then you might confuse the reader and they might end up doubting the validity of your research, which can increase the chance of your manuscript being rejected at an early stage. This article illustrates the options you have when organizing and writing your results and will help you make the best choice for presenting your study data in a research paper.

Why does data visualization matter?

Your data and the results of your analysis are the core of your study. Of course, you need to put your findings and what you think your findings mean into words in the text of your article. But you also need to present the same information visually, in the results section of your manuscript, so that the reader can follow and verify that they agree with your observations and conclusions.

The way you visualize your data can either help the reader to comprehend quickly and identify the patterns you describe and the predictions you make, or it can leave them wondering what you are trying to say or whether your claims are supported by evidence. Different types of data therefore need to be presented in different ways, and whatever way you choose needs to be in line with your story.

Another thing to keep in mind is that many journals have specific rules or limitations (e.g., how many tables and graphs you are allowed to include, what kind of data needs to go on what kind of graph) and specific instructions on how to generate and format data tables and graphs (e.g., maximum number of subpanels, length and detail level of tables). In the following, we go through the main points you need to consider when organizing your data and writing your results section.

Table of Contents:

- Types of Data

- When to Use Data Tables

- When to Use Data Graphs

- Common Types of Graphs in Research Papers

- Journal Guidelines: What to Consider Before Submission

Types of Data

Depending on the aim of your research and the methods and procedures you use, your data can be quantitative or qualitative. Quantitative data, whether objective (e.g., size measurements) or subjective (e.g., rating one’s own happiness on a scale), are what is usually collected in experimental research. Quantitative data are expressed in numbers and analyzed with the most common statistical methods. Qualitative data, on the other hand, can consist of case studies or historical documents, or they can be collected through surveys and interviews. Qualitative data are expressed in words and need to be categorized and interpreted to yield meaningful outcomes.

- Quantitative data example: height differences between two groups of participants

- Qualitative data example: subjective feedback on the food quality in the work cafeteria

Depending on what kind of data you have collected and what story you want to tell with it, you have to find the best way of organizing and visualizing your results.

When to Use Data Tables

When you want to show the reader in detail how your independent and dependent variables interact, then a table (with data arranged in columns and rows) is your best choice. In a table, readers can look up exact values, compare those values between pairs or groups of related measurements (e.g., growth rates or outcomes of a medical procedure over several years), look at ranges and intervals, and select specific factors to search for patterns.

Tables are not restricted to a specific type of data or measurement. Since tables really need to be read, they activate the verbal system. This requires focus and some time (depending on how much data you are presenting), but it gives the reader the freedom to explore the data according to their own interest. Depending on your audience, this might be exactly what your readers want. If your manuscript text explains and discusses in detail all the variables that your table lists, then you definitely need to give the reader the chance to look at the details for themselves and follow your arguments. If your analysis consists only of simple t-tests to assess differences between two groups, you can report these results in the text (in this case: mean, standard deviation, t-statistic, and p-value), and you do not necessarily need to include a table that simply states the same numbers again. If you did extensive analyses but focus on only part of the data (and clearly explain why, so that the reader does not think you forgot to talk about the rest), then a graph that illustrates and emphasizes the specific result or relationship that you consider the main point of your story might be a better choice.
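For the simple two-group case mentioned above, the numbers can go in the text instead of a table. The Python sketch below uses only the standard library to compute group means and standard deviations, Welch’s t-statistic (which does not assume equal variances; the text does not specify which t-test variant to use), its degrees of freedom, and a two-sided p-value from the t distribution, and formats them in an APA-like sentence. The data are hypothetical; if SciPy is available, `scipy.stats.ttest_ind(a, b, equal_var=False)` should give the same t-statistic and p-value.

```python
import math
from statistics import mean, stdev


def _betacf(a: float, b: float, x: float, max_iter: int = 300, eps: float = 1e-15) -> float:
    """Continued fraction for the regularised incomplete beta function (modified Lentz)."""
    tiny = 1e-300
    c, d = 1.0, 1.0 - (a + b) * x / (a + 1.0)
    d = 1.0 / (d if abs(d) > tiny else tiny)
    h = d
    for m in range(1, max_iter + 1):
        m2 = 2 * m
        # Even step, then odd step, of the continued fraction
        for aa in (m * (b - m) * x / ((a - 1.0 + m2) * (a + m2)),
                   -(a + m) * (a + b + m) * x / ((a + m2) * (a + 1.0 + m2))):
            d = 1.0 + aa * d
            d = 1.0 / (d if abs(d) > tiny else tiny)
            c = 1.0 + aa / c
            c = c if abs(c) > tiny else tiny
            h *= d * c
        if abs(d * c - 1.0) < eps:
            break
    return h


def _betai(a: float, b: float, x: float) -> float:
    """Regularised incomplete beta function I_x(a, b)."""
    if x <= 0.0:
        return 0.0
    if x >= 1.0:
        return 1.0
    front = math.exp(math.lgamma(a + b) - math.lgamma(a) - math.lgamma(b)
                     + a * math.log(x) + b * math.log1p(-x))
    if x < (a + 1.0) / (a + b + 2.0):
        return front * _betacf(a, b, x) / a
    return 1.0 - front * _betacf(b, a, 1.0 - x) / b


def t_two_sided_p(t: float, df: float) -> float:
    """Two-sided p-value of Student's t distribution with df degrees of freedom."""
    return _betai(df / 2.0, 0.5, df / (df + t * t))


def welch_t_test(a: list, b: list) -> tuple:
    """Return (t, df, two-sided p) for Welch's unequal-variance t-test."""
    va, vb = stdev(a) ** 2 / len(a), stdev(b) ** 2 / len(b)
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))  # Welch-Satterthwaite
    return t, df, t_two_sided_p(t, df)


def report(a: list, b: list) -> str:
    """APA-like in-text summary of the two groups and the test."""
    t, df, p = welch_t_test(a, b)
    p_text = "p < .001" if p < 0.001 else f"p = {p:.3f}".replace("0.", ".", 1)
    return (f"Group A: M = {mean(a):.2f}, SD = {stdev(a):.2f}; "
            f"Group B: M = {mean(b):.2f}, SD = {stdev(b):.2f}; "
            f"t({df:.1f}) = {t:.2f}, {p_text}")


if __name__ == "__main__":
    treatment = [5.2, 5.9, 4.8, 6.1, 5.5, 5.0]  # hypothetical scores
    control = [4.1, 3.8, 5.0, 4.6, 4.4, 3.9]
    print(report(treatment, control))
```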

When to Use Data Graphs

Graphs are a visual display of information and show the overall shape of your results rather than the details. If used correctly, a visual representation helps your (or your reader’s) brain to quickly understand large amounts of data and spot patterns, trends, and exceptions or outliers. Graphs also make it easier to illustrate relationships between entire data sets. This is why, when you analyze your results, you usually don’t just look at the numbers and the statistical values of your tests, but also at histograms, box plots, and distribution plots, to quickly get an overview of what is going on in your data.
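As an example of the quick overview described above, the numbers behind a box plot can be computed directly. This Python sketch uses the standard library’s `statistics.quantiles` and Tukey’s common 1.5 × IQR rule to flag potential outliers; statistical packages differ in how they compute quartiles, so their values may differ slightly. The data are hypothetical.

```python
from statistics import quantiles


def box_plot_summary(values: list, k: float = 1.5) -> dict:
    """Quartiles, whisker ends, and values outside the Tukey fences."""
    q1, med, q3 = quantiles(values, n=4)  # default 'exclusive' method
    iqr = q3 - q1
    low_fence, high_fence = q1 - k * iqr, q3 + k * iqr
    inside = [v for v in values if low_fence <= v <= high_fence]
    return {
        "whisker_low": min(inside),
        "q1": q1,
        "median": med,
        "q3": q3,
        "whisker_high": max(inside),
        "outliers": sorted(v for v in values if v < low_fence or v > high_fence),
    }


if __name__ == "__main__":
    reaction_times_ms = [310, 295, 330, 305, 320, 315, 300, 900]  # hypothetical
    for key, value in box_plot_summary(reaction_times_ms).items():
        print(f"{key}: {value}")
```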

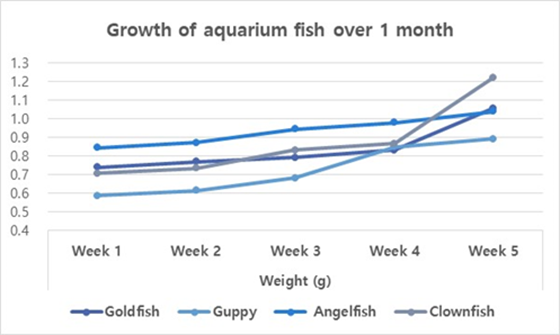

Common Types of Graphs in Research Papers

Line graphs

When you want to illustrate a change over a continuous range or time, a line graph is your best choice. Changes in different groups or samples over the same range or time can be shown by lines of different colors or with different symbols.

Example: Let’s collapse across the different food types and look at the growth of our four fish species over time.

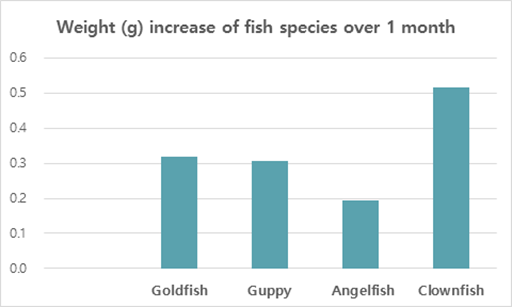

Bar graphs

You should use a bar graph when your data are not continuous but divided into categories that are not necessarily connected, such as different samples, methods, or setups. In our example, the different fish types or the different types of food are such non-continuous categories.

Example: Let’s collapse across the food types again and also across time, and only compare the overall weight increase of our four fish types at the end of the feeding period.
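Collapsing across factors means averaging over them before plotting. The Python sketch below, which assumes a hypothetical record layout of (species, food, start weight, end weight), computes each species’ mean weight increase across food types, the quantity one bar would show, and prints a crude text bar chart; in practice you would pass the same numbers to your plotting software.

```python
from collections import defaultdict
from statistics import mean


def mean_increase_by_species(records: list) -> dict:
    """Average weight gain per species, collapsing across food types.

    Each record is (species, food, start_weight_g, end_weight_g).
    """
    gains = defaultdict(list)
    for species, _food, start, end in records:
        gains[species].append(end - start)
    return {species: mean(values) for species, values in sorted(gains.items())}


def text_bars(values: dict, width: int = 30) -> str:
    """One bar per category, scaled so the largest value spans `width` characters."""
    top = max(values.values(), default=0) or 1.0  # avoid dividing by zero
    return "\n".join(f"{k:<10} {'#' * round(width * v / top)} {v:.1f} g"
                     for k, v in values.items())


if __name__ == "__main__":
    data = [  # hypothetical measurements
        ("species A", "pellets", 10.0, 14.0), ("species A", "flakes", 10.0, 12.0),
        ("species B", "pellets", 8.0, 9.5), ("species B", "flakes", 8.0, 9.0),
    ]
    print(text_bars(mean_increase_by_species(data)))
```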

Scatter plots

Scatter plots can be used to illustrate the relationship between two variables, but note that both have to be continuous. The following example therefore displays “fish length” as an additional variable: none of the variables in our table above (fish type, fish food, time) is continuous, so they cannot be used for this kind of graph.
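For two continuous variables such as fish length and weight, the strength of the linear relationship shown in a scatter plot is often summarised with Pearson’s correlation coefficient. A minimal standard-library sketch with hypothetical measurements (Python 3.10 and later also provide `statistics.correlation`):

```python
import math
from statistics import mean


def pearson_r(x: list, y: list) -> float:
    """Pearson correlation coefficient of paired continuous measurements."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need at least two paired observations")
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("a variable with no variation has no defined correlation")
    return sxy / math.sqrt(sxx * syy)


if __name__ == "__main__":
    length_cm = [4.1, 5.0, 5.8, 6.3, 7.2]  # hypothetical fish lengths
    weight_g = [1.9, 2.8, 3.9, 4.1, 5.6]   # and matching weights
    print(f"r = {pearson_r(length_cm, weight_g):.2f}")
```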

As you see, these example graphs all contain less data than the table above, but they lead the reader to exactly the key point of your results or the finding you want to emphasize. If you let your readers search for these observations in a big table full of details that are not necessarily relevant to the claims you want to make, you can create unnecessary confusion. Most journals allow you to provide bigger datasets as supplementary information, and some even require you to upload all your raw data at submission. When you write up your manuscript, however, matching the data presentation to the storyline is more important than throwing everything you have at the reader.

Don’t forget that every graph needs clear x- and y-axis labels, a title above the figure that summarizes what is shown, and a descriptive legend/caption below it. Because the caption needs to stand alone, so that the reader can understand it without looking at the text, you need to explain what you measured or tested and spell out all labels and abbreviations used in any of your graphs once more in the caption (even if you think the reader “should” remember everything by now, make it easy for them and guide them through your results once more).

Journal Guidelines: What to Consider Before Submission

Even if you have thought about the data you have, the story you want to tell, and how to guide the reader most effectively through your results, you need to check whether the journal you plan to submit to has specific guidelines and limitations for tables and graphs. Some journals allow you to submit tables and graphs in any form initially, as long as tables are editable (for example, in Word format rather than as an image) and graphs have a high enough resolution.

Others, however, have very specific instructions even at the submission stage, and almost all journals will ask you to follow their formatting guidelines once your manuscript is accepted. The closer your figures already are to those guidelines, the faster your article can be published. The PLOS ONE Figure Preparation Checklist is a good example of how extensive these instructions can be. Don’t wait until the last minute to realize that you have to completely reorganize your results because your target journal does not accept tables above a certain length or graphs with more than four panels per figure.

Some things you should always pay attention to (and look at already published articles in the same journal if you are unsure or if the author instructions seem confusing) are the following:

- How many tables and graphs are you allowed to include?

- What file formats are you allowed to submit?

- Are there specific rules on resolution/dimension/file size?

- Should your figure files be uploaded separately or placed into the text?

- If figures are uploaded separately, do the files have to be named in a specific way?

- Are there rules on what fonts to use or to avoid and how to label subpanels?

- Are you allowed to use color? If not, make sure your data sets are distinguishable.
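Several of these checks are mechanical and can be run on your figure files before submission. The Python sketch below assumes you already know each figure’s pixel width, printed width, panel count, and use of color; the `FigureSpec` limits are hypothetical placeholders to be replaced with your target journal’s author instructions. Effective resolution is the pixel width divided by the printed width in inches.

```python
from dataclasses import dataclass
from pathlib import PurePath


@dataclass(frozen=True)
class FigureSpec:
    """Hypothetical journal limits; copy the real values from the author instructions."""
    allowed_formats: frozenset
    min_dpi: int
    max_width_in: float
    max_panels: int
    color_allowed: bool


@dataclass(frozen=True)
class Figure:
    filename: str
    width_px: int    # pixel width of the image file
    width_in: float  # printed width in the article, in inches
    panels: int
    uses_color: bool


def check_figure(fig: Figure, spec: FigureSpec) -> list:
    """Return a list of problems; an empty list means the figure passes these checks."""
    problems = []
    ext = PurePath(fig.filename).suffix.lower().lstrip(".")
    if ext not in spec.allowed_formats:
        problems.append(f"format .{ext} not accepted")
    dpi = fig.width_px / fig.width_in
    if dpi < spec.min_dpi:
        problems.append(f"resolution {dpi:.0f} dpi is below {spec.min_dpi} dpi")
    if fig.width_in > spec.max_width_in:
        problems.append(f"width {fig.width_in} in exceeds {spec.max_width_in} in")
    if fig.panels > spec.max_panels:
        problems.append(f"{fig.panels} panels exceed the limit of {spec.max_panels}")
    if fig.uses_color and not spec.color_allowed:
        problems.append("color used but not allowed: check data sets are distinguishable in grayscale")
    return problems


if __name__ == "__main__":
    spec = FigureSpec(frozenset({"tif", "eps"}), 300, 7.5, 4, True)
    for fig in [Figure("Fig1.tif", 2250, 7.5, 4, True), Figure("Fig2.png", 1000, 5.0, 6, True)]:
        print(fig.filename, check_figure(fig, spec) or "OK")
```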

If you are dealing with digital image data, then it might also be a good idea to familiarize yourself with the difference between “adjusting” for clarity and visibility and image manipulation, which constitutes scientific misconduct.

Effective Use of Tables and Figures in Research Papers

Research papers are often based on copious amounts of data that can be summarized and made easy to read through tables and graphs. When writing a research paper, it is important to present data to the reader in a visually appealing way. The data in figures and tables, however, should not repeat the data found in the text. There are many ways of presenting data in tables and figures, governed by a few simple rules. APA and MLA research papers both use tables and figures, but their rules for them differ. The importance of tables and figures in a research paper cannot be overstated. How do you know if you need a table or figure? A rule of thumb is that if you cannot present your data in one or two sentences, you need a table.

Using Tables

Tables are easily created using programs such as Excel, and they are an effective way of presenting data in scientific papers. Presenting data effectively requires understanding your reader and the elements that make up a table: the title, the column titles, and the body. As with academic writing in general, it is important to structure tables so that readers can easily understand them; disorganized or confusing tables will make the reader lose interest in your work.

- Title: Tables should have a clear, descriptive title, which functions as the “topic sentence” of the table. The titles can be lengthy or short, depending on the discipline.

- Column Titles: The goal of these title headings is to simplify the table. The reader’s attention moves from the title to the column title sequentially. A good set of column titles will allow the reader to quickly grasp what the table is about.

- Table Body: This is the main area of the table, where the numerical or textual data are located. Construct your table so that elements read from top to bottom, not across.


Figures and tables should be centered on the page, referenced in the text, and numbered in the order in which they first appear in the text. Tables should also be set apart from the text; do not use text wrapping. Some journals ask for tables and figures to be placed after the references.

Using Figures

Figures can take many forms, such as bar graphs, frequency histograms, scatterplots, drawings, maps, etc. When using figures in a research paper, always think of your reader. What is the easiest figure for your reader to understand? How can you present the data in the simplest and most effective way? For instance, a photograph may be the best choice if you want your reader to understand spatial relationships.

- Figure Captions: Figures should be numbered and have descriptive titles or captions. Captions should be succinct enough to be understood at first glance. They are placed below the figure and left-justified.

- Image: Choose an image that is simple and easily understandable. Consider the size, resolution, and the image’s overall visual attractiveness.

- Additional Information: Illustrations in manuscripts are numbered separately from tables. Include any information that the reader needs to understand your figure, such as legends.

Common Errors in Research Papers

Effective data presentation in research papers requires understanding the common errors that make it ineffective. One common mistake is using the wrong type of figure for the data; for instance, using a scatterplot instead of a bar graph to show levels of hydration. Another is italicizing the table number: only the table title should be italicized. A third is failing to attribute the table. If a table or figure comes from another source, put “Note. Adapted from…” underneath it. This helps avoid any issues with plagiarism.
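The guidance on line graphs, bar graphs, and scatter plots can be condensed into a small helper that flags mismatches such as the scatterplot-for-categories example. This Python sketch is a simplification for illustration only: the variable-kind labels are hypothetical, and real choices also depend on the story you are telling and on journal rules.

```python
def suggest_chart(x_kind: str, y_kind: str, show_change: bool = False) -> str:
    """Suggest a chart type from the kinds of the x and y variables.

    Kinds are "categorical", "continuous", or "time". `show_change` means the x
    variable is an ordered range and the point is how y changes along it.
    """
    kinds = {"categorical", "continuous", "time"}
    if x_kind not in kinds or y_kind not in kinds:
        raise ValueError(f"kinds must be one of {sorted(kinds)}")
    if y_kind != "continuous":
        return "table"         # e.g. counts or percentages of categories
    if x_kind == "categorical":
        return "bar graph"     # separate, unconnected categories
    if x_kind == "time" or show_change:
        return "line graph"    # change over time or over a continuous range
    return "scatter plot"      # relationship between two continuous variables


if __name__ == "__main__":
    # Hypothetical: a hydration measure compared across separate groups
    print(suggest_chart("categorical", "continuous"))  # bar graph
```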

Using tables and figures in research papers is essential for the paper’s readability. The reader is given a chance to understand data through visual content. When writing a research paper, these elements should be considered as part of good research writing. APA research papers, MLA research papers, and other manuscripts require visual content if the data is too complex or voluminous. The importance of tables and graphs is underscored by the main purpose of writing, and that is to be understood.

Frequently Asked Questions

Consider the following points when creating figures for research papers:

- Determine purpose: Clarify the message or information to be conveyed.
- Choose figure type: Select the appropriate type for data representation.
- Prepare and organize data: Collect and arrange accurate and relevant data.
- Select software: Use suitable software for figure creation and editing.
- Design figure: Focus on clarity, labeling, and visual elements.
- Create the figure: Plot data or generate the figure using the chosen software.
- Label and annotate: Clearly identify and explain all elements in the figure.
- Review and revise: Verify accuracy, coherence, and alignment with the paper.
- Format and export: Adjust the format to meet publication guidelines and export as a suitable file.

To create tables for a research paper, follow these steps:

1) Determine the purpose and information to be conveyed.
2) Plan the layout, including rows, columns, and headings.
3) Use spreadsheet software like Excel to design and format the table.
4) Input accurate data into cells, aligning it logically.
5) Include column and row headers for context.
6) Format the table for readability using consistent styles.
7) Add a descriptive title and caption to summarize and provide context.
8) Number and reference the table in the paper.
9) Review and revise for accuracy and clarity before finalizing.

Including figures in a research paper enhances clarity and visual appeal. Follow these steps:

- Determine the need for figures based on data trends or to explain complex processes.
- Choose the right type of figure, such as graphs, charts, or images, to convey your message effectively.
- Create or obtain the figure, properly citing the source if needed.
- Number and caption each figure, providing concise and informative descriptions.
- Place figures logically in the paper and reference them in the text.
- Format and label figures clearly for better understanding.
- Provide detailed figure captions to aid comprehension.
- Cite the source for non-original figures or images.
- Review and revise figures for accuracy and consistency.

Research papers use various types of tables to present data:

- Descriptive tables: Summarize the main characteristics of the data, often presenting demographic information.
- Frequency tables: Display the distribution of categorical variables, showing counts or percentages in different categories.
- Cross-tabulation tables: Explore relationships between categorical variables by presenting joint frequencies or percentages.
- Summary statistics tables: Present key statistics (mean, standard deviation, etc.) for numerical variables.
- Comparative tables: Compare different groups or conditions, displaying key statistics side by side.
- Correlation or regression tables: Display results of statistical analyses, such as coefficients and p-values.
- Longitudinal or time-series tables: Show data collected over multiple time points, with columns for periods and rows for variables/subjects.
- Data matrix tables: Present raw data or matrices, common in experimental psychology or biology.

Label tables clearly, include titles, and use footnotes or captions for explanations.

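As an illustration of the frequency and cross-tabulation tables described above, this Python sketch counts the joint frequencies of two categorical variables and adds row percentages and row totals. The survey records and variable names are hypothetical.

```python
from collections import Counter


def crosstab(rows: list, row_var: str, col_var: str) -> str:
    """Plain-text cross-tabulation with counts, row percentages, and row totals."""
    counts = Counter((r[row_var], r[col_var]) for r in rows)
    row_levels = sorted({r[row_var] for r in rows})
    col_levels = sorted({r[col_var] for r in rows})
    lines = [" | ".join([row_var] + col_levels + ["Total"])]
    for rl in row_levels:
        total = sum(counts[(rl, cl)] for cl in col_levels)  # > 0: level occurs in data
        cells = [f"{counts[(rl, cl)]} ({100 * counts[(rl, cl)] / total:.0f}%)"
                 for cl in col_levels]
        lines.append(" | ".join([rl] + cells + [str(total)]))
    return "\n".join(lines)


if __name__ == "__main__":
    survey = [  # hypothetical responses
        {"group": "staff", "satisfied": "yes"},
        {"group": "staff", "satisfied": "no"},
        {"group": "students", "satisfied": "yes"},
        {"group": "students", "satisfied": "yes"},
    ]
    print(crosstab(survey, "group", "satisfied"))
```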

Presentation of Quantitative Research Findings

First Online: 30 August 2023

Jan Koetsenruijter & Michel Wensing

Valid and clear presentation of research findings is an important aspect of health services research. This chapter presents recommendations and examples for the presentation of quantitative findings, focusing on tables and graphs. The recommendations in this field are largely experience-based. Tables and graphs should be tailored to the needs of the target audience, which partly reflects conventional formats. In many cases, simple formats of tables and graphs with precise information are recommended. Misleading presentation formats must be avoided, and the uncertainty of findings should be clearly conveyed. Research has shown that conveying uncertainty does not reduce trust in the presented data.
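One common way to convey uncertainty is to report an estimate together with its 95% confidence interval, alongside the absolute risks it is based on. The Python sketch below computes a risk ratio with a log-scale (Katz) interval from hypothetical counts; the chapter does not prescribe this particular measure, and odds ratios or risk differences may suit other questions better.

```python
import math


def risk_ratio(events_a: int, n_a: int, events_b: int, n_b: int, z: float = 1.96) -> tuple:
    """Risk ratio of group A vs group B with a 95% CI computed on the log scale."""
    if min(events_a, events_b) == 0:
        raise ValueError("zero events: a continuity correction or exact method is needed")
    rr = (events_a / n_a) / (events_b / n_b)
    se_log = math.sqrt(1 / events_a - 1 / n_a + 1 / events_b - 1 / n_b)
    return rr, rr * math.exp(-z * se_log), rr * math.exp(z * se_log)


def describe(events_a: int, n_a: int, events_b: int, n_b: int) -> str:
    """Absolute risks first, then the relative effect with its interval."""
    rr, lo, hi = risk_ratio(events_a, n_a, events_b, n_b)
    return (f"{100 * events_a / n_a:.0f}% vs {100 * events_b / n_b:.0f}%; "
            f"risk ratio {rr:.2f} (95% CI {lo:.2f} to {hi:.2f})")


if __name__ == "__main__":
    print(describe(20, 100, 10, 100))  # hypothetical counts
```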


Recommended readings.

Designing tables: (Boers, 2018b) (from an article series in BMJ Heart).


Practical guidelines for designing graphs: http://www.perceptualedge.com (Stephen Few).

Aronson, J. K., Barends, E., Boruch, R., et al. (2019). Key concepts for making informed choices. Nature, 572(7769), 303–306.

Boers, M. (2018a). Designing effective graphs to get your message across. Annals of the Rheumatic Diseases, 77(6), 833–839.

Boers, M. (2018b). Graphics and statistics for cardiology: designing effective tables for presentation and publication. Heart, 104(3), 192–200.

Bramwell, R., West, H., & Salmon, P. (2006). Health professionals’ and service users’ interpretation of screening test results: experimental study. British Medical Journal, 333(7562), 284.

Cukier, K. (2010). Data, Data Everywhere: A Special Report on Managing Information. The Economist, 394, 3–5.

Duke, S. P., Bancken, F., Crowe, B., et al. (2015). Seeing is believing: Good graphic design principles for medical research. Statistics in Medicine, 34(22), 3040–3059.

Few, S. (2005). Effectively Communicating Numbers: Selecting the Best Means and Manner of Display [White Paper]. Retrieved December 8, 2021, from http://www.perceptualedge.com/articles/Whitepapers/Communicating_Numbers.pdf

Fischhoff, B., & Davis, A. L. (2014). Communicating scientific uncertainty. Proceedings of the National Academy of Sciences, 111(supplement_4), 13664–13671.

Gustafson, A., & Rice, R. E. (2020). A review of the effects of uncertainty in public science communication. Public Understanding of Science, 29(6), 614–633.

Han, P. K. J., Klein, W. M. P., Lehman, T., et al. (2011). Communication of uncertainty regarding individualized cancer risk estimates: Effects and influential factors. Medical Decision Making, 31(2), 354–366.

Johnston, B. C., Alonso-Coello, P., Friedrich, J. O., et al. (2016). Do clinicians understand the size of treatment effects? A randomized survey across 8 countries. Canadian Medical Association Journal, 188(1), 25–32.

Kelleher, C., & Wagener, T. (2011). Ten guidelines for effective data visualization in scientific publications. Environmental Modelling and Software, 26(6), 822–827.

Khasnabish, S., Burns, Z., Couch, M., et al. (2020). Best practices for data visualization: Creating and evaluating a report for an evidence-based fall prevention program. Journal of the American Medical Informatics Association, 27(2), 308–314.

Lavis, J., Davies, H. T. O., Oxman, A., et al. (2005). Towards systematic reviews that inform health care management and policy-making. Journal of Health Services Research and Policy, 35–48.

Lopez, K. D., Wilkie, D. J., Yao, Y., et al. (2016). Nurses’ numeracy and graphical literacy: Informing studies of clinical decision support interfaces. Journal of Nursing Care Quality, 31(2), 124–130.

Norton, E. C., Dowd, B. E., & Maciejewski, M. L. (2018). Odds ratios-current best practice and use. JAMA – Journal of the American Medical Association, 320(1), 84–85.

Oudhoff, J. P., & Timmermans, D. R. M. (2015). The effect of different graphical and numerical likelihood formats on perception of likelihood and choice. Medical Decision Making, 35(4), 487–500.

Rougier, N. P., Droettboom, M., & Bourne, P. E. (2014). Ten simple rules for better figures. PLoS Computational Biology, 10(9), 1–7.

Schmidt, C. O., & Kohlmann, T. (2008). Risk quantification in epidemiologic studies. International Journal of Public Health, 53(2), 118–119.

Springer (2022). Writing a Journal Manuscript: Figures and tables. Retrieved April 10, 2022, from https://www.springer.com/gp/authors-editors/authorandreviewertutorials/writing-a-journal-manuscript/figures-and-tables/10285530

Trevena, L. J., Zikmund-Fisher, B. J., Edwards, A., et al. (2013). Presenting quantitative information about decision outcomes: A risk communication primer for patient decision aid developers. BMC Medical Informatics and Decision Making, 13(Suppl. 2), 1–15.

Tufte, E. R. (1983). The visual display of quantitative information. https://www.edwardtufte.com/tufte/books_vdqi

Wensing, M., Szecsenyi, J., Stock, C., et al. (2017). Evaluation of a program to strengthen general practice care for patients with chronic disease in Germany. BMC Health Services Research, 17, 62.

Wronski, P., Wensing, M., Ghosh, S., et al. (2021). Use of a quantitative data report in a hypothetical decision scenario for health policymaking: a computer-assisted laboratory study. BMC Medical Informatics and Decision Making, 21(1), 32.


Author information

Authors and affiliations.

Department of General Practice and Health Services Research, Heidelberg University Hospital, Heidelberg, Germany

Jan Koetsenruijter & Michel Wensing


Corresponding author

Correspondence to Jan Koetsenruijter.

Editor information

Editors and affiliations.

Michel Wensing

Charlotte Ullrich


Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this chapter

Koetsenruijter, J., Wensing, M. (2023). Presentation of Quantitative Research Findings. In: Wensing, M., Ullrich, C. (eds) Foundations of Health Services Research. Springer, Cham. https://doi.org/10.1007/978-3-031-29998-8_5


DOI: https://doi.org/10.1007/978-3-031-29998-8_5

Published: 30 August 2023

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-29997-1

Online ISBN: 978-3-031-29998-8

eBook Packages: Medicine, Medicine (R0)


Sample Tables

These sample tables illustrate how to set up tables in APA Style. When possible, use a canonical, or standard, format for a table rather than inventing your own format. The use of standard formats helps readers know where to look for information.

There are many ways to make a table, and the samples shown on this page represent only some of the possibilities. The samples show the following options:

- The sample factor analysis table shows how to include a copyright attribution in a table note when you have reprinted or adapted a copyrighted table from a scholarly work such as a journal article (the format of the copyright attribution will vary depending on the source of the table).

- The sample regression table shows how to include confidence intervals in separate columns; it is also possible to place confidence intervals in square brackets in a single column (an example of this is provided in the Publication Manual).

- The sample qualitative table and the sample mixed methods table demonstrate how to use left alignment within the table body to improve readability when the table contains lots of text.

Use these links to go directly to the sample tables:

- Sample demographic characteristics table
- Sample results of several t tests table
- Sample correlation table
- Sample analysis of variance (ANOVA) table
- Sample factor analysis table
- Sample regression table
- Sample qualitative table with variable descriptions
- Sample mixed methods table

These sample tables are also available as a downloadable Word file (DOCX, 37KB). For more sample tables, see the Publication Manual (7th ed.) as well as published articles in your field.

Sample tables are covered in the seventh edition APA Style manuals in the Publication Manual Section 7.21 and the Concise Guide Section 7.21.

Related handout

- Student Paper Setup Guide (PDF, 3MB)

Sociodemographic Characteristics of Participants at Baseline

| Baseline characteristic | Guided self-help n | Guided self-help % | Unguided self-help n | Unguided self-help % | Wait-list control n | Wait-list control % | Full sample n | Full sample % |
|---|---|---|---|---|---|---|---|---|
| Gender | | | | | | | | |
| Female | 25 | 50 | 20 | 40 | 23 | 46 | 68 | 45 |
| Male | 25 | 50 | 30 | 60 | 27 | 54 | 82 | 55 |
| Marital status | | | | | | | | |
| Single | 13 | 26 | 11 | 22 | 17 | 34 | 41 | 27 |
| Married/partnered | 35 | 70 | 38 | 76 | 28 | 56 | 101 | 67 |
| Divorced/widowed | 1 | 2 | 1 | 2 | 4 | 8 | 6 | 4 |
| Other | 1 | 2 | 0 | 0 | 1 | 2 | 2 | 1 |
| Childrenᵃ | 26 | 52 | 26 | 52 | 22 | 44 | 74 | 49 |
| Cohabitatingᵃ | 37 | 74 | 36 | 72 | 26 | 52 | 99 | 66 |
| Highest educational level | | | | | | | | |
| Middle school | 0 | 0 | 1 | 2 | 1 | 2 | 2 | 1 |
| High school/some college | 22 | 44 | 17 | 34 | 13 | 26 | 52 | 35 |
| University or postgraduate degree | 28 | 56 | 32 | 64 | 36 | 72 | 96 | 64 |
| Employment | | | | | | | | |
| Unemployed | 3 | 6 | 5 | 10 | 2 | 4 | 10 | 7 |
| Student | 8 | 16 | 7 | 14 | 3 | 6 | 18 | 12 |
| Employed | 30 | 60 | 29 | 58 | 40 | 80 | 99 | 66 |
| Self-employed | 9 | 18 | 7 | 14 | 5 | 10 | 21 | 14 |
| Retired | 0 | 0 | 2 | 4 | 0 | 0 | 2 | 1 |
| Previous psychological treatmentᵃ | 17 | 34 | 18 | 36 | 24 | 48 | 59 | 39 |
| Previous psychotropic medicationᵃ | 6 | 12 | 13 | 26 | 11 | 22 | 30 | 20 |

Note. N = 150 (n = 50 for each condition). Participants were on average 39.5 years old (SD = 10.1), and participant age did not differ by condition.

ᵃ Reflects the number and percentage of participants answering “yes” to this question.
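Each % column in the sociodemographic table is the row count divided by the size of its group (50 per condition, 150 for the full sample), rounded to a whole number. A minimal Python sketch of that calculation follows; the function names `n_and_percent` and `round_half_up` and the half-up rounding rule are illustrative assumptions, not part of the APA guidance.

```python
import math

# Group sizes from the table note: n = 50 per condition, N = 150 overall.
GROUP_SIZES = {"Guided": 50, "Unguided": 50, "Wait-list": 50}


def round_half_up(x: float) -> int:
    """Round a non-negative number to the nearest integer, halves going up."""
    return math.floor(x + 0.5)


def n_and_percent(counts: dict[str, int]) -> list[tuple[int, int]]:
    """Return (n, %) pairs for each condition, then the full-sample pair."""
    pairs = []
    for group, size in GROUP_SIZES.items():
        n = counts[group]
        pairs.append((n, round_half_up(100 * n / size)))
    total_n = sum(counts[group] for group in GROUP_SIZES)
    total_size = sum(GROUP_SIZES.values())
    pairs.append((total_n, round_half_up(100 * total_n / total_size)))
    return pairs


# "Female" row counts from the table.
print(n_and_percent({"Guided": 25, "Unguided": 20, "Wait-list": 23}))
# → [(25, 50), (20, 40), (23, 46), (68, 45)]
```

Python's built-in `round` sends halves to the nearest even integer, which is why the sketch uses an explicit half-up helper; whichever rule you pick, apply it consistently across the whole table.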

Results of Curve-Fitting Analysis Examining the Time Course of Fixations to the Target

| Logistic parameter | 9-year-olds M | 9-year-olds SD | 16-year-olds M | 16-year-olds SD | t(40) | p | Cohen's d |
|---|---|---|---|---|---|---|---|
| Maximum asymptote, proportion | .843 | .135 | .877 | .082 | 0.951 | .347 | 0.302 |
| Crossover, in ms | 759 | 87 | 694 | 42 | 2.877 | .006 | 0.840 |
| Slope, as change in proportion per ms | .001 | .0002 | .002 | .0002 | 2.635 | .012 | 2.078 |

Note. For each subject, the logistic function was fit to target fixations separately. The maximum asymptote is the asymptotic degree of looking at the end of the time course of fixations. The crossover point is the point in time at which the function crosses the midpoint between peak and baseline. The slope represents the rate of change in the function measured at the crossover. Mean parameter values for each of the analyses are shown for the 9-year-olds (n = 24) and 16-year-olds (n = 18), as well as the results of t tests (assuming unequal variance) comparing the parameter estimates between the two ages.
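The note defines the curve by three fitted quantities: the maximum asymptote (peak), the crossover, and the slope at the crossover. One common four-parameter logistic form with exactly those properties is sketched below. The parameterization and the baseline of 0.0 are assumptions for illustration, since the table reports only the mean peak, crossover, and slope; the sketch checks that the curve passes the midpoint at the crossover with the stated slope.

```python
import math


def logistic(t: float, baseline: float, peak: float, crossover: float, slope: float) -> float:
    """Four-parameter logistic curve of fixation proportion over time t (ms).

    Assumed parameterization: the curve rises from `baseline` toward `peak`
    (the maximum asymptote), equals the midpoint of the two at t = crossover,
    and has derivative `slope` at that point.
    """
    k = 4 * slope / (peak - baseline)  # steepness chosen so the slope at the crossover equals `slope`
    z = k * (t - crossover)
    # Evaluate the sigmoid 1 / (1 + exp(-z)) without overflowing for large |z|.
    if z >= 0:
        s = 1 / (1 + math.exp(-z))
    else:
        e = math.exp(z)
        s = e / (1 + e)
    return baseline + (peak - baseline) * s


# Mean 9-year-old parameters from the table; the baseline of 0.0 is hypothetical.
params = {"baseline": 0.0, "peak": 0.843, "crossover": 759, "slope": 0.001}

midpoint = logistic(759, **params)  # halfway between baseline and peak
h = 1e-3
numeric_slope = (logistic(759 + h, **params) - logistic(759 - h, **params)) / (2 * h)
print(midpoint, numeric_slope)
```

Fitting the four parameters to each child's fixation data would normally be done with a nonlinear least-squares routine; that step is outside this sketch.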

Descriptive Statistics and Correlations for Study Variables

| Variable | n | M | SD | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|---|---|---|
| 1. Internal–external status | 3,697 | 0.43 | 0.49 | — | | | | | | |
| 2. Manager job performance | 2,134 | 3.14 | 0.62 | −.08 | — | | | | | |
| 3. Starting salary | 3,697 | 1.01 | 0.27 | .45 | −.01 | — | | | | |
| 4. Subsequent promotion | 3,697 | 0.33 | 0.47 | .08 | .07 | .04 | — | | | |
| 5. Organizational tenure | 3,697 | 6.45 | 6.62 | −.29 | .09 | .01 | .09 | — | | |
| 6. Unit service performance | 3,505 | 85.00 | 6.98 | −.25 | −.39 | .24 | .08 | .01 | — | |
| 7. Unit financial performance | 694 | 42.61 | 5.86 | .00 | −.03 | .12 | −.07 | −.02 | .16 | — |
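The correlation table follows the APA convention of dropping the leading zero for values that cannot exceed 1 (such as correlations and p values), while means and standard deviations keep it. The helpers `apa_number` and `apa_p` below are hypothetical names in a minimal sketch of that rule; they also emit the Unicode minus sign used in the table and report very small p values as <.001.

```python
MINUS = "\u2212"  # Unicode minus sign, as used in the table


def apa_number(x: float, decimals: int = 2, bounded: bool = False) -> str:
    """Format a statistic; bounded=True drops the leading zero (values that cannot exceed 1)."""
    text = f"{abs(x):.{decimals}f}"
    if bounded and text.startswith("0."):
        text = text[1:]  # "0.45" -> ".45"
    # Avoid printing a signed zero such as "-.00" after rounding.
    sign = MINUS if x < 0 and float(text) != 0 else ""
    return sign + text


def apa_p(p: float) -> str:
    """Format a p value with three decimals, reporting very small values as <.001."""
    if p < 0.001:
        return "<.001"
    return apa_number(p, decimals=3, bounded=True)


print(apa_number(-0.08, bounded=True), apa_number(0.45, bounded=True), apa_number(6.62))
print(apa_p(0.0004), apa_p(0.347))
```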

Means, Standard Deviations, and One-Way Analyses of Variance in Psychological and Social Resources and Cognitive Appraisals

| Measure | Urban M | Urban SD | Rural M | Rural SD | F(1, 294) | η² |
|---|---|---|---|---|---|---|
| Self-esteem | 2.91 | 0.49 | 3.35 | 0.35 | 68.87 | .19 |
| Social support | 4.22 | 1.50 | 5.56 | 1.20 | 62.60 | .17 |
| Cognitive appraisals | | | | | | |
| Threat | 2.78 | 0.87 | 1.99 | 0.88 | 56.35 | .20 |
| Challenge | 2.48 | 0.88 | 2.83 | 1.20 | 7.87 | .03 |
| Self-efficacy | 2.65 | 0.79 | 3.53 | 0.92 | 56.35 | .16 |

***p < .001.
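In a one-way ANOVA, η² can be recovered from the F ratio and its degrees of freedom, which gives a quick consistency check on a row, assuming a one-way design with the degrees of freedom shown in the F(1, 294) header. Since F = (SS_effect / df_effect) / (SS_error / df_error) and SS_total = SS_effect + SS_error, η² = F·df_effect / (F·df_effect + df_error). The function name below is hypothetical.

```python
def eta_squared_from_f(f: float, df_effect: int, df_error: int) -> float:
    """Eta squared (SS_effect / SS_total) recovered from a one-way ANOVA F ratio.

    F = (SS_effect / df_effect) / (SS_error / df_error), and in a one-way design
    SS_total = SS_effect + SS_error, which rearranges to the expression below.
    """
    return (f * df_effect) / (f * df_effect + df_error)


# Self-esteem row: F(1, 294) = 68.87, reported eta squared = .19.
print(round(eta_squared_from_f(68.87, 1, 294), 2))  # → 0.19
```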

Results From a Factor Analysis of the Parental Care and Tenderness (PCAT) Questionnaire

| PCAT item | Factor loading | ||

| 1 | 2 | 3 | |

| Factor 1: Tenderness—Positive | |||

| 20. You make a baby laugh over and over again by making silly faces. | .04 | .01 | |

| 22. A child blows you kisses to say goodbye. | −.02 | −.01 | |

| 16. A newborn baby curls its hand around your finger. | −.06 | .00 | |

| 19. You watch as a toddler takes their first step and tumbles gently back down. | .05 | −.07 | |

| 25. You see a father tossing his giggling baby up into the air as a game. | .10 | −.03 | |

| Factor 2: Liking | |||

| 5. I think that kids are annoying (R) | −.01 | .06 | |

| 8. I can’t stand how children whine all the time (R) | −.12 | −.03 | |

| 2. When I hear a child crying, my first thought is “shut up!” (R) | .04 | .01 | |

| 11. I don’t like to be around babies. (R) | .11 | −.01 | |

| 14. If I could, I would hire a nanny to take care of my children. (R) | .08 | −.02 | |

| Factor 3: Protection | |||

| 7. I would hurt anyone who was a threat to a child. | −.13 | −.02 | |

| 12. I would show no mercy to someone who was a danger to a child. | .00 | −.05 | |

| 15. I would use any means necessary to protect a child, even if I had to hurt others. | .06 | .08 | |

| 4. I would feel compelled to punish anyone who tried to harm a child. | .07 | .03 | |

| 9. I would sooner go to bed hungry than let a child go without food. | .46 | −.03 | |

Note. N = 307. The extraction method was principal axis factoring with an oblique (Promax with Kaiser Normalization) rotation. Factor loadings above .30 are in bold. Reverse-scored items are denoted with an (R). Adapted from “Individual Differences in Activation of the Parental Care Motivational System: Assessment, Prediction, and Implications,” by E. E. Buckels, A. T. Beall, M. K. Hofer, E. Y. Lin, Z. Zhou, and M. Schaller, 2015, Journal of Personality and Social Psychology, 108(3), p. 501 (https://doi.org/10.1037/pspp0000023). Copyright 2015 by the American Psychological Association.
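The note states that factor loadings above .30 are printed in bold. A minimal sketch of that rule follows, assuming the threshold applies to the absolute value of a loading and that bold is written with Markdown `**` markers; the function names and the loading values in the example are hypothetical, not taken from the PCAT table.

```python
MINUS = "\u2212"  # Unicode minus sign


def format_loading(x: float) -> str:
    """Two-decimal loading, dropping the leading zero when present."""
    text = f"{abs(x):.2f}"
    if text.startswith("0."):
        text = text[1:]
    sign = MINUS if x < 0 and float(text) != 0 else ""
    return sign + text


def mark_loadings(rows: list[list[float]], threshold: float = 0.30) -> list[list[str]]:
    """Format every loading, wrapping those with |loading| > threshold in ** (bold)."""
    return [
        [f"**{format_loading(x)}**" if abs(x) > threshold else format_loading(x) for x in row]
        for row in rows
    ]


# Hypothetical loadings for two items on three factors (not values from the PCAT table).
example = [[0.82, 0.04, 0.01], [-0.12, 0.71, -0.03]]
print(mark_loadings(example))
```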

Moderator Analysis: Types of Measurement and Study Year

| Effect | Estimate | SE | 95% CI LL | 95% CI UL | p |
|---|---|---|---|---|---|
| Fixed effects | | | | | |
| Intercept | .119 | .040 | .041 | .198 | .003 |
| Creativity measurement | .097 | .028 | .042 | .153 | .001 |
| Academic achievement measurement | −.039 | .018 | −.074 | −.004 | .03 |
| Study year | .0002 | .001 | −.001 | .002 | .76 |
| Goal | −.003 | .029 | −.060 | .054 | .91 |
| Published | .054 | .030 | −.005 | .114 | .07 |
| Random effects | | | | | |
| Within-study variance | .009 | .001 | .008 | .011 | <.001 |
| Between-study variance | .018 | .003 | .012 | .023 | <.001 |

Note. Number of studies = 120, number of effects = 782, total N = 52,578. CI = confidence interval; LL = lower limit; UL = upper limit.
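Assuming the fixed-effect intervals are normal-theory (Wald) intervals, which the note does not state, each CI and p value follows from the estimate and its SE: the bounds are estimate ± 1.96 × SE, and p comes from z = estimate / SE. The sketch below also produces the single-column bracketed format mentioned for the regression table. The function names are hypothetical, and because the published estimates and SEs are rounded, recomputed bounds can differ from the table in the last digit (for example, .197 versus .198 for the intercept upper limit).

```python
import math

Z_95 = 1.959964  # two-sided 95% critical value of the standard normal


def wald_ci_and_p(estimate: float, se: float) -> tuple[float, float, float]:
    """Return (lower, upper, two-sided p) for a normal-theory (Wald) test."""
    lower = estimate - Z_95 * se
    upper = estimate + Z_95 * se
    z = estimate / se
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return lower, upper, p


def bracketed(lower: float, upper: float, decimals: int = 3) -> str:
    """Single-column CI format such as [0.041, 0.197]."""
    return f"[{lower:.{decimals}f}, {upper:.{decimals}f}]"


# Intercept row: estimate = .119, SE = .040.
lower, upper, p = wald_ci_and_p(0.119, 0.040)
print(bracketed(lower, upper), round(p, 3))
```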

Master Narrative Voices: Struggle and Success and Emancipation

| Discourse and dimension | Example quote |

| Struggle and success | |

| Self-actualization as member of a larger gay community is the end goal of healthy sexual identity development, or “coming out” | “My path of gayness ... going from denial to saying, well this is it, and then the process of coming out, and the process of just sort of, looking around and seeing, well where do I stand in the world, and sort of having, uh, political feelings.” (Carl, age 50) |

| Maintaining healthy sexual identity entails vigilance against internalization of societal discrimination | “When I'm like thinking of criticisms of more mainstream gay culture, I try to ... make sure it's coming from an appropriate place and not like a place of self-loathing.” (Patrick, age 20) |

| Emancipation | |

| Open exploration of an individually fluid sexual self is the goal of healthy sexual identity development | “[For heterosexuals] the man penetrates the female, whereas with gay people, I feel like there is this potential for really playing around with that model a lot, you know, and just experimenting and exploring.” (Orion, age 31) |

| Questioning discrete, monolithic categories of sexual identity | “LGBTQI, you know, and added on so many letters. Um, and it does start to raise the question about what the terms mean and whether ... any term can adequately be descriptive.” (Bill, age 50) |

Integrated Results Matrix for the Effect of Topic Familiarity on Reliance on Author Expertise

| Quantitative results | Qualitative results | Example quote |

| When the topic was more familiar (climate change) and cards were more relevant, participants placed less value on author expertise. | When an assertion was considered to be more familiar and considered to be general knowledge, participants perceived less need to rely on author expertise. | Participant 144: “I feel that I know more about climate and there are several things on the climate cards that are obvious, and that if I sort of know it already, then the source is not so critical ... whereas with nuclear energy, I don't know so much so then I'm maybe more interested in who says what.” |

| When the topic was less familiar (nuclear power) and cards were more relevant, participants placed more value on authors with higher expertise. | When an assertion was considered to be less familiar and not general knowledge, participants perceived more need to rely on author expertise. | Participant 3: “[Nuclear power], which I know much, much less about, I would back up my arguments more with what I trust from the professors.” |

Note. We integrated quantitative data (whether students selected a card about nuclear power or about climate change) and qualitative data (interviews with students) to provide a more comprehensive description of students’ card selections between the two topics.
