An overview of methodological approaches in systematic reviews

Prabhakar Veginadu, Hanny Calache, Akshaya Pandian, Mohd Masood

Correspondence: Dr. Prabhakar Veginadu, Department of Rural Clinical Sciences, La Trobe University, PO Box 199, Bendigo, Victoria 3552, Australia. Email: [email protected]

Received 2021 Aug 8; Accepted 2022 Mar 18; Issue date 2022 Mar.

This is an open access article under the terms of the http://creativecommons.org/licenses/by/4.0/ License, which permits use, distribution and reproduction in any medium, provided the original work is properly cited and is not used for commercial purposes.

The aim of this overview is to identify and collate evidence from existing published systematic review (SR) articles evaluating various methodological approaches used at each stage of an SR.

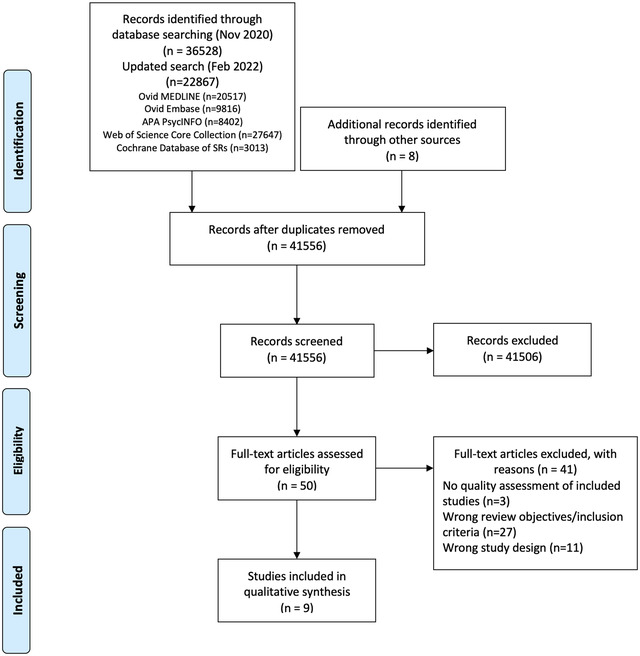

The search was conducted in five electronic databases from inception to November 2020 and updated in February 2022: MEDLINE, Embase, Web of Science Core Collection, Cochrane Database of Systematic Reviews, and APA PsycINFO. Title and abstract screening were performed in two stages by one reviewer, supported by a second reviewer. Full‐text screening, data extraction, and quality appraisal were performed by two reviewers independently. The quality of the included SRs was assessed using the AMSTAR 2 checklist.

The search retrieved 41,556 unique citations, of which 9 SRs were deemed eligible for inclusion in the final synthesis. Included SRs evaluated 24 unique methodological approaches used for defining the review scope and eligibility, literature search, screening, data extraction, and quality appraisal in the SR process. Limited evidence supports the following: (a) searching multiple resources (electronic databases, handsearching, and reference lists) to identify relevant literature; (b) excluding non‐English, gray, and unpublished literature; and (c) use of text‐mining approaches during title and abstract screening.

The overview identified limited SR‐level evidence on various methodological approaches currently employed during five of the seven fundamental steps in the SR process, as well as some methodological modifications currently used in expedited SRs. Overall, findings of this overview highlight the dearth of published SRs focused on SR methodologies and this warrants future work in this area.

Keywords: knowledge synthesis, methodology, overview, systematic reviews

1. INTRODUCTION

Evidence synthesis is a prerequisite for knowledge translation. 1 A well‐conducted systematic review (SR), often in conjunction with meta‐analyses (MA) when appropriate, is considered the “gold standard” of methods for synthesizing evidence related to a topic of interest. 2 The central strength of an SR is the transparency of the methods used to systematically search, appraise, and synthesize the available evidence. 3 Several guidelines, developed by various organizations, are available for the conduct of an SR; 4 , 5 , 6 , 7 among these, Cochrane is considered a pioneer in developing rigorous and highly structured methodology for the conduct of SRs. 8 The guidelines developed by these organizations outline seven fundamental steps required in the SR process: defining the scope of the review and eligibility criteria, literature searching and retrieval, selecting eligible studies, extracting relevant data, assessing risk of bias (RoB) in included studies, synthesizing results, and assessing certainty of evidence (CoE) and presenting findings. 4 , 5 , 6 , 7

The methodological rigor involved in an SR can require a significant amount of time and resources, which may not always be available. 9 As a result, there has been a proliferation of modifications made to the traditional SR process, such as refining, shortening, bypassing, or omitting one or more steps, 10 , 11 for example, limits on the number and type of databases searched, limits on publication date, language, and types of studies included, and limiting to one reviewer for screening and selection of studies, as opposed to two or more reviewers. 10 , 11 These methodological modifications are made to accommodate the needs and resource constraints of the reviewers and stakeholders (e.g., organizations, policymakers, health care professionals, and other knowledge users). While such modifications are considered time and resource efficient, they may introduce bias in the review process, reducing their usefulness. 5

Substantial research has been conducted examining various approaches used in the standardized SR methodology and their impact on the validity of SR results. There are a number of published reviews examining the approaches or modifications corresponding to single 12 , 13 or multiple steps 14 involved in an SR. However, there is yet to be a comprehensive summary of the SR‐level evidence for all the seven fundamental steps in an SR. Such a holistic evidence synthesis will provide an empirical basis to confirm the validity of current accepted practices in the conduct of SRs. Furthermore, sometimes there is a balance that needs to be achieved between the resource availability and the need to synthesize the evidence in the best way possible, given the constraints. This evidence base will also inform the choice of modifications to be made to the SR methods, as well as the potential impact of these modifications on the SR results. An overview is considered the choice of approach for summarizing existing evidence on a broad topic, directing the reader to evidence, or highlighting the gaps in evidence, where the evidence is derived exclusively from SRs. 15 Therefore, for this review, an overview approach was used to (a) identify and collate evidence from existing published SR articles evaluating various methodological approaches employed in each of the seven fundamental steps of an SR and (b) highlight both the gaps in the current research and the potential areas for future research on the methods employed in SRs.

2. METHODS

An a priori protocol was developed for this overview but was not registered with the International Prospective Register of Systematic Reviews (PROSPERO), as the review was primarily methodological in nature and did not meet PROSPERO eligibility criteria for registration. The protocol is available from the corresponding author upon reasonable request. This overview was conducted based on the guidelines for the conduct of overviews as outlined in The Cochrane Handbook. 15 Reporting followed the Preferred Reporting Items for Systematic reviews and Meta‐analyses (PRISMA) statement. 3

2.1. Eligibility criteria

Only published SRs, with or without associated MA, were included in this overview. We adopted the defining characteristics of SRs from The Cochrane Handbook. 5 According to The Cochrane Handbook, a review was considered systematic if it satisfied the following criteria: (a) clearly states the objectives and eligibility criteria for study inclusion; (b) provides reproducible methodology; (c) includes a systematic search to identify all eligible studies; (d) reports assessment of validity of findings of included studies (e.g., RoB assessment of the included studies); and (e) systematically presents all the characteristics or findings of the included studies. 5 Reviews that did not meet all of the above criteria were not considered an SR for this study and were excluded. MA‐only articles were included if it was mentioned that the MA was based on an SR.

SRs and/or MA of primary studies evaluating methodological approaches used in defining review scope and study eligibility, literature search, study selection, data extraction, RoB assessment, data synthesis, and CoE assessment and reporting were included. The methodological approaches examined in these SRs and/or MA can also be related to the substeps or elements of these steps; for example, applying limits on date or type of publication are elements of the literature search. Included SRs examined or compared various aspects of a method or methods, and the associated factors, including but not limited to: precision or effectiveness; accuracy or reliability; impact on the SR and/or MA results; reproducibility of SR steps or bias introduced; and time and/or resource efficiency. SRs assessing the methodological quality of SRs (e.g., adherence to reporting guidelines), evaluating techniques for building search strategies or the use of specific database filters (e.g., use of Boolean operators or search filters for randomized controlled trials), examining various tools used for RoB or CoE assessment (e.g., ROBINS vs. Cochrane RoB tool), or evaluating statistical techniques used in meta‐analyses were excluded. 14

2.2. Search

The search for published SRs was performed on the following scientific databases, initially from inception to the third week of November 2020 and updated in the last week of February 2022: MEDLINE (via Ovid), Embase (via Ovid), Web of Science Core Collection, Cochrane Database of Systematic Reviews, and American Psychological Association (APA) PsycINFO. The search was restricted to English language publications. Following the objectives of this study, study design filters within databases were used to restrict the search to SRs and MA, where available. The reference lists of included SRs were also searched for potentially relevant publications.

The search terms included keywords, truncations, and subject headings for the key concepts in the review question: SRs and/or MA, methods, and evaluation. Some of the terms were adopted from the search strategy used in a previous review by Robson et al., which reviewed primary studies on methodological approaches used in study selection, data extraction, and quality appraisal steps of SR process. 14 Individual search strategies were developed for respective databases by combining the search terms using appropriate proximity and Boolean operators, along with the related subject headings in order to identify SRs and/or MA. 16 , 17 A senior librarian was consulted in the design of the search terms and strategy. Appendix A presents the detailed search strategies for all five databases.
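The way search terms are combined with Boolean operators can be illustrated with a minimal sketch. It assumes the common pattern of joining synonyms with OR within a concept and joining concepts with AND. The terms and the `build_query` helper are hypothetical and are not the strategies reported in Appendix A; proximity operators and truncation inside quoted phrases are database specific and are not modeled here.

```python
def build_query(concepts):
    """Join synonyms with OR inside each concept, then join concepts with AND."""
    blocks = []
    for terms in concepts:
        # Quote multi-word phrases so they are searched as a unit.
        quoted = [f'"{t}"' if " " in t else t for t in terms]
        blocks.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(blocks)


# Hypothetical terms for the three key concepts (SRs/MA, methods, evaluation).
concepts = [
    ["systematic review*", "meta-analys*"],
    ["method*", "approach*"],
    ["evaluat*", "compar*"],
]

query = build_query(concepts)
print(query)
```

In practice, each database needs its own syntax for subject headings and field tags, which is why individual strategies were developed per database.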

2.3. Study selection and data extraction

Title and abstract screening of references was performed in three steps. First, one reviewer (PV) screened all the titles and excluded obviously irrelevant citations, for example, articles on topics not related to SRs and non‐SR publications (such as randomized controlled trials, observational studies, and scoping reviews). Next, from the remaining citations, a random sample of 200 titles and abstracts was screened against the predefined eligibility criteria by two reviewers (PV and MM), independently, in duplicate. Discrepancies were discussed and resolved by consensus. This step ensured that the responses of the two reviewers were calibrated for consistency in the application of the eligibility criteria in the screening process. Finally, all the remaining titles and abstracts were reviewed by a single “calibrated” reviewer (PV) to identify potential full‐text records. Full‐text screening was performed by at least two authors independently (PV screened all the records, and duplicate assessment was conducted by MM, HC, or MG), with discrepancies resolved via discussions or by consulting a third reviewer.
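The calibration step can be sketched in code. The example below is an illustration only: it assumes citations are identified by integer IDs, uses a fixed random seed for reproducibility, and uses toy include/exclude decisions. The function names, pool size, and seed are hypothetical and do not describe the software actually used for screening.

```python
import random


def draw_calibration_sample(citation_ids, k=200, seed=2020):
    """Return k citation IDs drawn at random without replacement."""
    rng = random.Random(seed)  # fixed seed so the sample can be reproduced
    ids = list(citation_ids)
    return sorted(rng.sample(ids, min(k, len(ids))))


def find_discrepancies(decisions_a, decisions_b):
    """List citation IDs on which two reviewers' include/exclude calls differ."""
    return sorted(cid for cid in decisions_a if decisions_a[cid] != decisions_b[cid])


# Hypothetical pool of 5,000 citations left after title-only screening.
remaining = range(1, 5001)
sample = draw_calibration_sample(remaining)

# Toy decisions: reviewer B disagrees with reviewer A on exactly one record.
reviewer_a = {cid: (cid % 10 == 0) for cid in sample}
reviewer_b = dict(reviewer_a)
reviewer_b[sample[0]] = not reviewer_b[sample[0]]

to_discuss = find_discrepancies(reviewer_a, reviewer_b)
print(len(sample), to_discuss)
```

The records in `to_discuss` correspond to the discrepancies that would be resolved by consensus before single-reviewer screening continues.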

Data related to review characteristics, results, key findings, and conclusions were extracted by at least two reviewers independently (PV performed data extraction for all the reviews and duplicate extraction was performed by AP, HC, or MG).

2.4. Quality assessment of included reviews

The quality assessment of the included SRs was performed using the AMSTAR 2 (A MeaSurement Tool to Assess systematic Reviews). The tool consists of a 16‐item checklist addressing critical and noncritical domains. 18 For the purpose of this study, the domain related to MA was reclassified from critical to noncritical, as SRs with and without MA were included. The other six critical domains were used according to the tool guidelines. 18 Two reviewers (PV and AP) independently responded to each of the 16 items in the checklist with either “yes,” “partial yes,” or “no.” Based on the interpretations of the critical and noncritical domains, the overall quality of the review was rated as high, moderate, low, or critically low. 18 Disagreements were resolved through discussion or by consulting a third reviewer.
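The mapping from checklist responses to an overall rating can be sketched as follows. This is a minimal illustration that assumes the rating scheme in the published AMSTAR 2 guidance (rating driven by the number of critical flaws, then by noncritical weaknesses). How "partial yes" responses are counted is left to the reviewer and is not modeled; the function name and arguments are hypothetical.

```python
def overall_rating(critical_flaws, noncritical_weaknesses):
    """Map counts of critical flaws and noncritical weaknesses to an overall rating."""
    if critical_flaws > 1:
        return "critically low"   # more than one critical flaw
    if critical_flaws == 1:
        return "low"              # exactly one critical flaw
    if noncritical_weaknesses > 1:
        return "moderate"         # no critical flaw, several noncritical weaknesses
    return "high"                 # no critical flaw, at most one noncritical weakness


# Toy examples with hypothetical counts.
print(overall_rating(0, 1))
print(overall_rating(0, 3))
print(overall_rating(1, 0))
print(overall_rating(2, 4))
```

Under this scheme, reclassifying the MA domain as noncritical means a weakness in that domain can lower a rating from high to moderate but cannot, on its own, produce a low rating.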

2.5. Data synthesis

To provide an understandable summary of existing evidence syntheses, characteristics of the methods evaluated in the included SRs were examined and key findings were categorized and presented based on the corresponding step in the SR process. The categories of key elements within each step were discussed and agreed by the authors. Results of the included reviews were tabulated and summarized descriptively, along with a discussion on any overlap in the primary studies. 15 No quantitative analyses of the data were performed.

3. RESULTS

From 41,556 unique citations identified through literature search, 50 full‐text records were reviewed, and nine systematic reviews 14 , 19 , 20 , 21 , 22 , 23 , 24 , 25 , 26 were deemed eligible for inclusion. The flow of studies through the screening process is presented in Figure 1 . A list of excluded studies with reasons can be found in Appendix B .

Figure 1. Study selection flowchart

3.1. Characteristics of included reviews

Table 1 summarizes the characteristics of included SRs. The majority of the included reviews (six of nine) were published after 2010. 14 , 22 , 23 , 24 , 25 , 26 Four of the nine included SRs were Cochrane reviews. 20 , 21 , 22 , 23 The number of databases searched in the reviews ranged from 2 to 14, 2 reviews searched gray literature sources, 24 , 25 and 7 reviews included a supplementary search strategy to identify relevant literature. 14 , 19 , 20 , 21 , 22 , 23 , 26 Three of the included SRs (all Cochrane reviews) included an integrated MA. 20 , 21 , 23

Table 1. Characteristics of included studies

SR = systematic review; MA = meta‐analysis; RCT = randomized controlled trial; CCT = controlled clinical trial; N/R = not reported.

The included SRs evaluated 24 unique methodological approaches (26 in total) used across five steps in the SR process; 8 SRs evaluated 6 approaches, 19 , 20 , 21 , 22 , 23 , 24 , 25 , 26 while 1 review evaluated 18 approaches. 14 Exclusion of gray or unpublished literature 21 , 26 and blinding of reviewers for RoB assessment 14 , 23 were evaluated in two reviews each. Included SRs evaluated methods used in five different steps in the SR process, including methods used in defining the scope of review ( n = 3), literature search ( n = 3), study selection ( n = 2), data extraction ( n = 1), and RoB assessment ( n = 2) (Table 2 ).
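The counts in this paragraph can be reconciled with a short arithmetic check. The sketch below reflects one reading of the text: the two approaches evaluated in two reviews each account for the difference between unique and total approaches, and the review by Robson et al. is counted under three steps. The variable names are illustrative.

```python
# Unique approaches, as stated in the text.
unique_in_eight_srs = 6    # approaches covered only by the eight single-topic SRs
unique_in_one_review = 18  # approaches covered by the review evaluating 18
unique_total = unique_in_eight_srs + unique_in_one_review

# Two approaches were each evaluated in two reviews, so each is counted
# once more in the total: exclusion of gray/unpublished literature and
# blinding of reviewers for RoB assessment.
evaluated_twice = 2
total_evaluations = unique_total + evaluated_twice

# Reviews per step, as reported: scope, search, selection, extraction, RoB.
reviews_per_step = [3, 3, 2, 1, 2]
review_step_pairs = sum(reviews_per_step)

print(unique_total, total_evaluations, review_step_pairs)
```

The per-step counts sum to 11 rather than 9 because, on this reading, one review contributes to three steps (study selection, data extraction, and RoB assessment).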

Table 2. Summary of findings from reviews evaluating systematic review methods

Includes databases (MEDLINE, Embase, PsycINFO, CINAHL, Biosis, CancerLIT, Cabnar, CENTRAL, Chirolars, HealthStar, SciCitIndex, Cochrane Central Trial Register), internet, and handsearching.

Includes MEDLINE, Embase, PsycLIT, PsycINFO, Lilac and Cochrane Central Trials Register; HSS, Highly Sensitive Search; SR, systematic review; MA, meta‐analysis; RCT, randomized controlled trial; RoB, risk of bias.

There was some overlap in the primary studies evaluated in the included SRs on the same topics: Schmucker et al. 26 and Hopewell et al. 21 ( n = 4), Hopewell et al. 20 and Crumley et al. 19 ( n = 30), and Robson et al. 14 and Morissette et al. 23 ( n = 4). There were no conflicting results between any of the identified SRs on the same topic.

3.2. Methodological quality of included reviews

Overall, the quality of the included reviews was assessed as moderate at best (Table 2 ). The most common critical weakness in the reviews was failure to provide justification for excluding individual studies (four reviews). Detailed quality assessment is provided in Appendix C .

3.3. Evidence on systematic review methods

3.3.1. Methods for defining review scope and eligibility

Two SRs investigated the effect of excluding data obtained from gray or unpublished sources on the pooled effect estimates of MA. 21 , 26 Hopewell et al. 21 reviewed five studies that compared the impact of gray literature on the results of a cohort of MA of RCTs in health care interventions. Gray literature was defined as information published in “print or electronic sources not controlled by commercial or academic publishers.” Findings showed an overall greater treatment effect for published trials than trials reported in gray literature. In a more recent review, Schmucker et al. 26 addressed similar objectives, by investigating gray and unpublished data in medicine. In addition to gray literature, defined similarly to the previous review by Hopewell et al., the authors also evaluated unpublished data, defined as “supplemental unpublished data related to published trials, data obtained from the Food and Drug Administration or other regulatory websites or postmarketing analyses hidden from the public.” The review found that in the majority of the MA, excluding gray literature had little or no effect on the pooled effect estimates. The evidence was too limited to conclude whether the data from gray and unpublished literature had an impact on the conclusions of MA. 26

Morrison et al. 24 examined five studies measuring the effect of excluding non‐English language RCTs on the summary treatment effects of SR‐based MA in various fields of conventional medicine. Although none of the included studies reported a major difference in the treatment effect estimates between English only and non‐English inclusive MA, the review found inconsistent evidence regarding the methodological and reporting quality of English and non‐English trials. 24 As such, there might be a risk of introducing “language bias” when excluding non‐English language RCTs. The authors also noted that the numbers of non‐English trials vary across medical specialties, as does the impact of these trials on MA results. Based on these findings, Morrison et al. 24 conclude that literature searches must include non‐English studies when resources and time are available to minimize the risk of introducing “language bias.”

3.3.2. Methods for searching studies

Crumley et al. 19 analyzed recall (also referred to as “sensitivity” by some researchers; defined as “percentage of relevant studies identified by the search”) and precision (defined as “percentage of studies identified by the search that were relevant”) when searching a single resource to identify randomized controlled trials and controlled clinical trials, as opposed to searching multiple resources. The studies included in their review frequently compared a MEDLINE only search with the search involving a combination of other resources. The review found low median recall estimates (median values between 24% and 92%) and very low median precisions (median values between 0% and 49%) for most of the electronic databases when searched singularly. 19 A between‐database comparison, based on the type of search strategy used, showed better recall and precision for complex and Cochrane Highly Sensitive search strategies (CHSSS). In conclusion, the authors emphasize that literature searches for trials in SRs must include multiple sources. 19
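The two measures defined above can be computed directly from sets of record identifiers. The sketch below is illustrative: the record IDs and the numbers are invented to show the calculation and are not data from Crumley et al.; the function name is hypothetical.

```python
def recall_and_precision(retrieved, relevant):
    """Return (recall %, precision %) for one search.

    recall    = relevant records found / all relevant records
    precision = relevant records found / all records retrieved
    """
    retrieved, relevant = set(retrieved), set(relevant)
    hits = retrieved & relevant
    recall = 100 * len(hits) / len(relevant) if relevant else 0.0
    precision = 100 * len(hits) / len(retrieved) if retrieved else 0.0
    return recall, precision


# Toy data: 10 relevant trials exist in total (IDs 1 to 10).
relevant = set(range(1, 11))

# A single-database search finds 6 of them plus 14 irrelevant records.
single_db = set(range(1, 7)) | set(range(101, 115))

# Adding a second resource contributes 3 more relevant records.
multiple = single_db | {7, 8, 9}

single_recall, single_precision = recall_and_precision(single_db, relevant)
multi_recall, multi_precision = recall_and_precision(multiple, relevant)
print(single_recall, single_precision, multi_recall)
```

In this toy case, adding a second resource raises recall without requiring any change to the original search, which is the pattern the review's conclusion points to.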

In an SR comparing handsearching and electronic database searching, Hopewell et al. 20 found that handsearching retrieved more relevant RCTs (retrieval rate of 92%−100%) than searching in a single electronic database (retrieval rates of 67% for PsycINFO/PsycLIT, 55% for MEDLINE, and 49% for Embase). The retrieval rates varied depending on the quality of handsearching, type of electronic search strategy used (e.g., simple, complex or CHSSS), and type of trial reports searched (e.g., full reports, conference abstracts, etc.). The authors concluded that handsearching was particularly important in identifying full trials published in nonindexed journals and in languages other than English, as well as those published as abstracts and letters. 20

The effectiveness of checking reference lists to retrieve additional relevant studies for an SR was investigated by Horsley et al. 22 The review reported that checking reference lists yielded 2.5%–40% more studies depending on the quality and comprehensiveness of the electronic search used. The authors conclude that there is some evidence, although from poor quality studies, to support use of checking reference lists to supplement database searching. 22

3.3.3. Methods for selecting studies

Three approaches relevant to reviewer characteristics, including number, experience, and blinding of reviewers involved in the screening process, were highlighted in an SR by Robson et al. 14 Based on the retrieved evidence, the authors recommended that two independent, experienced, and unblinded reviewers be involved in study selection. 14 A modified approach has also been suggested by the review authors, where one reviewer screens and the other reviewer verifies the list of excluded studies, when the resources are limited. It should be noted, however, that this suggestion is likely based on the authors’ opinion, as there was no evidence related to this from the studies included in the review.

Robson et al. 14 also reported two methods describing the use of technology for screening studies: use of Google Translate for translating languages (for example, German language articles to English) to facilitate screening was considered a viable method, while using two computer monitors for screening did not increase the screening efficiency in SR. Title‐first screening was found to be more efficient than simultaneous screening of titles and abstracts, although the time gained with the former method was small. Therefore, considering that the search results are routinely exported as titles and abstracts, Robson et al. 14 recommend screening titles and abstracts simultaneously. However, the authors note that these conclusions were based on a very limited number (in most instances one study per method) of low‐quality studies. 14

3.3.4. Methods for data extraction

Robson et al. 14 examined three approaches for data extraction relevant to reviewer characteristics, including number, experience, and blinding of reviewers (similar to the study selection step). Although based on limited evidence from a small number of studies, the authors recommended use of two experienced and unblinded reviewers for data extraction. The experience of the reviewers was suggested to be especially important when extracting continuous outcomes (or quantitative) data. However, when the resources are limited, data extraction by one reviewer and a verification of the outcomes data by a second reviewer was recommended.

As for the methods involving use of technology, Robson et al. 14 identified limited evidence on the use of two monitors to improve the data extraction efficiency and computer‐assisted programs for graphical data extraction. However, use of Google Translate for data extraction in non‐English articles was not considered to be viable. 14 In the same review, Robson et al. 14 identified evidence supporting contacting authors for obtaining additional relevant data.

3.3.5. Methods for RoB assessment

Two SRs examined the impact of blinding of reviewers for RoB assessments. 14 , 23 Morissette et al. 23 investigated the mean differences between the blinded and unblinded RoB assessment scores and found inconsistent differences among the included studies providing no definitive conclusions. Similar conclusions were drawn in a more recent review by Robson et al., 14 which included four studies on reviewer blinding for RoB assessment that completely overlapped with Morissette et al. 23

Use of experienced reviewers and provision of additional guidance for RoB assessment were examined by Robson et al. 14 The review concluded that providing intensive training and guidance on assessing studies reporting insufficient data to the reviewers improves RoB assessments. 14 Obtaining additional data related to quality assessment by contacting study authors was also found to help the RoB assessments, although based on limited evidence. When assessing the qualitative or mixed method reviews, Robson et al. 14 recommend the use of a structured RoB tool as opposed to an unstructured tool. No SRs were identified on the data synthesis or the CoE assessment and reporting steps.

4. DISCUSSION

4.1. Summary of findings

Nine SRs examining 24 unique methods used across five steps in the SR process were identified in this overview. The collective evidence supports some current traditional and modified SR practices, while challenging other approaches. However, the quality of the included reviews was assessed to be moderate at best and in the majority of the included SRs, evidence related to the evaluated methods was obtained from very limited numbers of primary studies. As such, the interpretations from these SRs should be made cautiously.

The evidence gathered from the included SRs corroborates a few current SR approaches. 5 For example, it is important to search multiple resources for identifying relevant trials (RCTs and/or CCTs). The resources must include a combination of electronic database searching, handsearching, and reference lists of retrieved articles. 5 However, no SRs have been identified that evaluated the impact of the number of electronic databases searched. A recent study by Halladay et al. 27 found that articles on therapeutic intervention, retrieved by searching databases other than PubMed (including Embase), contributed only a small amount of information to the MA and also had a minimal impact on the MA results. The authors concluded that when the resources are limited and when a large number of studies are expected to be retrieved for the SR or MA, a PubMed‐only search can yield reliable results. 27

Findings from the included SRs also reiterate some methodological modifications currently employed to “expedite” the SR process. 10 , 11 For example, excluding non‐English language trials and gray/unpublished trials from MA has been shown to have minimal or no impact on the results of MA. 24 , 26 However, the efficiency of these SR methods, in terms of time and the resources used, has not been evaluated in the included SRs. 24 , 26 Of the SRs included, only two have focused on the aspect of efficiency 14 , 25 ; O'Mara‐Eves et al. 25 report some evidence to support the use of text‐mining approaches for title and abstract screening in order to increase the rate of screening. Moreover, only one included SR 14 considered primary studies that evaluated reliability (inter‐ or intra‐reviewer consistency) and accuracy (validity when compared against a “gold standard” method) of the SR methods. This can be attributed to the limited number of primary studies that evaluated these outcomes when evaluating the SR methods. 14 Lack of outcome measures related to reliability, accuracy, and efficiency precludes making definitive recommendations on the use of these methods/modifications. Future research studies must focus on these outcomes.

Some evaluated methods may be relevant to multiple steps; for example, exclusions based on publication status (gray/unpublished literature) and language of publication (non‐English language studies) can be outlined in the a priori eligibility criteria or can be incorporated as search limits in the search strategy. SRs included in this overview focused on the effect of study exclusions on pooled treatment effect estimates or MA conclusions. Excluding studies from the search results, after conducting a comprehensive search, based on different eligibility criteria may yield different results when compared to the results obtained when limiting the search itself. 28 Further studies are required to examine this aspect.

Although we acknowledge the lack of standardized quality assessment tools for methodological study designs, we adhered to the Cochrane criteria for identifying SRs in this overview. This was done to ensure consistency in the quality of the included evidence. As a result, we excluded three reviews that did not provide any form of discussion on the quality of the included studies. The methods investigated in these reviews concern supplementary search, 29 data extraction, 12 and screening. 13 However, methods reported in two of these three reviews, by Mathes et al. 12 and Waffenschmidt et al., 13 have also been examined in the SR by Robson et al., 14 which was included in this overview; in most instances (with the exception of one study included in Mathes et al. 12 and Waffenschmidt et al. 13 each), the studies examined in these excluded reviews overlapped with those in the SR by Robson et al. 14

One of the key gaps in knowledge observed in this overview was the dearth of SRs on the methods used in the data synthesis component of SR. Narrative and quantitative syntheses are the two most commonly used approaches for synthesizing data in evidence synthesis. 5 There are some published studies on the proposed indications and implications of these two approaches. 30 , 31 These studies found that both data synthesis methods produced comparable results and have their own advantages, suggesting that the choice of the method must be based on the purpose of the review. 31 With an increasing number of “expedited” SR approaches (so called “rapid reviews”) avoiding MA, 10 , 11 further research studies are warranted in this area to determine the impact of the type of data synthesis on the results of the SR.

4.2. Implications for future research

The findings of this overview highlight several areas of paucity in primary research and evidence synthesis on SR methods. First, no SRs were identified on methods used in two important components of the SR process, including data synthesis and CoE and reporting. As for the included SRs, a limited number of evaluation studies have been identified for several methods. This indicates that further research is required to corroborate many of the methods recommended in current SR guidelines. 4 , 5 , 6 , 7 Second, some SRs evaluated the impact of methods on the results of quantitative synthesis and MA conclusions. Future research studies must also focus on the interpretations of SR results. 28 , 32 Finally, most of the included SRs were conducted on specific topics related to the field of health care, limiting the generalizability of the findings to other areas. It is important that future research studies evaluating evidence syntheses broaden the objectives and include studies on different topics within the field of health care.

4.3. Strengths and limitations

To our knowledge, this is the first overview summarizing current evidence from SRs and MA on different methodological approaches used in several fundamental steps in SR conduct. The overview methodology followed well established guidelines and strict criteria defined for the inclusion of SRs.

There are several limitations related to the nature of the included reviews. Evidence for most of the methods investigated in the included reviews was derived from a limited number of primary studies. Also, the majority of the included SRs may be considered outdated as they were published (or last updated) more than 5 years ago 33 ; only three of the nine SRs have been published in the last 5 years. 14 , 25 , 26 Therefore, important and recent evidence related to these topics may not have been included. Substantial numbers of included SRs were conducted in the field of health, which may limit the generalizability of the findings. Some method evaluations in the included SRs focused on quantitative analyses components and MA conclusions only. As such, the applicability of these findings to SR more broadly is still unclear. 28 Considering the methodological nature of our overview, limiting the inclusion of SRs according to the Cochrane criteria might have resulted in missing some relevant evidence from those reviews without a quality assessment component. 12 , 13 , 29 Although the included SRs performed some form of quality appraisal of the included studies, most of them did not use a standardized RoB tool, which may impact the confidence in their conclusions. Due to the type of outcome measures used for the method evaluations in the primary studies and the included SRs, some of the identified methods have not been validated against a reference standard.

Some limitations in the overview process must be noted. While our literature search was exhaustive, covering five bibliographic databases and a supplementary search of reference lists, no gray sources or other evidence resources were searched. Also, the search was primarily conducted in health databases, which might have resulted in missing SRs published in other fields. Moreover, only English language SRs were included for feasibility. As the literature search retrieved a large number of citations (i.e., 41,556), the title and abstract screening was performed by a single reviewer, calibrated for consistency in the screening process by another reviewer, owing to time and resource limitations. These might have potentially resulted in some errors when retrieving and selecting relevant SRs. The SR methods were grouped based on key elements of each recommended SR step, as agreed by the authors. This categorization pertains to the identified set of methods and should be considered subjective.

5. CONCLUSIONS

This overview identified limited SR‐level evidence on various methodological approaches currently employed during five of the seven fundamental steps in the SR process. Limited evidence was also identified on some methodological modifications currently used to expedite the SR process. Overall, findings highlight the dearth of SRs on SR methodologies, warranting further work to confirm several current recommendations on conventional and expedited SR processes.

CONFLICT OF INTEREST

The authors declare no conflicts of interest.

Supporting information

APPENDIX A: Detailed search strategies

APPENDIX B: List of excluded studies with detailed reasons for exclusion

APPENDIX C: Quality assessment of included reviews using AMSTAR 2

ACKNOWLEDGMENTS

The first author is supported by a La Trobe University Full Fee Research Scholarship and a Graduate Research Scholarship.

Open Access Funding provided by La Trobe University.

Veginadu P, Calache H, Gussy M, Pandian A, Masood M. An overview of methodological approaches in systematic reviews. J Evid Based Med. 2022;15:39–54. 10.1111/jebm.12468

- 1. Ioannidis JPA. Evolution and translation of research findings: from bench to where. PLoS Clin Trials. 2006;1(7):e36.

- 2. Crocetti E. Systematic reviews with meta‐analysis: why, when, and how? Emerg Adulthood. 2016;4(1):3–18.

- 3. Moher D, Liberati A, Tetzlaff J, Altman DG; The PRISMA Group. Preferred reporting items for systematic reviews and meta‐analyses: the PRISMA statement. PLoS Med. 2009;6(7):e1000097.

- 4. Akers J. Systematic Reviews: CRD's Guidance for Undertaking Reviews in Health Care. CRD, University of York; 2009.

- 5. Higgins JPT, Thomas J, Chandler J, et al., eds. Cochrane Handbook for Systematic Reviews of Interventions Version 6.3 (updated February 2022). Cochrane; 2022. Available from: http://www.training.cochrane.org/handbook

- 6. Joanna Briggs Institute. Joanna Briggs Institute Reviewers’ Manual: 2015 Edition/Supplement. The Joanna Briggs Institute; 2015.

- 7. Methods Group of the Campbell Collaboration. Methodological expectations of Campbell Collaboration intervention reviews: Conduct standards. 2016.

- 8. Chandler J, Hopewell S. Cochrane methods—twenty years experience in developing systematic review methods. Syst Rev. 2013;2(1):76.

- 9. Tsertsvadze A, Chen Y‐F, Moher D, Sutcliffe P, McCarthy N. How to conduct systematic reviews more expeditiously? Syst Rev. 2015;4:160.

- 10. Ganann R, Ciliska D, Thomas H. Expediting systematic reviews: methods and implications of rapid reviews. Implement Sci. 2010;5:56.

- 11. Tricco AC, Antony J, Zarin W, et al. A scoping review of rapid review methods. BMC Med. 2015;13(1):224.

- 12. Mathes T, Klasen P, Pieper D. Frequency of data extraction errors and methods to increase data extraction quality: a methodological review. BMC Med Res Methodol. 2017;17(1):152.

- 13. Waffenschmidt S, Knelangen M, Sieben W, Buhn S, Pieper D. Single screening versus conventional double screening for study selection in systematic reviews: a methodological systematic review. BMC Med Res Methodol. 2019;19:132.

- 14. Robson RC, Pham B, Hwee J, et al. Few studies exist examining methods for selecting studies, abstracting data, and appraising quality in a systematic review. J Clin Epidemiol. 2019;106:121–135.

- 15. Pollock M, Fernandes RM, Becker LA, Pieper D, Hartling L. Chapter V: overviews of reviews. In: Higgins JPT, Thomas J, Chandler J, et al., eds. Cochrane Handbook for Systematic Reviews of Interventions version 6.3 (updated February 2022). Cochrane; 2022. Available from: http://www.training.cochrane.org/handbook

- 16. Montori VM, Wilczynski NL, Morgan D, Haynes RB. Optimal search strategies for retrieving systematic reviews from Medline: analytical survey. BMJ. 2005;330(7482):68.

- 17. Wilczynski NL, Haynes RB. EMBASE search strategies achieved high sensitivity and specificity for retrieving methodologically sound systematic reviews. J Clin Epidemiol. 2007;60(1):29–33.

- 18. Shea BJ, Reeves BC, Wells G, et al. AMSTAR 2: a critical appraisal tool for systematic reviews that include randomised or non‐randomised studies of healthcare interventions, or both. BMJ. 2017;358:j4008.

- 19. Crumley ET, Wiebe N, Cramer K, Klassen TP, Hartling L. Which resources should be used to identify RCT/CCTs for systematic reviews: a systematic review. BMC Med Res Methodol. 2005;5:24.

- 20. Hopewell S, Clarke MJ, Lefebvre C, Scherer RW. Handsearching versus electronic searching to identify reports of randomized trials. Cochrane Database Syst Rev. 2007;2007(2):MR000001.

- 21. Hopewell S, McDonald S, Clarke M, Egger M. Grey literature in meta‐analyses of randomized trials of health care interventions. Cochrane Database Syst Rev. 2007;(2):MR000010.

- 22. Horsley T, Dingwall O, Sampson M. Checking reference lists to find additional studies for systematic reviews. Cochrane Database Syst Rev. 2011;2011(8):MR000026.

- 23. Morissette K, Tricco AC, Horsley T, Chen MH, Moher D. Blinded versus unblinded assessments of risk of bias in studies included in a systematic review. Cochrane Database Syst Rev. 2011;2011(9):MR000025.

- 24. Morrison A, Polisena J, Husereau D, et al. The effect of English‐language restriction on systematic review‐based meta‐analyses: a systematic review of empirical studies. Int J Technol Assess Health Care. 2012;28(2):138–144.

- 25. O'Mara‐Eves A, Thomas J, McNaught J, Miwa M, Ananiadou S. Using text mining for study identification in systematic reviews: a systematic review of current approaches. Syst Rev. 2015;4(1):5.

- 26. Schmucker CM, Blumle A, Schell LK, et al. Systematic review finds that study data not published in full text articles have unclear impact on meta‐analyses results in medical research. PLoS ONE. 2017;12(4):e0176210.

- 27. Halladay CW, Trikalinos TA, Schmid IT, Schmid CH, Dahabreh IJ. Using data sources beyond PubMed has a modest impact on the results of systematic reviews of therapeutic interventions. J Clin Epidemiol. 2015;68(9):1076–1084.

- 28. Nussbaumer‐Streit B, Klerings I, Dobrescu A, et al. Excluding non‐English publications from evidence‐syntheses did not change conclusions: a meta‐epidemiological study. J Clin Epidemiol. 2020;118:42–54.

- 29. Cooper C, Booth A, Britten N, Garside R. A comparison of results of empirical studies of supplementary search techniques and recommendations in review methodology handbooks: a methodological review. Syst Rev. 2017;6(1):234.

- 30. Melendez‐Torres GJ, O'Mara‐Eves A, Thomas J, Brunton G, Caird J, Petticrew M. Interpretive analysis of 85 systematic reviews suggests that narrative syntheses and meta‐analyses are incommensurate in argumentation. Res Synth Methods. 2017;8(1):109–118.

- 31. Melendez‐Torres GJ, Thomas J, Lorenc T, O'Mara‐Eves A, Petticrew M. Just how plain are plain tobacco packs: re‐analysis of a systematic review using multilevel meta‐analysis suggests lessons about the comparative benefits of synthesis methods. Syst Rev. 2018;7(1):153.

- 32. Nussbaumer‐Streit B, Klerings I, Wagner G, et al. Abbreviated literature searches were viable alternatives to comprehensive searches: a meta‐epidemiological study. J Clin Epidemiol. 2018;102:1–11.

- 33. Shojania KG, Sampson M, Ansari MT, Ji J, Doucette S, Moher D. How quickly do systematic reviews go out of date? A survival analysis. Ann Intern Med. 2007;147(4):224–233.

- Open access

- Published: 10 October 2023

Clinical systematic reviews – a brief overview

- Mayura Thilanka Iddagoda 1 , 2 &

- Leon Flicker 1 , 2

BMC Medical Research Methodology volume 23 , Article number: 226 ( 2023 ) Cite this article

Systematic reviews answer research questions through a defined methodology. Conducting one is a complex task, and reviewers usually need to consult multiple articles to acquire the wide range of knowledge required. The aim of this article is to bring the process into a single paper.

The statistical concepts and sequence of steps to conduct a systematic review or a meta-analysis are examined by the authors.

The process of conducting a clinical systematic review is described in seven manageable steps in this article. Each step is explained with examples to make the method clear.

A complex process of conducting a systematic review is presented simply in a single article.

Systematic reviews are a structured approach to answer a research question based on all suitable available empirical evidence. The statistical methodology used to synthesize results in such a review is called ‘meta-analysis’. There are five types of clinical systematic reviews described in this article (see Fig. 1 ), including intervention, diagnostic test accuracy, prognostic, methodological and qualitative. This review will provide a very brief overview in a narrative fashion. This article does not cover systematic reviews of more epidemiologically based studies. The recommended process undertaken in a systematic review is described under seven steps in this paper [ 1 ].

Fig. 1. Types of systematic reviews

There are resources for those who are moving from the beginning stage and gaining more expertise (See Table 1 ). Cochrane conducts online interactive master classes on systematic reviews throughout the year and there are web tutorials in the form of e-learning modules. Some groups in Cochrane commission a limited number of systematic reviews and can be contacted directly for support ([email protected]). Some institutions have systematic review training programs, including Johns Hopkins (Coursera), Joanna Briggs Institute (JBI education), Yale University (Search strategy), University of York (Centre for Reviews) and Mayo Clinic Libraries. The BMC Systematic Reviews group has also introduced a “Peer review mentoring” program to support early researchers in systematic reviews. The local university or hospital librarian is usually a good first point of reference for searches and is able to direct reviewers to other support.

Research question and study protocol

A clearly defined study question is vital and will direct the following steps in a systematic review. The question should have some novelty (e.g. an existing review should not be repeated unless new primary studies have become available) and be of interest to the reviewers. Major conflicts of interest can be problematic (e.g. employment by a company that manufactures the intervention). Primary components of a research question should include inclusion criteria, search strategy, analysis or outcome measures and interpretation. The type of review will determine the category of research question, such as intervention, prognostic, diagnostic, etc. [ 1 ].

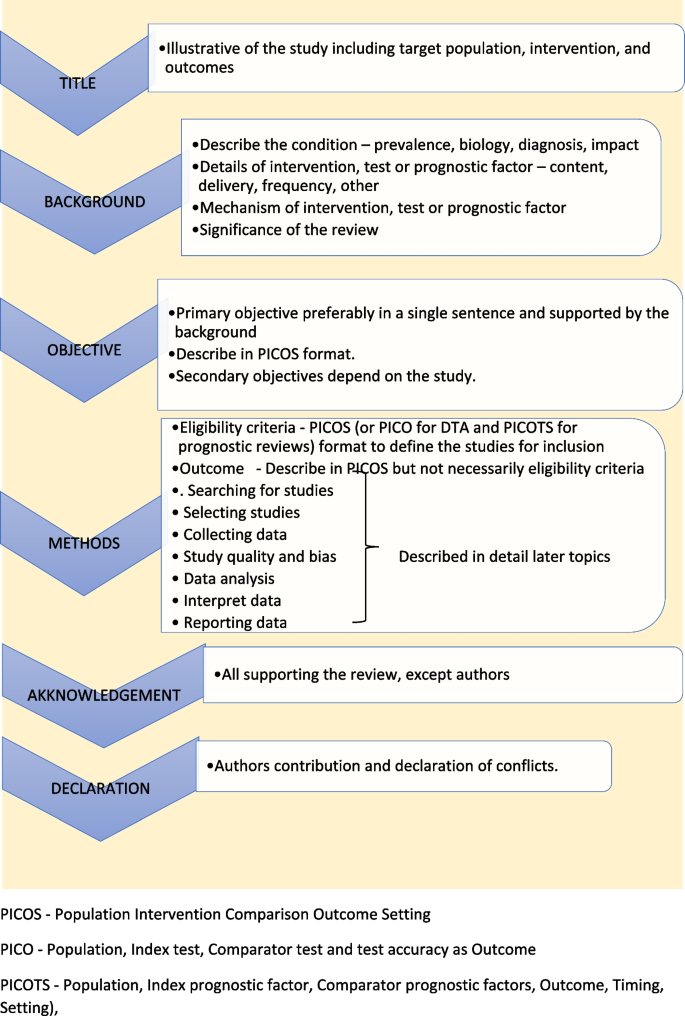

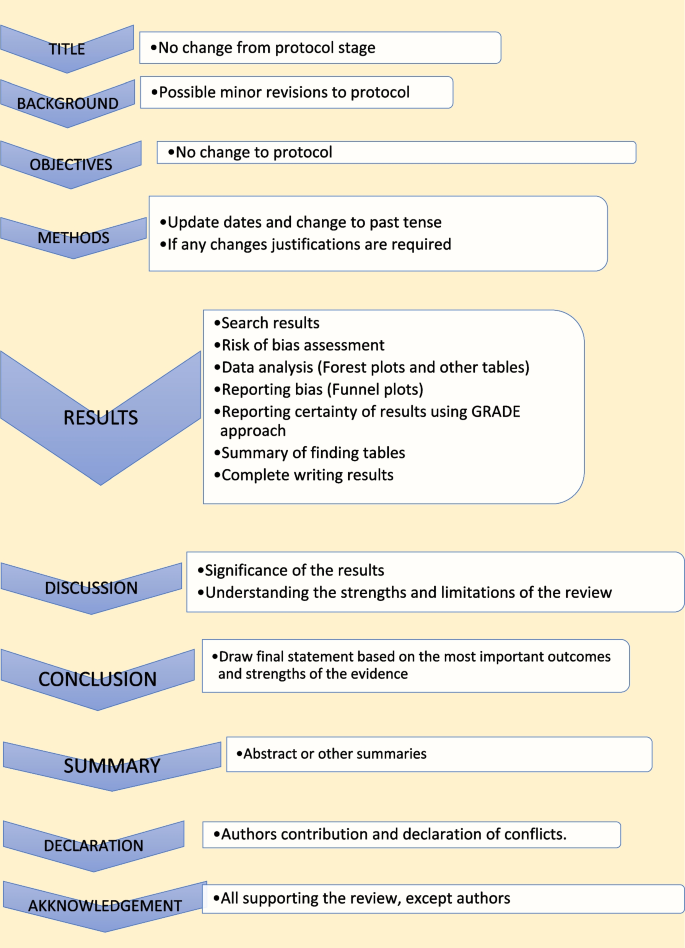

The study protocol elaborates the research question. The language of the study protocol is important. It is usually written in the future tense, in accessible language, in the active voice and in full sentences [ 2 ]. The structure of the review protocol is described in Fig. 2 .

Fig. 2. Structure of the review protocol

Searching studies

The comprehensive search for eligible studies is the most defining step in a systematic review. The guidance by an information specialist, or an experienced librarian, is a key requirement for designing a thorough search strategy [ 3 , 4 ].

The search strategy should explore multiple sources rigorously and it should be reproducible. It is important to balance sensitivity and precision in designing a search plan. A sensitive approach will provide a large number of studies, which lowers the risk of missing relevant studies but may produce a large workload. On the other hand, a focused search (precision) will give a more manageable number of studies but increases the risk of missing studies.
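The trade-off between sensitivity and precision can be illustrated with a small calculation. The sketch below is only an illustration: the function name and all counts are hypothetical, and it assumes the total number of relevant studies is known (for example from a pre-identified test set), which is rarely the case in a real review.

```python
def search_performance(retrieved, relevant_retrieved, relevant_total):
    """Return (sensitivity, precision, number needed to read) for one search."""
    if retrieved <= 0 or relevant_total <= 0:
        raise ValueError("counts must be positive")
    sensitivity = relevant_retrieved / relevant_total   # share of relevant studies found
    precision = relevant_retrieved / retrieved          # share of retrieved records that are relevant
    number_needed_to_read = 1 / precision if precision > 0 else float("inf")
    return sensitivity, precision, number_needed_to_read

# Hypothetical comparison of a broad and a focused search for the same question
broad = search_performance(retrieved=5000, relevant_retrieved=48, relevant_total=50)
focused = search_performance(retrieved=400, relevant_retrieved=36, relevant_total=50)

for name, (sens, prec, nnr) in {"broad": broad, "focused": focused}.items():
    print(f"{name}: sensitivity={sens:.2f}, precision={prec:.4f}, records per relevant study={nnr:.1f}")
```

In this made-up example the broad search misses fewer relevant studies but requires far more records to be screened per relevant study found.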

There are multiple sources to search for eligible studies in a systematic review or a meta-analysis. The key databases are CENTRAL (Cochrane Central Register of Controlled Trials), MEDLINE (PubMed) and Embase. There are many other databases, published reviews and reference lists that may be used. Forward citation tracking can be done for retrieved studies using citation indices like Google Scholar, Scopus or Web of Science. Some studies are presented to governmental and non-governmental organizations at different levels and are not issued by commercial publishers. These studies are called ‘grey literature’. Extensive investigation of different sources is required to identify grey literature. Information specialists are helpful in finding these studies [ 2 ].

Designing the search strategy requires a structured approach. Again, assistance from a librarian or an information specialist is recommended. PICOS, PICO and PICOTS elements are used to design key concepts. Participants and study design are relevant elements used in all reviews. Intervention reviews require specification of the intervention’s exact nature. Outcomes are important for both intervention and prognostic reviews.

Search terms are then developed using key concepts. There are two main types of search terms (text words and index terms). Text words or natural language terms appear in most publications. Different authors may use different text words for the same pathology. For example, words such as injury, wound and trauma are used to describe physical damage to the body. Index terms, on the other hand, are controlled vocabularies defined by database indexers [ 4 ]. Common index terms are MeSH (Medical Subject Headings) in MEDLINE and Emtree in Embase. The index terms do not change with the interface (e.g. the term ‘wounds and injuries’ is used for all types of damage to the body from external causes) [ 5 ].

Search filters are predefined combinations of search terms used to retrieve particular types of studies. The choice of filters depends on the study design, database and interface. Specific words called ‘Boolean operators’ are used to combine search terms. The main Boolean operators are ‘OR’, which broadens the search (accidents OR falls will include all studies with either term), and ‘AND’, which narrows the search (accidents AND falls will select only studies with both terms). In a standard search strategy, all terms within a key concept are combined with ‘OR’ and the concepts are then combined with ‘AND’.
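The OR-within-concept, AND-between-concepts pattern can be sketched in a few lines. This is a generic illustration, not the syntax of any particular database interface; the helper name `build_query` and the grouping of terms into concepts are assumptions made for the example.

```python
def build_query(concepts):
    """Join synonyms with OR inside each concept, then join the concepts with AND."""
    groups = []
    for terms in concepts:
        # Quote multi-word phrases so they are kept together
        quoted = [f'"{term}"' if " " in term else term for term in terms]
        groups.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(groups)

concepts = [
    ["injury", "wound", "trauma"],   # text words for the same condition
    ["accidents", "falls"],          # text words for the cause
]
query = build_query(concepts)
print(query)
```

Each database has its own field tags and truncation symbols, so a string like this would normally be adapted with the help of an information specialist.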

Limits and restrictions are used in search strategy to improve precision. The common restrictions are language selections, publication date limits and format boundaries. These limits may result in missing relevant studies. It is good practice to explain the reason for restrictions in the search strategy. It is also important to be aware of errors and retractions in selected studies. Information specialists can add terms to remove such studies in the search process. The final step is piloting the search strategy. It will give an opportunity to adjust the search strategy for optimal sensitivity and precision [ 6 ].

All systematic reviews require consistent management of the retrieved studies. It is challenging to manage a large number of studies manually. Reference management software can merge all search results, remove duplicates, record the number of studies selected at each step, store methodology and selection criteria, and support exporting selected studies to analysis software. Specific platforms and software packages are extremely useful and can save time and effort in navigating the search and compiling the appropriate data. There are many software packages available for systematic review reference management, including Covidence, Abstrackr, CADIMA, SUMARI and DistillerSR.
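To give a feel for what merging and de-duplication involve, the following is a minimal sketch and not a description of how any of the named packages work. The record fields, the matching rule (DOI first, otherwise normalised title plus year) and the example records with their DOIs are all hypothetical.

```python
import re

def normalise_title(title):
    """Lower-case a title and collapse punctuation and spacing."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()

def deduplicate(records):
    """Keep the first record seen for each DOI, or for each title/year pair if no DOI."""
    seen, unique = set(), []
    for rec in records:
        doi = (rec.get("doi") or "").lower().strip()
        key = ("doi", doi) if doi else ("title", normalise_title(rec["title"]), rec.get("year"))
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique

# Hypothetical records exported from three databases
records = [
    {"title": "Falls prevention in older adults", "year": 2020, "doi": "10.1000/example.1", "source": "MEDLINE"},
    {"title": "Falls Prevention in Older Adults.", "year": 2020, "doi": "10.1000/EXAMPLE.1", "source": "Embase"},
    {"title": "Wound care after trauma", "year": 2019, "doi": None, "source": "MEDLINE"},
    {"title": "Wound care after trauma!", "year": 2019, "doi": None, "source": "CENTRAL"},
    {"title": "Wound care after trauma", "year": 2021, "doi": None, "source": "Embase"},
]
unique = deduplicate(records)
print(f"{len(records)} records retrieved, {len(records) - len(unique)} duplicates removed")
```

A rule this simple will miss duplicates where only one copy carries a DOI, which is one reason automated de-duplication is usually followed by a manual check.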

Throughout the search process, documentation is crucial. Search criteria and strategy, the total number of studies at each step, the databases and non-database sources searched and copies of internet results are important records. If the search is more than 12 months old, it is advisable to re-run it to minimize the risk of missing new studies [ 2 , 6 ].

Selecting studies

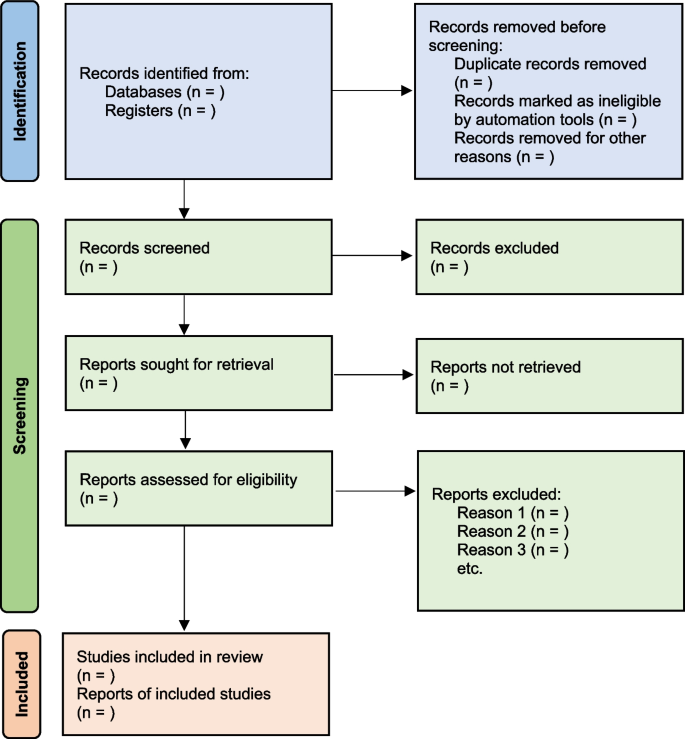

All retrieved studies are screened to select those eligible for synthesis. The number of studies included and excluded at each stage of selection needs to be documented. The PRISMA flow diagram (Fig. 3 ) can be used to report the selection process [ 7 ].

Fig. 3. PRISMA flow diagram for the systematic review study selection process
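The counts reported in a flow diagram follow from simple subtraction, so they can be checked for consistency before reporting. The sketch below assumes a simplified flow with only three exclusion points; the function name and the numbers are hypothetical, and a full PRISMA diagram has more boxes than this.

```python
def prisma_counts(identified, duplicates, excluded_on_title_abstract, excluded_on_full_text):
    """Derive the counts reported at each stage of a simplified selection flow."""
    screened = identified - duplicates
    full_text_assessed = screened - excluded_on_title_abstract
    included = full_text_assessed - excluded_on_full_text
    if min(screened, full_text_assessed, included) < 0:
        raise ValueError("exclusions exceed the records available at some stage")
    return {
        "identified": identified,
        "screened": screened,
        "full_text_assessed": full_text_assessed,
        "included": included,
    }

flow = prisma_counts(identified=1200, duplicates=200,
                     excluded_on_title_abstract=900, excluded_on_full_text=80)
for stage, count in flow.items():
    print(f"{stage}: {count}")
```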

During the selection process, it is important to minimize bias. This can be achieved by measures such as having a pre-planned written review protocol with inclusion and exclusion criteria, adding study design as an inclusion criterion and independent study selection by at least 2 researchers. Items to consider in collecting data are source, eligibility, methods, outcomes, and results. Outcomes should be based on what is important to patients, not what researchers have decided to measure. Other items of interest are bibliographic information and references to other relevant studies. The most important decisions for the entire review are whether individual studies will be included in or excluded from subsequent analyses. This may be the major determinant of the final composite results of the review. It is important to resolve any discrepancies in individual judgements by reviewers as objectively as possible, always remembering that individuals may by nature be “lumpers” or “splitters” (Darwin, Charles (1 August 1857). "Letter no. 2130". Darwin Correspondence Project).

Once the items to collect are decided, data extraction forms can be used to collect data for the review. The extraction form can be set up on paper, as a soft copy (Word, Excel or PDF format) or by using a database from specific software (e.g. Covidence, EPPI-Reviewer, etc.). All recordable outcome measures are collected for optimal analysis. Almost inevitably, some included studies will not provide usable data for extraction. These challenges are managed as shown in Table 2 .

It is important to be polite and clear when contacting authors. Imputing missing data carries a risk of error and it is best to get as much information as possible from the relevant authors. There are different data categories used to report outcomes in research studies. Table 3 summarizes common data types with some examples [ 2 ].

Study quality and bias

The results will not represent accurate evidence when there is bias in a study. Poor-quality studies introduce bias into a systematic review. Risk of bias is decreased, and study quality improved, by clear-cut randomization, outcome data on all participants (i.e. complete follow-up) and blinding (of both participants and outcome assessors) [ 2 , 8 ].

The Cochrane Risk of Bias tool (RoB) [ 9 ] can be used to assess risk of bias in Randomized Controlled Trials (RCTs). For Non-Randomized Studies of Interventions (NRSI), tools such as the Newcastle-Ottawa Scale [ 10 ], ROBINS-I [ 11 ] and the Downs and Black checklist [ 12 ] can be used. Bias domains in RCTs and NRSI are listed in Table 4 .

Blinding and masking can minimize the bias secondary to deviation from intended interventions. Missing outcome data, or attrition, due to issues such as participant withdrawal, loss to follow-up and lost data is also a common cause of bias in studies. Researchers use imputation to address missing data, which could lead to over- or underestimation of intervention effects. Sensitivity analysis can be conducted to investigate the effect of such assumptions. Selective reporting is another problem and is difficult to identify; sources such as clinical trial registries or published trial protocols can be used to detect such discrepancies.

Data analysis

Analysis of data is crucial in a systematic review, and important aspects of this step are described below [ 2 , 13 ].

- Effect measure

Outcome data from the selected studies will be reported in different measures. It is important to select a comparable effect measure across all studies for a particular outcome to facilitate synthesis of an overall effect measure. Common effect measures for dichotomous outcomes are risk ratios (RR), odds ratios (OR) and risk differences (RD; also called absolute risk reduction, ARR). These measures are selected for the analysis based on their consistency, mathematical properties, and ease of communication. For diagnostic test accuracy (DTA) reviews, sensitivity and specificity are commonly used.
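The three dichotomous effect measures can be computed from the same 2×2 table. The sketch below uses standard large-sample (Wald-type) confidence intervals and made-up trial counts; the function name is hypothetical, and it deliberately rejects tables with zero cells, which need a continuity correction that is not shown here.

```python
import math

def dichotomous_effects(events_t, n_t, events_c, n_c, z=1.96):
    """Risk ratio, odds ratio and risk difference, each as (estimate, lower, upper)."""
    a, b = events_t, n_t - events_t   # treatment arm: events, non-events
    c, d = events_c, n_c - events_c   # control arm: events, non-events
    if min(a, b, c, d) <= 0:
        raise ValueError("this sketch does not handle zero cells")
    risk_t, risk_c = a / n_t, c / n_c

    def ratio_ci(estimate, se_log):
        # Ratio measures are analysed on the log scale and back-transformed
        return estimate, estimate * math.exp(-z * se_log), estimate * math.exp(z * se_log)

    rr = ratio_ci(risk_t / risk_c, math.sqrt(1 / a - 1 / n_t + 1 / c - 1 / n_c))
    odds_ratio = ratio_ci((a * d) / (b * c), math.sqrt(1 / a + 1 / b + 1 / c + 1 / d))
    rd = risk_t - risk_c
    se_rd = math.sqrt(risk_t * (1 - risk_t) / n_t + risk_c * (1 - risk_c) / n_c)
    return {"RR": rr, "OR": odds_ratio, "RD": (rd, rd - z * se_rd, rd + z * se_rd)}

# Hypothetical trial: 15/100 events with the intervention, 30/100 with control
effects = dichotomous_effects(events_t=15, n_t=100, events_c=30, n_c=100)
for name, (est, low, high) in effects.items():
    print(f"{name}: {est:.2f} (95% CI {low:.2f} to {high:.2f})")
```

The same data give a risk ratio of 0.5 but an odds ratio further from 1, which is one reason the choice of measure affects how results are communicated.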

The mean difference (MD) is the commonest effect measure for continuous outcome data. When interpreting the MD, report as many details as possible, such as the size of the difference, the nature of the outcome (good or bad) and the characteristics of the scale, for better understanding of the results. However, studies in the review may not use the same scales and standardization of results may be required. The standardized mean difference (SMD) can be calculated in such situations, provided the scales measure the same concept. The SMD is expressed in units of standard deviation (SD). It is important to align the direction of the scales before combining them. All outcome data should be reported along with a measure of uncertainty such as a confidence interval (CI).
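A short sketch can show how standardization puts different scales on a common footing. It computes Hedges' g, one common form of the SMD, with a widely used large-sample approximation for its standard error; the function name, the `higher_is_better` flag used to align scale direction, and the two studies are hypothetical.

```python
import math

def hedges_g(mean_t, sd_t, n_t, mean_c, sd_c, n_c, higher_is_better=True):
    """Standardized mean difference (Hedges' g) and an approximate standard error."""
    pooled_sd = math.sqrt(((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / (n_t + n_c - 2))
    d = (mean_t - mean_c) / pooled_sd
    if not higher_is_better:
        d = -d                                   # align direction: positive favours the intervention
    n = n_t + n_c
    j = 1 - 3 / (4 * n - 9)                      # small-sample correction factor
    se_d = math.sqrt(n / (n_t * n_c) + d**2 / (2 * n))
    return j * d, j * se_d

# Two hypothetical studies measuring the same concept on different scales
g_a, se_a = hedges_g(60, 20, 50, 50, 20, 50)                       # 0-100 scale, higher is better
g_b, se_b = hedges_g(4, 2, 50, 5, 2, 50, higher_is_better=False)   # 0-10 scale, lower is better
print(f"Study A: g={g_a:.3f} (SE {se_a:.3f}); Study B: g={g_b:.3f} (SE {se_b:.3f})")
```

Although the raw mean differences are 10 points and 1 point, both studies yield the same standardized effect once the scale and its direction are accounted for.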

Studies may report endpoint data or change-from-baseline data. Endpoint scores are usually reported with standard deviations (SD) and change-from-baseline data as MD. Although it is possible to combine the two types of data, SMD calculations are inaccurate in such situations. It is also good practice to conduct sensitivity analyses to assess the robustness of the choices made.

Meta-analysis

There are many advantages to performing a meta-analysis. It combines samples and provides more precise quantitative answers to the study objective. Study quality, comparability of data and data formats affect the output of the meta-analysis. The acceptable steps in meta-analysis are described in Table 5 .

- Heterogeneity

Variation across studies, more than expected by chance, is called heterogeneity. Although there are several types of heterogeneity such as clinical (variations in population and interventions), methodological (differences in designs and outcomes) and statistical (variable measure of effects), statistical heterogeneity is the most important type to discuss in meta-analysis [ 2 , 14 , 15 ].

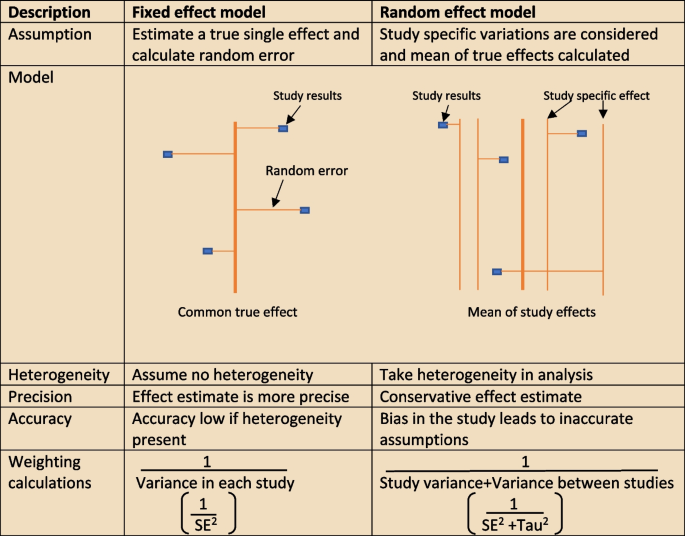

The heterogeneity assumptions affect data analysis. There are two models, as described in Fig. 4 , used to assess heterogeneity. If the heterogeneity is minimal, then Tau² is close to zero and the weight estimates from both methods are similar. Tau is the standard deviation of the true effects between studies and Tau² is the variance.

Fig. 4. Heterogeneity assumption methods

There are a few tools to assess heterogeneity: the Q test, the I² statistic and visual inspection of the forest plot. The easiest method is visual inspection of the forest plot. Studies whose confidence intervals do not overlap are not homogeneous. Heterogeneity matters most when study estimates fall on both sides of the line of no effect, because it then affects the direction of the overall effect. The chi-squared or Q test assumes all studies measure the same effect, and a low p value suggests high heterogeneity. However, the reliability of the Q test is low when the number of studies is very small or very large, as the test becomes insufficiently or excessively sensitive, thus under- or over-diagnosing heterogeneity respectively. The other tool is the I² statistic, which presents heterogeneity as a percentage. Low values, below 30%, suggest minimal heterogeneity.
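The heterogeneity statistics can be computed directly from study effects and standard errors. The sketch below assumes that the two models referred to are the usual fixed-effect and random-effects inverse-variance models, and it estimates the between-study variance with the DerSimonian-Laird method, which is one common choice among several. The function name and the input data are hypothetical, and the p value for Q is omitted because it requires a chi-squared distribution function.

```python
def pool(effects, std_errors):
    """Inverse-variance pooling with Q, I-squared and DerSimonian-Laird tau-squared."""
    k = len(effects)
    w = [1 / se**2 for se in std_errors]                        # fixed-effect weights
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    df = k - 1
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0         # percentage of variation beyond chance
    c = sum(w) - sum(wi**2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0             # between-study variance
    w_re = [1 / (se**2 + tau2) for se in std_errors]            # random-effects weights
    random_effects = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    return {"fixed": fixed, "random": random_effects, "Q": q, "df": df, "I2": i2, "tau2": tau2}

# Hypothetical log risk ratios from three studies with equal standard errors
homogeneous = pool([-0.20, -0.25, -0.15], [0.1, 0.1, 0.1])
heterogeneous = pool([-0.60, 0.00, -0.30], [0.1, 0.1, 0.1])
for label, res in {"homogeneous": homogeneous, "heterogeneous": heterogeneous}.items():
    print(f"{label}: Q={res['Q']:.2f} on {res['df']} df, I2={res['I2']:.1f}%, tau2={res['tau2']:.3f}")
```

When the estimated between-study variance is zero, the random-effects weights reduce to the fixed-effect weights and the two pooled estimates coincide.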

The next step is to deal with heterogeneity by exploring possible causes. Errors in data collection or analysis and true variations in population or intervention are common reasons for outlying results. These identified reasons should be presented cautiously in subgroup analysis. If no cause is identified, mention this in the review as unexplained heterogeneity (see the GRADE approach, described later). In each subgroup, the heterogeneity and effect modification should be reported. It is also important to have a logical basis for each factor reported in the subgroup analysis, as too many factors may confuse readers. It is equally important to make sure there is meaningful clinical relevance in these subgroups.

Different study designs and missing data

Some studies may have more than one intervention arm. It is reasonable to ignore intervention arms of no interest in the review. But if all treatment arms need to be included, the control group could be divided evenly amongst the intervention arms, or all arms could be analyzed together or separately. The unit of analysis error is common in the analysis of cluster randomized trials, since the clusters, not the individual participants, are the units of allocation. Similarly, correlation should be considered in crossover trials to avoid over- or under-weighting the study in the analysis. There will be a high risk of bias and heterogeneity in analyzing nonrandomized studies (NRS). However, standard effect measures can be used in meta-analysis of relatively homogeneous NRS.

Sometimes summary statistics are missing, and it is reasonable to calculate means and SDs from the available data. Imputation of data should be done cautiously and examined in a sensitivity analysis.
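As an example of recovering a missing statistic, an SD for a single group can be back-calculated from a reported standard error or from the confidence interval of the group mean. The sketch below uses the normal-approximation relationships; the function names and numbers are hypothetical, and for small samples a t-distribution multiplier would be more appropriate than the z value of 1.96 assumed here.

```python
import math

def sd_from_se(se, n):
    """SD of a group from the standard error of its mean."""
    return se * math.sqrt(n)

def sd_from_ci(lower, upper, n, z=1.96):
    """SD of a group from the 95% CI of its mean (large-sample, z-based)."""
    return math.sqrt(n) * (upper - lower) / (2 * z)

# Hypothetical group of 25 participants with mean 50 and standard error 2
print(sd_from_se(se=2.0, n=25))
print(sd_from_ci(lower=50 - 3.92, upper=50 + 3.92, n=25))
```

Both routes should give the same SD when the interval was built from the same standard error, which offers a quick check on the reported values.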

Reporting and interpretation of results

It is important to report results in depth and not merely statistical values. The main measures used to report a meta-analysis are the confidence interval (CI) and SMD [ 2 ].

The CI is the range within which the true value probably lies. A narrow CI suggests a more precise estimate. The CI is usually presented as a 95% interval (corresponding to a p value of 0.05) and rarely as a 90% interval (p value of 0.1). The result is statistically significant when the CI does not cross the line of no effect. However, even statistically significant effects may not have clinical value if they do not reach the minimally important change. On the other hand, effects that are not statistically significant may still have clinical importance, which raises questions about the overall power of the meta-analysis to detect clinically important effects.
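The distinction between statistical significance and clinical importance can be made explicit by comparing the CI with both the line of no effect and the minimally important change. The sketch below is one simple way to frame that comparison, not an established rule: the function name, the three labels and the example intervals are hypothetical, and it assumes a difference measure on which positive values favour the intervention.

```python
def interpret_ci(lower, upper, mic, null=0.0):
    """Return (statistically significant?, clinical importance label) for a CI."""
    significant = lower > null or upper < null        # CI does not cross the line of no effect
    if lower >= mic:
        importance = "clinically important"           # whole CI at or beyond the threshold
    elif upper < mic:
        importance = "not clinically important"       # whole CI below the threshold
    else:
        importance = "uncertain"                      # CI includes the threshold
    return significant, importance

# Hypothetical mean differences with a minimally important change of 5 points
print(interpret_ci(0.5, 1.5, mic=5))     # significant but too small to matter
print(interpret_ci(-1.0, 12.0, mic=5))   # not significant, yet an important effect is not excluded
print(interpret_ci(6.0, 10.0, mic=5))    # significant and important
```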

The SMD is defined above (“ Data analysis ” section) as an effect measure. A value other than zero indicates a difference between the intervention and control groups. However, the size of the effect is difficult to interpret because the SMD is expressed in units of standard deviation (SD). Reasonable methods to report SMD results are Cohen’s rule of thumb (SMD <0.4 small effect, >0.7 large effect and moderate in between), transformation to OR (assuming equal SDs in both control and intervention arms) or calculating estimated MDs on a familiar scale.
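The three reporting options can be sketched as small helper functions. The thresholds are those quoted in the text; the conversion to an odds ratio uses the commonly cited approximation ln(OR) = (π/√3) × SMD, which is an assumption added here and is not stated in the article. The function names and the typical SD of 8 points are hypothetical.

```python
import math

def describe_smd(smd):
    """Label the size of an SMD using the thresholds quoted in the text."""
    size = abs(smd)
    if size < 0.4:
        return "small"
    if size > 0.7:
        return "large"
    return "moderate"

def smd_to_odds_ratio(smd):
    """Approximate odds ratio from an SMD via ln(OR) = (pi / sqrt(3)) * SMD."""
    return math.exp(math.pi / math.sqrt(3) * smd)

def smd_to_scale(smd, typical_sd):
    """Re-express an SMD as a mean difference on a familiar scale with a known SD."""
    return smd * typical_sd

smd = 0.5
print(describe_smd(smd))
print(round(smd_to_odds_ratio(smd), 2))
print(smd_to_scale(smd, typical_sd=8))   # points on a hypothetical scale with SD 8
```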

Reporting bias and certainty of evidence

The risk of missing information in a systematic review in the process from writing the study protocol to publication is called reporting bias. Many factors such as author beliefs, word limitations, and editorial and reviewers’ approvals can cause reporting bias. Funnel plots are a recommended graphical method to detect reporting bias in systematic reviews and meta-analyses.

Reporting the certainty of the results is another important step at the end of study analysis. The Grading of Recommendations, Assessment, Development and Evaluation (GRADE) is a recommended structured approach to report certainty of data. Table 6 describes the topics used to rate the certainty up or down according to the GRADE system [ 16 ]. Another important aspect of a systematic review is to categorize and present research studies based on the quality of the study.

The final rating of certainty in a meta-analysis is based on the combination of all domains, within each study and across studies. This information should be given in the results section using numbers and explained in text in the discussion. The same system can be used in narrative synthesis of results in systematic reviews. It is important to remember that rating up is only relevant for non-randomized studies, and that randomized studies start with higher certainty.
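The logic of starting levels and rating up or down can be sketched as simple bookkeeping. This is only an illustration: GRADE ratings are structured judgements rather than arithmetic, and the sketch assumes the conventional starting points (high for randomized evidence, low for non-randomized evidence) and the four certainty labels. The function name and the example judgements are hypothetical.

```python
LEVELS = ["very low", "low", "moderate", "high"]

def grade_certainty(randomized, downgrades, upgrades=0):
    """Return a certainty label from a starting level and domain judgements.

    downgrades maps each domain to the number of levels rated down (0, 1 or 2).
    """
    start = LEVELS.index("high") if randomized else LEVELS.index("low")
    if randomized:
        upgrades = 0   # rating up is treated as relevant only to non-randomized evidence
    score = start - sum(downgrades.values()) + upgrades
    return LEVELS[max(0, min(len(LEVELS) - 1, score))]

# Hypothetical judgements for two bodies of evidence
rct_body = grade_certainty(True, {"risk of bias": 1, "inconsistency": 0,
                                  "indirectness": 0, "imprecision": 1,
                                  "publication bias": 0})
nrs_body = grade_certainty(False, {"risk of bias": 0}, upgrades=1)
print(rct_body, "|", nrs_body)
```

Recording the judgement for each domain separately, as the dictionary does here, also makes it easier to justify the final rating in footnotes.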

Reporting the review

The last step of a systematic review or meta-analysis is report writing. Here, all parts are merged to write the review in structured format, using the protocol as the starting point. All systematic reviews should have a protocol to begin with as shown in Fig. 5 [ 2 ].

Fig. 5. Structure for report writing

Summary of findings table

The ‘summary of findings’ table is a useful step in the writing. All the outcomes with a list of studies are recorded in this table. Then the relative / absolute effect (imported from forest plots), certainty of evidence (based on GRADE) and comments are included in separate columns. Footnotes can be included to explain decisions. Software such as GRADEpro, which is compatible with RevMan, is available to develop summary of findings tables [ 17 ].

Presenting results

The first paragraph of the results describes the search process. The PRISMA flow diagram (described in Fig. 3 ) is recommended to report the search summary [ 7 ]. The second section is the summary of risk of bias assessment for included studies. This will be only a narrative description of significant differences, as individual study risk of bias will be presented in detail in data tables. Following this, review findings are presented in a structured format.

The effects of interventions are presented in forest plots and data tables/figures. It is important to remember that this is not the section to interpret or infer results. All outcomes planned in the protocol should be reported, including the outcomes without evidence. The order of outcomes should be kept consistent throughout the review. Present intervention vs no intervention before one intervention vs another. Primary outcomes are compared first, followed by secondary outcomes. Throughout the writing, check the consistency of results among plots, tables, figures, and text. However, it may not be feasible to publish all plots and tables in the main document. Supplementary materials or appendices are available in journals for less important analyses.

There may be situations where the selected studies are too diverse for a meta-analysis. Narrative synthesis is an option for analyzing results in such situations. Grouping the studies makes the data easier to examine in a narrative synthesis. Avoid vote counting, that is, tallying positive and negative studies, in a narrative synthesis.
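A small numerical sketch shows why vote counting by statistical significance can mislead. The three studies below are hypothetical, with identical effect estimates that are each too imprecise to reach significance on their own. The fixed-effect inverse-variance pooling is included only to expose the flaw in vote counting; it is not a suggestion to pool studies that are too diverse.

```python
import math

# Hypothetical studies: (log risk ratio, standard error). Illustrative values.
studies = [(-0.30, 0.20), (-0.30, 0.20), (-0.30, 0.20)]

Z_CRIT = 1.96  # two-sided 5% significance threshold

# Vote counting: how many studies are individually "significant"?
significant_votes = sum(1 for est, se in studies if abs(est / se) > Z_CRIT)

# Fixed-effect inverse-variance pooling of the same data.
weights = [1 / se**2 for _, se in studies]
pooled = sum(w * est for w, (est, _) in zip(weights, studies)) / sum(weights)
pooled_se = math.sqrt(1 / sum(weights))
pooled_z = pooled / pooled_se

print(f"significant studies: {significant_votes} of {len(studies)}")
print(f"pooled log RR: {pooled:.2f}, z = {pooled_z:.2f}")
```

No single study crosses the threshold, so a vote count would suggest no effect, even though every study points in the same direction and the combined evidence is considerably more precise.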

The first paragraph of the discussion should summarize the main findings, both positive and negative, along with the certainty of evidence. The summary of findings table can be used to identify the most important outcomes. Then describe whether the results answer the review questions as framed in the PICOS format.

The quality of the review evidence is discussed next. All domains of the GRADE assessment, including inconsistency, indirectness, imprecision, and publication bias, should be discussed in relation to the conclusions. Selection bias of studies can be covered in the strengths and limitations section, along with other assumptions made during the review. It is reasonable to close by noting agreements and disagreements with past reviews.
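The way GRADE domains feed into an overall certainty rating can be sketched as a simple downgrading rule. This is a simplified illustration, not the full GRADE procedure: it assumes evidence from randomized trials starts at high and observational evidence at low, that each serious concern lowers the rating by one level, and it ignores the criteria for rating up. The function and variable names are hypothetical.

```python
LEVELS = ["very low", "low", "moderate", "high"]
DOMAINS = (
    "risk of bias",
    "inconsistency",
    "indirectness",
    "imprecision",
    "publication bias",
)


def grade_certainty(concerns, randomized=True):
    """Return a certainty rating after downgrading.

    concerns maps a domain name to the number of levels to downgrade
    (0 = no concern, 1 = serious, 2 = very serious).
    """
    unknown = set(concerns) - set(DOMAINS)
    if unknown:
        raise ValueError(f"unknown GRADE domain(s): {sorted(unknown)}")
    start = 3 if randomized else 1  # index into LEVELS
    level = start - sum(concerns.values())
    return LEVELS[max(level, 0)]    # the rating cannot drop below very low


# Illustrative judgements only.
print(grade_certainty({"imprecision": 1}))
print(grade_certainty({"inconsistency": 1, "imprecision": 2}))
print(grade_certainty({}, randomized=False))
```

Recording the judgement for each domain separately, rather than only the final rating, makes it easier to discuss each domain in relation to the conclusions.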

The conclusion is a summary of the review findings that guides readers in making policy or clinical practice decisions. It is important to mention both the positive and the negative salient results of the review. Present only the findings of your own review, and do not comment on outside sources. Recommendations for filling gaps in the evidence can be made at the end. The primary value of systematic reviews is to drive improvements in evidence-based practice, based on the needs of patients.

Reviews are often accompanied by other versions of the summary that present the major findings in plain language for the benefit of consumers and the general public. It is advisable to use bullet points, and subheadings can be phrased as questions (What is the intervention? Why is it important? What did we find? What are the limitations? What is the conclusion?). It is better to write in the first person and active voice to address readers directly.

All types of summaries should be consistent with the main text. When describing uncertainty, be clear about the study limitations. Because the summary gives an overall picture of the report, focus on the main results and the quality of evidence.

Availability of data and materials

Not applicable.

References

Chandler J, Cumpston M, Thomas J, Higgins JP, Deeks JJ, Clarke MJ, Li T, Page MJ, Welch VA. Chapter 1: introduction. Cochrane Handbook Syst Rev Interv Ver. 2019;5(0):3–8.

Cumpston M, Li T, Page MJ, Chandler J, Welch VA, Higgins JP, Thomas J. Updated guidance for trusted systematic reviews: a new edition of the Cochrane Handbook for Systematic Reviews of Interventions. Cochrane Database Syst Rev. 2019;2019(10).

Mueller M, D’Addario M, Egger M, Cevallos M, Dekkers O, Mugglin C, Scott P. Methods to systematically review and meta-analyse observational studies: a systematic scoping review of recommendations. BMC Med Res Methodol. 2018;18(1):1–8.

Chojecki D, Tjosvold L. Documenting and reporting the search process. HTAI Vortal [online]. 2020.

Sorden N. New MeSH Browser Available. NLM Tech Bull. 2016;(413):e2. https://www.nlm.nih.gov/pubs/techbull/nd16/nd16_mesh_browser_upgrade.html .

Tawfik GM, Dila KA, Mohamed MY, Tam DN, Kien ND, Ahmed AM, Huy NT. A step by step guide for conducting a systematic review and meta-analysis with simulation data. Trop Med Health. 2019;47(1):1–9.

Page MJ, McKenzie JE, Bossuyt PM, Boutron I, Hoffmann TC, Mulrow CD, et al. The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ. 2021;372:n71. https://doi.org/10.1136/bmj.n71 .

Ma LL, Wang YY, Yang ZH, Huang D, Weng H, Zeng XT. Methodological quality (risk of bias) assessment tools for primary and secondary medical studies: what are they and which is better? Military Med Res. 2020;7(1):1–1.

Higgins JP, Savović J, Page MJ, Elbers RG, Sterne JA. Assessing risk of bias in a randomized trial. Cochrane Handb Syst Rev Interv. 2019:205–28.

Deeks JJ, Dinnes J, D’Amico R, Sowden AJ, Sakarovitch C, Song F, Petticrew M, Altman DG. Evaluating non-randomised intervention studies. Health Technol Assess (Winchester, England). 2003;7(27):iii–173.

Sterne JAC, Hernán MA, Reeves BC, Savović J, Berkman ND, Viswanathan M, Henry D, Altman DG, Ansari MT, Boutron I, Carpenter JR, Chan AW, Churchill R, Deeks JJ, Hróbjartsson A, Kirkham J, Jüni P, Loke YK, Pigott TD, Ramsay CR, Regidor D, Rothstein HR, Sandhu L, Santaguida PL, Schünemann HJ, Shea B, Shrier I, Tugwell P, Turner L, Valentine JC, Waddington H, Waters E, Wells GA, Whiting PF, Higgins JPT. ROBINS-I: a tool for assessing risk of bias in non-randomized studies of interventions. BMJ. 2016;355:i4919. https://doi.org/10.1136/bmj.i4919 .

Downs SH, Black N. The feasibility of creating a checklist for the assessment of the methodological quality both of randomised and non-randomised studies of health care interventions. J Epidemiol Community Health. 1998;52(6):377–84.

Ahn E, Kang H. Introduction to systematic review and meta-analysis. Korean J Anesthesiol. 2018;71(2):103–12.

Lin L. Comparison of four heterogeneity measures for meta-analysis. J Eval Clin Pract. 2020;26(1):376–84.

Mohan BP, Adler DG. Heterogeneity in systematic review and meta-analysis: how to read between the numbers. Gastrointest Endosc. 2019;89(4):902–3.

Schünemann HJ. GRADE: from grading the evidence to developing recommendations. A description of the system and a proposal regarding the transferability of the results of clinical research to clinical practice. Zeitschrift Evidenz, Fortbildung Qualitat Gesundheitswesen. 2009;103(6):391–400.

Taito S. The construct of certainty of evidence has not been disseminated to systematic reviews and clinical practice guidelines; response to ‘The GRADE Working Group’ et al. J Clin Epidemiol. 2022;147:171.

Acknowledgements

This research received no specific grant from any funding agency in the public, commercial, or not-for-profit sectors.

Author information

Authors and affiliations.

University of Western Australia, Stirling Hwy, Crawley, Perth, WA, 6009, Australia

Mayura Thilanka Iddagoda & Leon Flicker

Perioperative Service, Royal Perth Hospital, Wellington Street, Perth, WA, 6000, Australia

Contributions

M.I. was involved in conceptualization, literature search, and writing the article. L.F. reviewed and corrected the contents. All authors reviewed the manuscript.

Corresponding author

Correspondence to Mayura Thilanka Iddagoda .

Ethics declarations

Ethics approval and consent to participate, consent for publication, competing interests.

The authors declare no competing interests.

Additional information

Publisher’s note.

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/ . The Creative Commons Public Domain Dedication waiver ( http://creativecommons.org/publicdomain/zero/1.0/ ) applies to the data made available in this article, unless otherwise stated in a credit line to the data.

About this article

Cite this article.

Iddagoda, M.T., Flicker, L. Clinical systematic reviews – a brief overview. BMC Med Res Methodol 23 , 226 (2023). https://doi.org/10.1186/s12874-023-02047-8

Received : 02 March 2023

Accepted : 27 September 2023

Published : 10 October 2023

DOI : https://doi.org/10.1186/s12874-023-02047-8

- Systematic review

- Meta-analysis

- Risk of bias

- Certainty of evidence

BMC Medical Research Methodology

ISSN: 1471-2288

The PRISMA 2020 statement: an updated guideline for reporting systematic reviews

- Joanne E McKenzie , associate professor 1 ,

- Patrick M Bossuyt , professor 2 ,

- Isabelle Boutron , professor 3 ,

- Tammy C Hoffmann , professor 4 ,

- Cynthia D Mulrow , professor 5 ,

- Larissa Shamseer , doctoral student 6 ,

- Jennifer M Tetzlaff , research product specialist 7 ,

- Elie A Akl , professor 8 ,

- Sue E Brennan , senior research fellow 1 ,

- Roger Chou , professor 9 ,

- Julie Glanville , associate director 10 ,

- Jeremy M Grimshaw , professor 11 ,

- Asbjørn Hróbjartsson , professor 12 ,

- Manoj M Lalu , associate scientist and assistant professor 13 ,

- Tianjing Li , associate professor 14 ,

- Elizabeth W Loder , professor 15 ,

- Evan Mayo-Wilson , associate professor 16 ,

- Steve McDonald , senior research fellow 1 ,

- Luke A McGuinness , research associate 17 ,

- Lesley A Stewart , professor and director 18 ,

- James Thomas , professor 19 ,

- Andrea C Tricco , scientist and associate professor 20 ,

- Vivian A Welch , associate professor 21 ,

- Penny Whiting , associate professor 17 ,

- David Moher , director and professor 22

- 1 School of Public Health and Preventive Medicine, Monash University, Melbourne, Australia

- 2 Department of Clinical Epidemiology, Biostatistics and Bioinformatics, Amsterdam University Medical Centres, University of Amsterdam, Amsterdam, Netherlands

- 3 Université de Paris, Centre of Epidemiology and Statistics (CRESS), Inserm, F 75004 Paris, France

- 4 Institute for Evidence-Based Healthcare, Faculty of Health Sciences and Medicine, Bond University, Gold Coast, Australia

- 5 University of Texas Health Science Center at San Antonio, San Antonio, Texas, USA; Annals of Internal Medicine

- 6 Knowledge Translation Program, Li Ka Shing Knowledge Institute, Toronto, Canada; School of Epidemiology and Public Health, Faculty of Medicine, University of Ottawa, Ottawa, Canada

- 7 Evidence Partners, Ottawa, Canada

- 8 Clinical Research Institute, American University of Beirut, Beirut, Lebanon; Department of Health Research Methods, Evidence, and Impact, McMaster University, Hamilton, Ontario, Canada

- 9 Department of Medical Informatics and Clinical Epidemiology, Oregon Health & Science University, Portland, Oregon, USA

- 10 York Health Economics Consortium (YHEC Ltd), University of York, York, UK

- 11 Clinical Epidemiology Program, Ottawa Hospital Research Institute, Ottawa, Canada; School of Epidemiology and Public Health, University of Ottawa, Ottawa, Canada; Department of Medicine, University of Ottawa, Ottawa, Canada

- 12 Centre for Evidence-Based Medicine Odense (CEBMO) and Cochrane Denmark, Department of Clinical Research, University of Southern Denmark, Odense, Denmark; Open Patient data Exploratory Network (OPEN), Odense University Hospital, Odense, Denmark

- 13 Department of Anesthesiology and Pain Medicine, The Ottawa Hospital, Ottawa, Canada; Clinical Epidemiology Program, Blueprint Translational Research Group, Ottawa Hospital Research Institute, Ottawa, Canada; Regenerative Medicine Program, Ottawa Hospital Research Institute, Ottawa, Canada

- 14 Department of Ophthalmology, School of Medicine, University of Colorado Denver, Denver, Colorado, United States; Department of Epidemiology, Johns Hopkins Bloomberg School of Public Health, Baltimore, Maryland, USA